Sam Altman offers us a new essay, The Gentle Singularity. It’s short (if a little long to quote in full), so given you read my posts it’s probably worth reading the whole thing.

First off, thank you to Altman for publishing this and sharing his thoughts. This was helpful, and contained much that was good. It’s important to say that first, before I start tearing into various passages, and pointing out the ways in which this is trying to convince us that everything is going to be fine when very clearly the default is for everything to be not fine.

I have now done that. So here we go.

Sam Altman (CEO OpenAI): We are past the event horizon; the takeoff has started. Humanity is close to building digital superintelligence, and at least so far it’s much less weird than it seems like it should be.

Robots are not yet walking the streets, nor are most of us talking to AI all day. People still die of disease, we still can’t easily go to space, and there is a lot about the universe we don’t understand.

And yet, we have recently built systems that are smarter than people in many ways, and are able to significantly amplify the output of people using them. The least-likely part of the work is behind us; the scientific insights that got us to systems like GPT-4 and o3 were hard-won, but will take us very far.

Assuming we agree that the takeoff has started, I would call that the ‘calm before the storm,’ or perhaps ‘how exponentials work.’

Being close to building something is not going to make the world look weird. What makes the world look weird is actually building it. Some people (like Tyler Cowen) claim o3 is AGI, but everyone agrees we don’t have ASI (superintelligence) yet.

Also, frankly, yeah, it’s super weird that we have these LLMs we can talk to; it’s just that you get used to ‘weird’ things remarkably quickly. It seems like it ‘should be weird’ (or perhaps ‘weirder’?) because what we do have now is still unevenly distributed and not well-exploited, and many of us including Altman are comparing the current level of weirdness to the near-future True High Weirdness that is coming, much of which is already baked in.

If anything, I think the current low level of High Weirdness is due to us, as I argue later, not being used to these new capabilities. Why do we see so few scams, spam and slop and bots and astroturfing and disinformation, deepfakes, cybercrime, giant boosts in productivity, talking mainly to AIs all day, actual learning and so on? Mostly I think it’s because People Don’t Do Things and don’t know what is possible.

Sam Altman: 2025 has seen the arrival of agents that can do real cognitive work; writing computer code will never be the same. 2026 will likely see the arrival of systems that can figure out novel insights. 2027 may see the arrival of robots that can do tasks in the real world.

That’s a bold prediction, modulo the ‘may’ and the values of ‘tasks in the real world’ and ‘novel insights.’

And yes, I agree that the following is true, as long as you notice the word ‘may’:

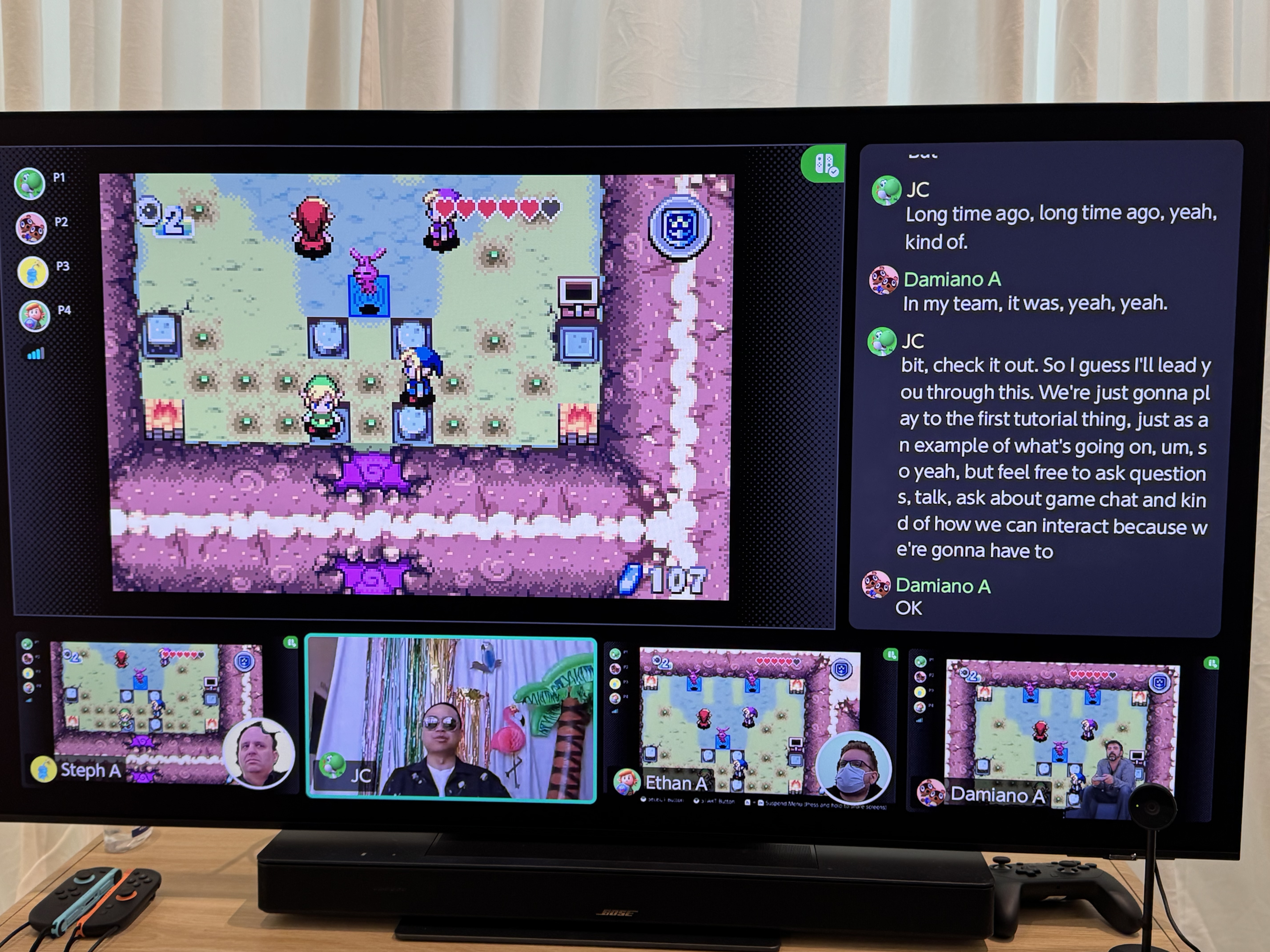

In the most important ways, the 2030s may not be wildly different. People will still love their families, express their creativity, play games, and swim in lakes.

Note not only the ‘may’ but the low bar for ‘not be wildly different.’ The people of 1000 BCE did all those things, and plausibly they also did them in 10,000 BCE or 100,000 BCE. Is that what would count as ‘not wildly different’?

This is essentially asserting that people in the 2030s will be alive. Well, I hope so!

Already we live with incredible digital intelligence, and after some initial shock, most of us are pretty used to it.

I get why one would say this, but it seems very wrong? First of all, who is this ‘us’ of which you speak? If the ‘us’ refers to the people of Earth or of the United States, then the statement to me seems clearly false. If it refers to Altman’s readers, then the claim is at least plausible. But I still think it is false. I’m not used to o3-pro. Even I haven’t found the time to properly figure out what I can fully do with even o3 or Opus without building tools.

We are ‘used to this’ in the sense that we are finding ways to mostly ignore it because life is, as Agnes Callard says, coming at us 15 minutes at a time, and we are busy, so we take some low-hanging fruit and then take it for granted, and don’t notice how much is left to pick. We tell ourselves we are used to it so we can go about our day.

This is how the singularity goes: wonders become routine, and then table stakes.

We already hear from scientists that they are two or three times more productive than they were before AI.

I note that Robin Hanson responded to this here:

Robin Hanson: “We already hear from scientists that they are two or three times more productive than they were before AI.”

If so, wouldn’t their wages now be 2-3x larger too?

Abram: No, why would they be?

Robin Hanson: Supply and demand.

o3-pro, you want to take this one? Oh right: nominal and contractual stickiness, institutional price controls, surplus division, supply response, measurement mismatch, and time reallocation. You get maybe a 10% pay bump; wages track bargained marginal revenue, not raw technical output, and both lag the technical shock by years.

I did, however, notice my economics being several times more productive there.

From here on, the tools we have already built will help us find further scientific insights and aid us in creating better AI systems. Of course this isn’t the same thing as an AI system completely autonomously updating its own code, but nevertheless this is a larval version of recursive self-improvement.

There are other self-reinforcing loops at play. The economic value creation has started a flywheel of compounding infrastructure buildout to run these increasingly-powerful AI systems. And robots that can build other robots (and in some sense, datacenters that can build other datacenters) aren’t that far off.

If we have to make the first million humanoid robots the old-fashioned way, but then they can operate the entire supply chain—digging and refining minerals, driving trucks, running factories, etc.—to build more robots, which can build more chip fabrication facilities, data centers, etc, then the rate of progress will obviously be quite different.

Yep. Then table stakes, recursive self-improvement, self-perpetuating growth, a robot-based parallel physical production economy. His timeline seems to be AI 2028.

Then true superintelligence, then it keeps going after that, then what?

The rate of technological progress will keep accelerating, and it will continue to be the case that people are capable of adapting to almost anything.

…

The rate of new wonders being achieved will be immense. It’s hard to even imagine today what we will have discovered by 2035.

It’s important to notice that this ‘adapt to anything’ is true in some ways and not in others. There are some things that are like decapitations, in that you very much cannot adapt because they kill you, dead. Or that deny you the necessary resources to survive, or to compete. You can’t ‘adapt’ to compete with someone or something sufficiently more capable than you.

We probably won’t adopt a new social contract all at once, but when we look back in a few decades, the gradual changes will have amounted to something big.

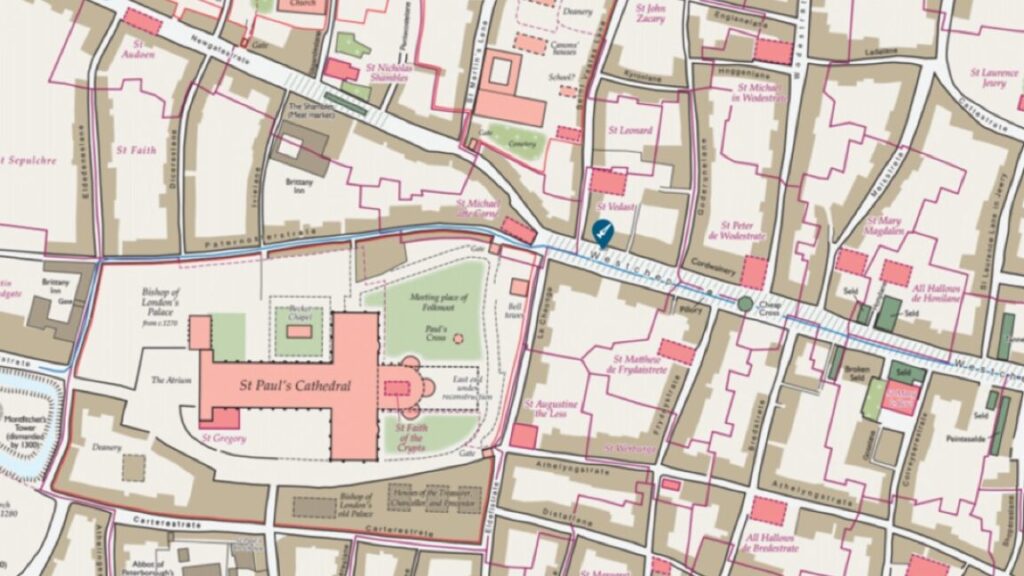

If history is any guide, we will figure out new things to do and new things to want, and assimilate new tools quickly (job change after the industrial revolution is a good recent example).

I sigh every time I see this ‘well, in the past we’ve adapted and there were more things to do, so in the future, when we make superintelligent things universally better at everything, there’s no reason this shouldn’t still be true; we just need some time.’ Um, no? Or at least, definitely not by default?

I seriously don’t understand how you can expect robots by 2028 and wonders beyond the imagination along with superintelligence by 2035, and still think that humans will mostly do the things we usually do, only with more capabilities at our disposal, or something? It’s like there’s some sort of semantic stop sign against thinking about the obvious implications? Is there an actual model of what this world looks like?

A subsistence farmer from a thousand years ago would look at what many of us do and say we have fake jobs, and think that we are just playing games to entertain ourselves since we have plenty of food and unimaginable luxuries. I hope we will look at the jobs a thousand years in the future and think they are very fake jobs, and I have no doubt they will feel incredibly important and satisfying to the people doing them.

There are two halves to this.

The first half is, would the subsistence farmer think the jobs were fake? For some jobs yes, but once you explained what was going on and they got over future shock, I don’t think their breakdown of real versus fake would be that different from that of a farmer today. They might think a lot of them are not ‘necessary,’ that they were products of great luxury, but that would not be different than how they thought about the jobs of those at their king’s court.

I too hope that when I look a thousand years into the future I see people at all, who are actually alive, doing things at all. I hope they move beyond thinking of those things quite as ‘jobs,’ but I will happily take jobs. This time is different, however. Before, humans built tools and grew more capable through those tools, opening up our ability to do more things.

The thing Altman is describing is very obviously, as I keep saying, not a mere tool. Humans will no longer be the strongest optimizers, or the smartest minds, or the most capable agents. Anything we can do, AI can do better, except insofar as the thing doesn’t count unless a human does it. Otherwise, an AI does the new job too.

Altman talks about some people deciding to ‘plug in’ to machine-human interfaces while others choose not to. Won’t this be like deciding not to have a phone or not use computers, only vastly more so, and also the computer and phone are applying for your job? Then again, if all the jobs that involve the AI are done better by the AI alone anyway, including manual labor via robots, perhaps you don’t lose that much by not plugging in?

And indeed, if there are jobs that ‘require you be a human,’ they might also require that you not be plugged in.

Think about chess. First humans beat AIs. Then AIs beat humans, but for a brief period AI and humans working together, essentially these ‘merges,’ still beat AIs. Then the humans no longer added anything. We’re going through the same process in a lot of places, like diagnostic reasoning, where the doctor is arguably already a net negative when they don’t accept the AI’s opinion.

Now, humans use the AIs to train, perhaps, but they don’t ‘merge’ or ‘plug in’ because if they did that then the AIs would be playing chess. We want two humans to play chess, so they need to be fully unplugged, or the exercise loses its meaning.

So, again, seriously, ‘merge’? Why do people think this is a thing?

Looking forward, this sounds hard to wrap our heads around. But probably living through it will feel impressive but manageable. From a relativistic perspective, the singularity happens bit by bit, and the merge happens slowly.

I have no idea where this expectation is coming from, other than that the people won’t have a say in it. The singularity will, as Douglas Adams wrote about deadlines, give a whoosh as it flies by. That will be that. There’s nothing to manage: your services are no longer required.

Jaime Sevilla notes that Altman’s estimate here, that the average ChatGPT query uses about 0.34 watt-hours (about what an oven would use in a little over one second) and roughly one fifteenth of a teaspoon of water, is similar to Epoch’s estimate of 0.3 watt-hours, which was itself a 90% reduction from previous estimates. Compute efficiency is improving rapidly. Also note o3’s 80% price drop.

Like Dario Amodei’s Machines of Loving Grace, the latest Altman essay spends the bulk of its time hand-waving away all the important concerns about such futures, both in terms of getting there and in terms of which ‘there’ we even want to get to. It’s basically wish fulfillment. There’s some value in pointing out that such worlds will, to the extent we can direct those worlds and their AIs to do things, be very good at wish fulfillment. There are some people who need to hear that. But that’s not the hard part.

Finally, we get to the ‘serious challenges to confront’ section.

There are serious challenges to confront along with the huge upsides. We do need to solve the safety issues, technically and societally, but then it’s critically important to widely distribute access to superintelligence given the economic implications. The best path forward might be something like:

1. Solve the alignment problem, meaning that we can robustly guarantee that we get AI systems to learn and act towards what we collectively really want over the long-term (social media feeds are an example of misaligned AI; the algorithms that power those are incredible at getting you to keep scrolling and clearly understand your short-term preferences, but they do so by exploiting something in your brain that overrides your long-term preference).

2. Then focus on making superintelligence cheap, widely available, and not too concentrated with any person, company, or country. Society is resilient, creative, and adapts quickly. If we can harness the collective will and wisdom of people, then although we’ll make plenty of mistakes and some things will go really wrong, we will learn and adapt quickly and be able to use this technology to get maximum upside and minimal downside. Giving users a lot of freedom, within broad bounds society has to decide on, seems very important. The sooner the world can start a conversation about what these broad bounds are and how we define collective alignment, the better.

That’s it. Allow me to summarize this plan:

1. Solve the alignment problem so AIs learn and act towards what we collectively really want over the long term.

2. Make superintelligence cheap, widely available, and not too concentrated.

3. We’ll adapt, muddle through, figure it out, user freedom, it’s all good, society is resilient and adapts quickly.

I’m sorry, but that’s contradictory, it doesn’t address the hard questions, and it isn’t an answer.

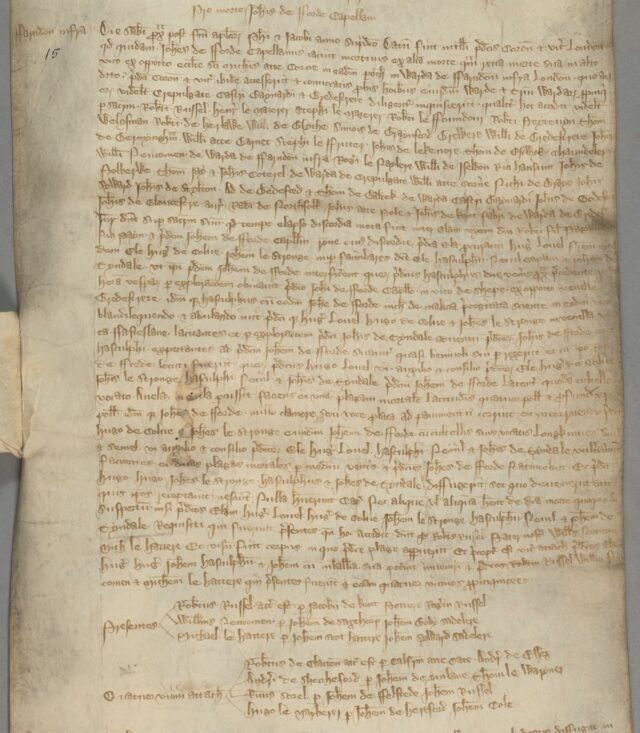

Instead it passes the buck to ‘society’ to discuss and answer the questions, when for various overdetermined reasons we do not seem capable of having these conversations in any serious fashion – and indeed, the moment Altman comes up against possibly joining such a conversation, he punts and says ‘you first.’ He even seems to acknowledge this, and to want to wait until after the singularity to figure out how to deal with its effects. He says ‘the sooner the better,’ but the plan is clearly not to get to this all that soon.

This all assumes facts not in evidence and likely false in context. Why should we expect society to be resilient in this situation? What even is ‘society’ when the smartest minds and most capable agents are AIs? How do users have this freedom, and how is the intelligence widely available, if the agents are all going to act towards ‘what we collectively really want over the long term’? How do you reconcile these conflicting goals? Who decides what all of that means?

If the power is diffused, how do we avoid inevitable gradual disempowerment? What ‘broad bounds’ are we going to ‘decide upon,’ and how are we deciding? How would certain people feel about the call for diffusion of power outside of ‘any one country,’ and how does this square with all the ‘America must win the race’ talk? Either the power diffusion is meaningful or it isn’t. Given history, why would you expect there to be such a voluntary diffusion of power?

That’s in addition to not addressing the technical aspect of any of this at all. Yes, good, ‘solve the alignment problem,’ but how the heck do you propose we do that, for any definition of that plan, especially if the solution has to survive wide distribution?

I get that none of that is ‘the point’ of The Gentle Singularity. But the right answers to those questions are the only way such a singularity can stay gentle, or end well. It’s not an optional conversation.

Eli Lifland: I’m most concerned about not being able to align superintelligence with any goal, rather than only being able to align them with short-term goals.

I’m concerned about this characterization of the alignment problem [the #1 above].

Appreciate him publishing this.

Jeffrey Ladish: Huh it seems right to me. Being able to align them to a long term goal is a subset of being able to align them to any goal. I think Sam is wildly optimistic but the target doesn’t seem wrong

Eli Lifland: I think with the example it gives the wrong impression.

Social media feeds are in many ways a highly helpful example and intuition pump, as they illustrate what ‘aligned’ means here. Those feeds are clearly aligned to the companies in question. There’s an additional question of whether such actions are indeed in the best interests of the companies, but for this purpose I think we should accept that they likely are. Thus, alignment here means alignment to the user’s longer-term best interests, and how long-term and how paternalistic that should be are left as open questions.

Where this is potentially misleading: if we did get a feed that was aligned to the user’s ‘true’ preferences in some sense, would it be aligned? What if that was against what we collectively ‘really want,’ let’s say because it encourages too much social media use by being too good, or it doesn’t push you enough towards making new friends? And that’s only a microcosm. Not doing the social media misalignment thing is relatively easy – we all know how to align the algorithm to the user far better, and we all know why it isn’t done. The general case is vastly harder.

Matt Yglesias wishes Sam Altman and others would tell us which policy ideas they think we should entertain, since Altman mentions that a much richer world could entertain new ideas.

My honest-Altman response is two-fold.

1. We’re not richer yet, so we can’t yet entertain them. There’s a reason Altman says we won’t adopt the new social contract all at once, so it would be unwise to tell the world what those ideas are. I think this is actually a strong argument. There are many aspects of the future that will require sacred value tradeoffs; if you take any side of one of those, you open yourself up to attack, and if you do so before it is clear the tradeoff is forced, it is vastly worse. There’s no winning doing this.

2. If we do get into this richer position with the ability to meaningfully enact policy, if we are all still alive and in control over the future, then this is the part where we can adapt and muddle through and fix things in post. We can have that discussion later (and have superintelligent help). There’s no need to get distracted by this.

An obvious objection is, what makes us think we can use, demand or enforce social contracts in such a future? The foundations of social contract theory don’t hold in a world with superintelligence. I think, contra many science fiction writers, that a very rich future world will choose to treat its less fortunate rather well even if nothing is forcing the elite to do so, but also nothing will be forcing the elite to do so.

Finally, like Rob Wiblin, I notice I am confused by this closing:

Sam Altman: May we scale smoothly, exponentially and uneventfully through superintelligence.

It is hard not to interpret this, and many aspects of the essay, as essentially saying ‘don’t worry, nothing to see here, we got this, wonders beyond your imagination with no downsides that can’t be easily fixed, so don’t regulate me. Just go gently into that good night, and everything will be fine.’

Daniel Faggella: the singularity is going to hit so hard it’ll rip the skin off your fucking bones tbh

It’ll fucking shred not only hominid-ness, put probably almost any semblance of hominid values or works.

It’ll be a million things at once, or a trillion. it sure af won’t be gentle lol

Can’t blame sam for saying what he’s saying, the incentives make it hard to say otherwise.

I don’t understand why people respond so often with the common counterpoint of ‘well the singularity hasn’t happened yet, so the idea that it will hit you hard when it does come hasn’t been borne out.’ That doesn’t bear on the question at all.

Max Kesin sums up the appropriate response: