Reported Dreamcast addict Tim Walz is now an unofficial Crazy Taxi character

Are you ready? Here. We. Go! —

New “Tim Walz Edition” mod lets the VP hopeful earn some ca-razy (campaign) money.

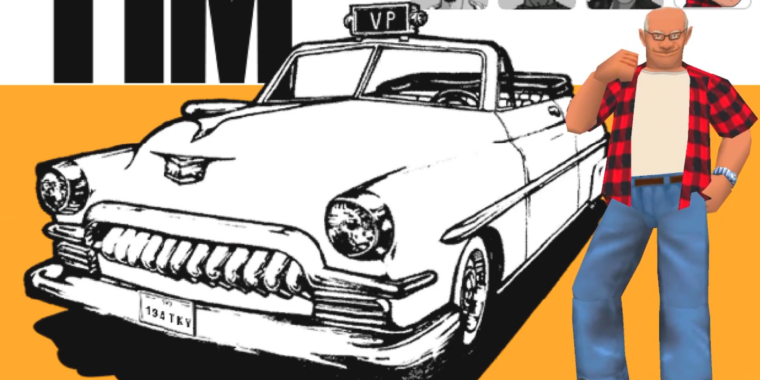

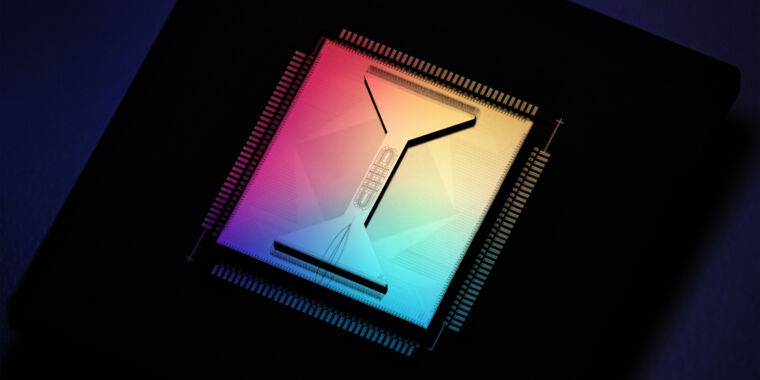

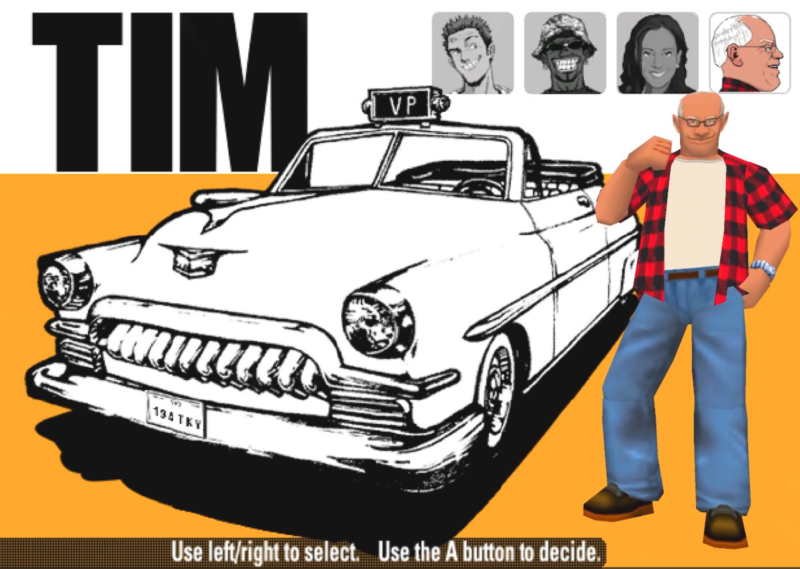

Enlarge / The “VP” on the cab light is a nice touch.

Last month, in a profile of newly named Democratic vice presidential candidate Tim Walz, The New York Times included a throwaway line about “the time his wife had seized his Dreamcast, the Sega video game console, because he had been playing to excess.” Weeks later, that anecdote formed the unlikely basis for the unlikely Crazy Taxi: Tim Walz Edition mod, which inserts the Minnesota governor (and top-of-the-ticket running mate Kamala Harris) into the Dreamcast classic driving game.

“Rumor has it that Tim Walz played Crazy Taxi so much his wife took his Dreamcast away from him… so I decided to put him in the game,” modder Edward La Barbera wrote on the game’s Itch.io page.

Unfortunately, the pay-what-you-want mod can’t just be burned to a CD-R and played on actual Dreamcast hardware. Currently, the mod’s visual files are tuned to work only with Dreamcast emulator Flycast, which includes built-in features for replacing in-game textures.

After a few minutes of playing with settings and folder structures, launching the mod replaces the Crazy Taxi character Gus with a model of Walz sporting an open-buttoned black and red flannel shirt (a look The Wall Street Journal said has “made him a DNC fashion icon”). There are plenty of little visual touches beyond the Walz character, too, including “D” and “R” gears labeled as “Democrat” and “Republican,” a fare counter that’s labeled as “campaign $$$,” and a countdown measuring the seconds “till election time.”

The mod files also include new voice lines for Walz and Harris sourced from “their respective DNC speeches.” Getting those files to work in a standard Crazy Taxi ROM requires a good deal of fiddling with third-party ISO management programs, though, so you might be better off watching a gameplay video if you just want to hear a taxi-driving Walz cry out, “Mind your own business” to an impatient passenger.

The mod lets Walz and Harris (unofficially) join a long line of real political figures appearing in video games, including Bill Clinton and Al Gore as hidden NBA Jam characters, Barack Obama and Sarah Palin as DLC characters in Mercenaries 2 and, uh, Abraham Lincoln leading a mechanical strike team in 3DS title Code Name: S.T.E.A.M.

Where are they now: Walz’s Dreamcast edition

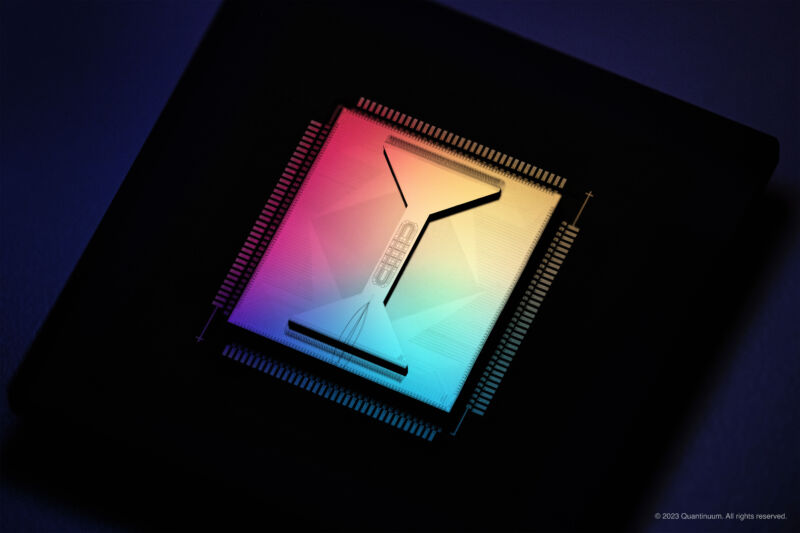

Enlarge / Current owner Bryn Tanner poses with the actual Dreamcast once owned by Tim Walz.

While Walz and the campaign haven’t commented publicly on his reported Dreamcast addiction, there’s been a surprising amount of detailed reporting on the fate of the Governor’s fabled Dreamcast itself. Former Walz student and campaign intern Tom Johnson told IGN that Walz brought the old console in as a potential donation in the summer of 2007. Tanner said it didn’t get much use in the office break room, but after the campaign, he took the system—complete with a copy of Crazy Taxi in the drive—home to play with roommate Alex Gaterud.

From there, Gaterud sold the console on Craigslist for a mere $25 in 2012—he recalled to IGN that, at the time, “advertising something ‘formerly owned by a US Congressman’ doesn’t add any value on Craigslist.” The customer in that Craigslist sale, Bryn Tanner, was posting about the acquisition online years before Walz became a national political celebrity.

More recently, Tanner has taken to posting TikTok videos about his relatively notable console and showing it off at local gaming convention 2D Con. “I’m basically famous,” Tanner joked in a post following the NYT story.

Walz, 60, is on the edge of the first generation of national politicians that grew up in the era of early home video game consoles (he would have been about 13 when the Atari 2600 launched). Younger politicians have even started to cater more specifically to the gaming demographic, too, as when congresswoman Alexandria Ocasio-Cortez appeared on a charity-focused Donkey Kong 64 marathon Twitch stream to promote transgender awareness and political action.

But some of Walz’s political opponents have tried to make hay of the fact that he was apparently hooked on a console that came out when he was in his mid-30s. “This is reported as a fun, relatable story, but doesn’t anybody else find a 35-year-old man getting that addicted to video games a little sad?” conservative activist Charlie Kirk posted on social media.

To the people who knew Walz as a gamer, though, it just makes him more human. “I think it’s sort of the specificity of it that really gets me,” Tanner told the Minneapolis Star-Tribune last month. “The fact that it wasn’t just, ‘Oh, my wife took away my video game,’ because that could be anything. It was the Dreamcast. It was this kind of underrated flash in the pan.”

Reported Dreamcast addict Tim Walz is now an unofficial Crazy Taxi character Read More »