Explain it like I’m 5: Why is everyone on speakerphone in public?

The key to working at a place like Ars Technica is solid news judgment. I’m talking about the kind of news judgment that knows whether a pet peeve is merely a pet peeve or whether it is, instead, a meaningful example of the Ways that Technology is Changing our World.

The difference between the two is one of degree: A pet peeve may drive me nuts but does not appear to impact anyone else. A Ways that Technology is Changing our World story must be about something that drives a lot of people nuts.

“But where is the threshold?” I hear you asking plaintively. “It’s extremely important that I know when something crosses the line from pet peeve to important, chin-stroking journalism topic!”

Fortunately, the answer is simple. The threshold has been breached when your local public transit agency puts up a sign about the behavior in question.

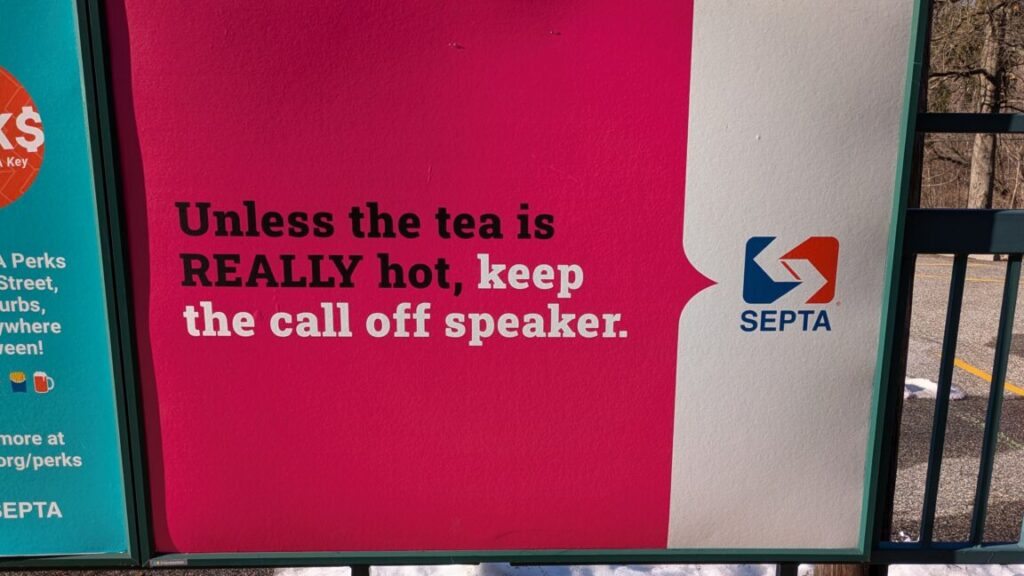

Which brings me to the sign I saw yesterday in Philadelphia.

“Unless the tea is REALLY hot, keep the call off speaker,” it said.

(For those not in the US, “tea” in this context means gossip or news.)

SEPTA, the local transit agency, runs the buses and commuter rail in Philadelphia, and you can tell from the light-hearted-but-seriously-don’t-do-this tone of the message that speakerphone-wielding passengers are now widely complained about by their fellow riders.

I share their disdain, but for me, the dark and judgmental thoughts I have when I see this behavior are also paired with confusion. Why is it happening? Do these people not know that it is actually more work to hold your phone out in front of you than up to your ear? Do they have no common decency, manners, or taste? Do they genuinely not care if everyone in the frozen foods aisle overhears them talking about Aunt Kathy’s diagnosis? It’s bizarre.

At least when it comes to something like TikTok or Spotify, there’s a certain logic. Perhaps you have no headphones but need to unwind after a long day, and you just can’t imagine anyone who might not enjoy the soothing sounds of [Harry Styles/Cannibal Corpse/Wu-Tang Clan]?

Explain it like I’m 5: Why is everyone on speakerphone in public? Read More »