Waymo to roll out driverless taxis on highways in three US cities

Mawakana added that her company did not think in terms of “how many [incidents] are allowable” and that the challenge was ensuring the “bar on safety” was high enough.

Highway routes will initially be available to users who have opted in to early access for new features, before being rolled out more widely.

Passengers will be able to travel to and from the airport in San Jose, in the San Francisco Bay Area. The company already serves Phoenix airport but will now be able to access it on the highway.

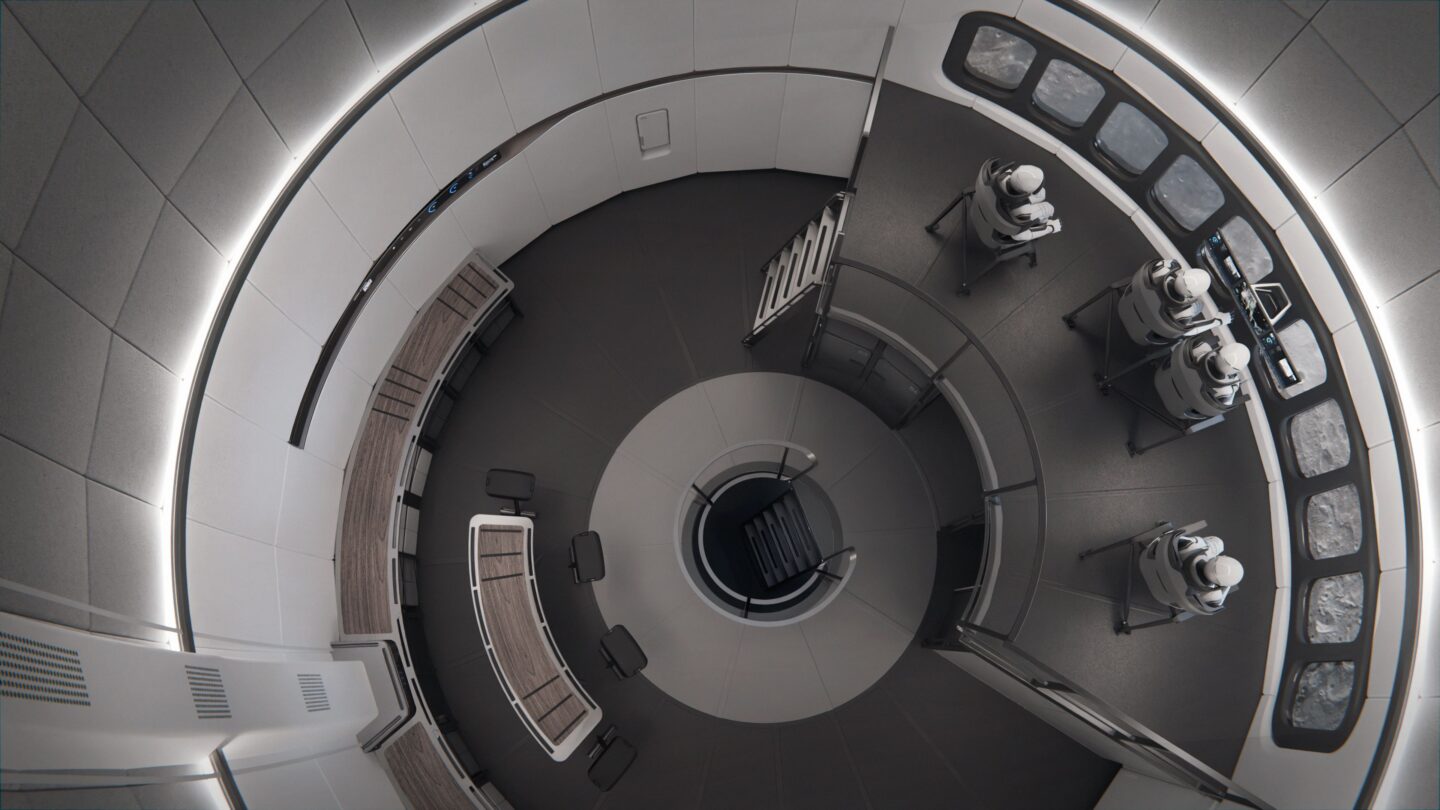

Waymo, which launched its paid driverless taxi services in 2020, operates more than 250,000 rides a week. It has a fleet of more than 2,000 vehicles across its five US markets, primarily made up of Jaguar Land Rover electric I-Pace vehicles kitted out with its bespoke sensors and computing system.

Tesla, its main US rival, in September expanded services to the public as part of its robotaxi pilot in Austin, Texas, while Amazon-owned Zoox recently began services in Las Vegas.

Waymo said its vehicles would exit the highway if they encountered technical issues. It also said it had partnered with highway patrol to prepare for circumstances where a vehicle would need to pull over.

The company has reported more than 1,250 collisions involving its vehicles since 2021, according to the National Highway Traffic Safety Administration. These have included accidents with passenger vehicles, trucks, and other objects, including a closing gate.

© 2025 The Financial Times Ltd. All rights reserved. Not to be redistributed, copied, or modified in any way.
