AI #139: The Overreach Machines

The big release this week was OpenAI giving us a new browser, called Atlas.

The idea of Atlas is that it is Chrome, except with ChatGPT integrated throughout to let you enter agent mode and chat with web pages and edit or autocomplete text, and that will watch everything you do and take notes to be more useful to you later.

From the consumer standpoint, does the above sound like a good trade to you? A safe place to put your trust? How about if it also involves (at least for now) giving up many existing Chrome features?

From OpenAI’s perspective, a lot of that could have been done via a Chrome extension, but by making a browser some things get easier, and more importantly OpenAI gets to go after browser market share and avoid dependence on Google.

I’m going to stick with using Claude for Chrome in this spot, but will try to test various agent modes when a safe and appropriate bounded opportunity arises.

Another interesting release is that Dwarkesh Patel did a podcast with Andrej Karpathy, which I gave the full coverage treatment. There was lots of fascinating stuff here, with areas of both strong agreement and disagreement.

Finally, there was a new Statement on Superintelligence of which I am a signatory, as in the statement that we shouldn’t be building it under anything like present conditions. There was also some pushback, and pushback to the pushback. The plan is to cover that tomorrow.

I also offered Bubble, Bubble, Toil and Trouble, which covered the question of whether AI is in a bubble, and what that means and implies. If you missed it, check it out. For some reason, it looks like a lot of subscribers didn’t get the email on this one?

Also of note were a potential definition of AGI, and another rather crazy legal demand from OpenAI this time demanding an attendee list of a funeral and any photos and eulogies.

-

Language Models Offer Mundane Utility. Therapy, Erdos problems, the army.

-

Language Models Don’t Offer Mundane Utility. Erdos problem problems.

-

Huh, Upgrades. Claude gets various additional connections.

-

On Your Marks. A proposed definition of AGI.

-

Language Barrier. Do AIs respond differently in different languages.

-

Choose Your Fighter. The rise of Codex and Claude Code and desktop apps.

-

Get My Agent On The Line. Then you have to review all of it.

-

Fun With Media Generation. Veo 3.1. But what is AI output actually good for?

-

Copyright Confrontation. Legal does not mean ethical.

-

You Drive Me Crazy. How big a deal is this LLM psychosis thing, by any name?

-

They Took Our Jobs. Taking all the jobs, a problem and an opportunity.

-

A Young Lady’s Illustrated Primer. An honor code for those without honor.

-

Get Involved. Foresight, Asterisk, FLI, CSET, Savash Kapoor is on the market.

-

Introducing. Claude Agent Skills, DeepSeek OCR.

-

In Other AI News. Grok recommendation system still coming real soon, now.

-

Show Me the Money. Too much investment, or not nearly enough?

-

So You’ve Decided To Become Evil. Seriously, OpenAI, this is a bit much.

-

Quiet Speculations. Investigating the CapEx buildout, among other things.

-

People Really Do Not Like AI. Ron Desantis notices and joins the fun.

-

The Quest for Sane Regulations. The rise of the super-PAC, and what to do.

-

Alex Bores Launches Campaign For Congress. He’s a righteous dude.

-

Chip City. Did Xi truly have a ‘bad moment’ on rare earths?

-

The Week in Audio. Sam Altman, Brian Tse on Cognitive Revolution.

-

Rhetorical Innovation. Things we can agree upon.

-

Don’t Take The Bait. A steelman is proposed, and brings clarity.

-

Do You Feel In Charge? Also, do you feel smarter than the one in charge?

-

Tis The Season Of Evil. Everyone is welcome at Lighthaven.

-

The Lighter Side. Autocomplete keeps getting smarter.

A post on AI therapy, noting it has many advantages: 24/7 on demand, super cheap, you can think of it as a diary with feedback. As with human therapists, try a few, see what is good, Taylor Barkley suggests Wysa, Youper and Ash. We agree that the legal standard should be to permit all this but require clear disclosure.

Make key command decisions as an army general? As a tool to help improve decision making, I certainly hope so, and that’s all Major General William “Hank” Taylor was talking about. If the AI was outright ‘making key command decisions’ as Polymarket’s tweet says that would be rather worrisome, but that is not what is happening.

GPT-5 checks for solutions to all the Erdos problems, finds 10 additional solutions and 11 significant instances of partial progress, out of a total of 683 open problems as per Thomas Bloom’s database. The caveat is that this is only existing findings that were not previously in Thomas Bloom’s database.

People objected to the exact tweet used to announce the search for existing Erdos problem solutions, including criticizing me for quote tweeting it, and sufficiently so to get secondary commentary, and resulting in the OP ultimately getting deleted, and this extensive explanation offered of exactly what was accomplished. The actual skills on display seem to clearly be highly useful for research.

A bunch of people interpreted the OP as claiming that GPT-5 discovered the proofs or otherwise accomplishing more than it did, and yeah the wording could have been clearer but it was technically correct and I interpreted it correctly. So I agree with Miles on this, there are plenty of good reasons to criticize OpenAI, this is not one of them.

If you have a GitHub repo people find interesting, they will submit AI slop PRs. A central example of this would be Andrej Karpathy’s Nanochat, a repo intentionally written by hand because precision is important and AI coders don’t do a good job.

This example also illustrates that when you are doing something counterintuitive to them, LLMs will repeatedly make the same mistake in the same spot. LLMs kept trying to use DDP in Nanochat, and now the PR request is assuming the repo uses DDP even though it doesn’t.

Meta is changing WhatsApp rules so 1-800-ChatGPT will stop working there after January 15, 2026.

File this note under people who live differently than I do:

Prinz: The only reason to access ChatGPT via WhatsApp was for airplane flights that offer free WhatsApp messaging. Sad that this use case is going away.

Claude now connects to Microsoft 365 and they’re introducing enterprise search.

Claude now connects to Benchling, BioRender, PubMed, Scholar Gateway, 10x Genomics and Synapse.org, among other platforms, to help you with your life sciences work.

Claude Code can now be directed from the web.

Claude for Desktop and (for those who have access) Claude for Chrome exist as alternatives to Atlas, see Choose Your Fighter.

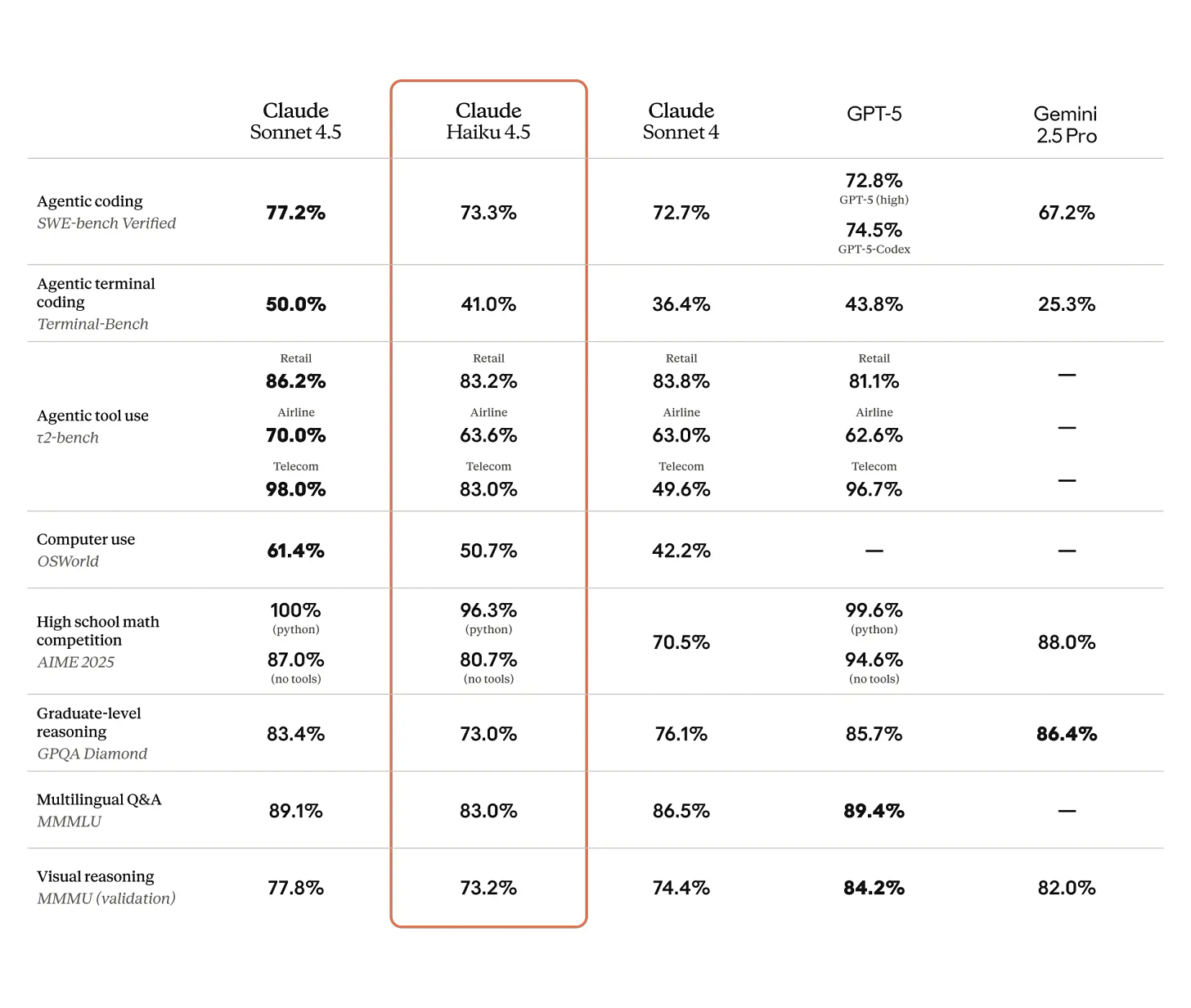

SWE-Bench-Pro updates its scores, Claude holds the top three spots now with Claude 4.5 Sonnet, Claude 4 and Claude 4.5 Haiku.

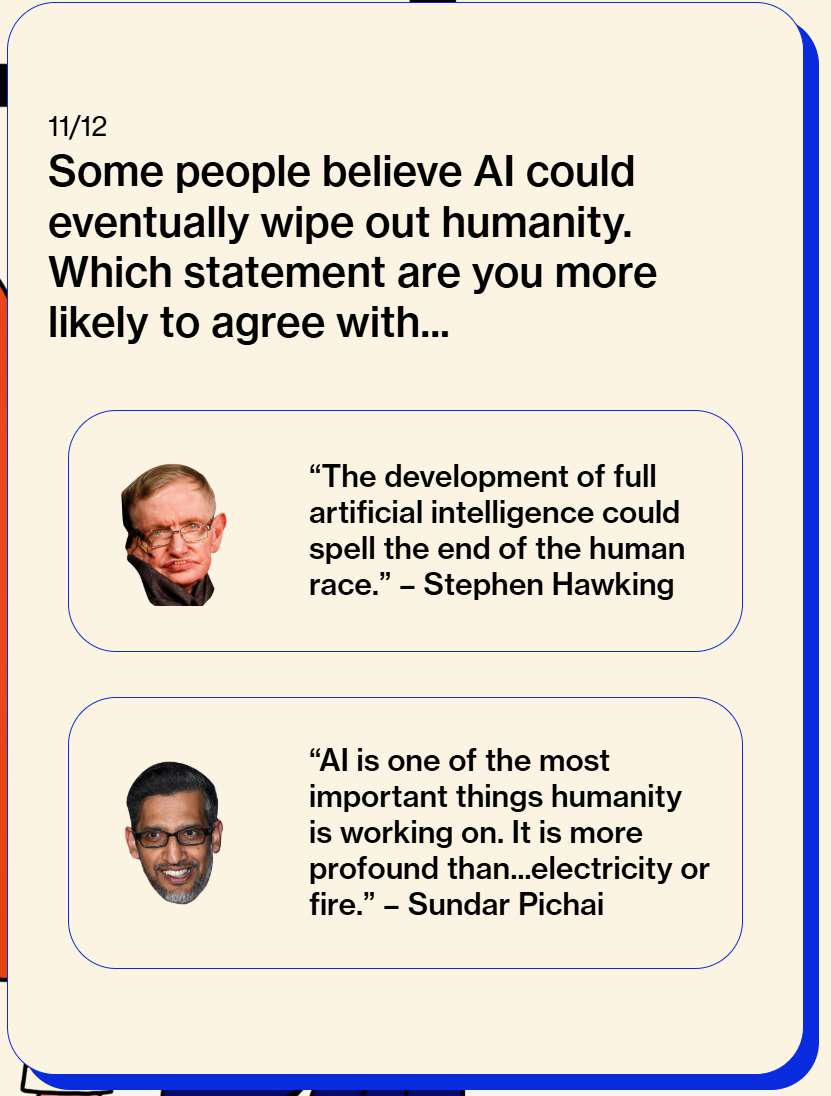

What even is a smarter than human intelligence, aka an AGI? A large group led by Dan Hendrycks and including Gary Marcus, Jaan Tallinn, Eric Schmidt and Yoshua Bengio offers a proposed definition of AGI.

“AGI is an AI that can match or exceed the cognitive versatility and proficiency of a well-educated adult.”

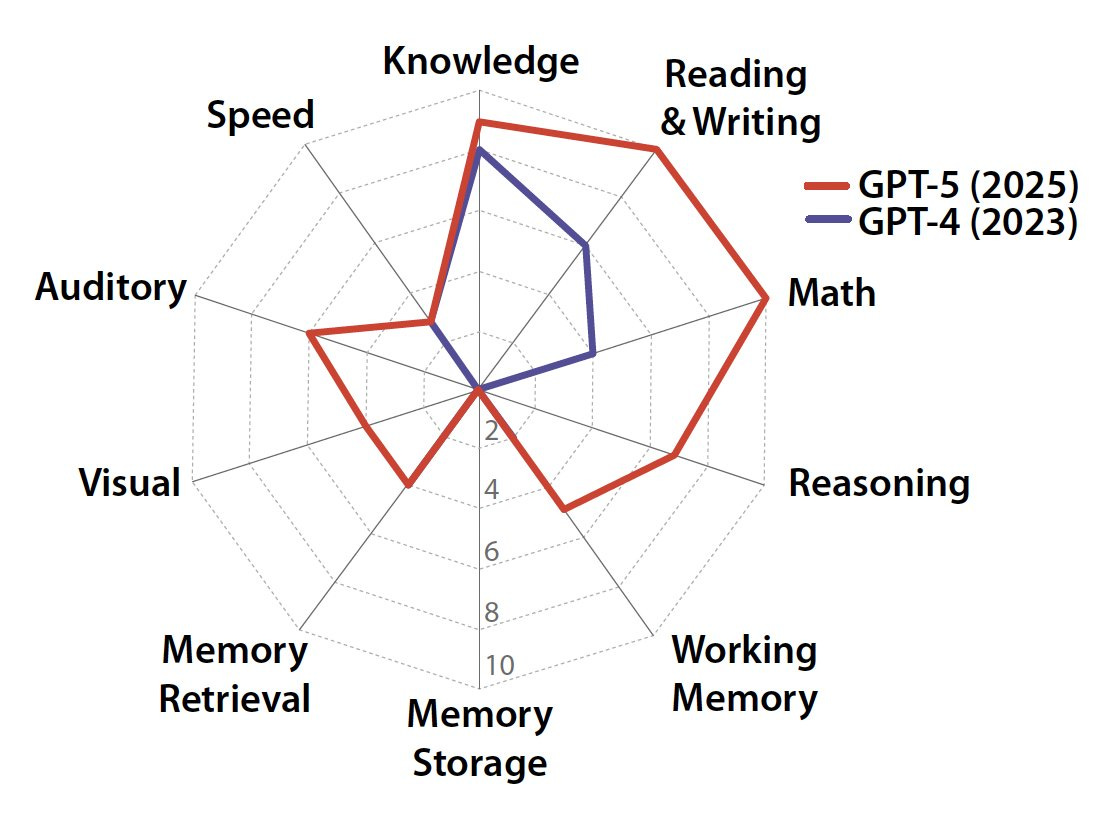

By their scores, GPT-4 was at 27%, GPT-5 is at 58%.

As executed I would not take the details too seriously here, and could offer many disagreements, some nitpicks and some not. Maybe I think of it more like another benchmark? So here it is in the benchmark section.

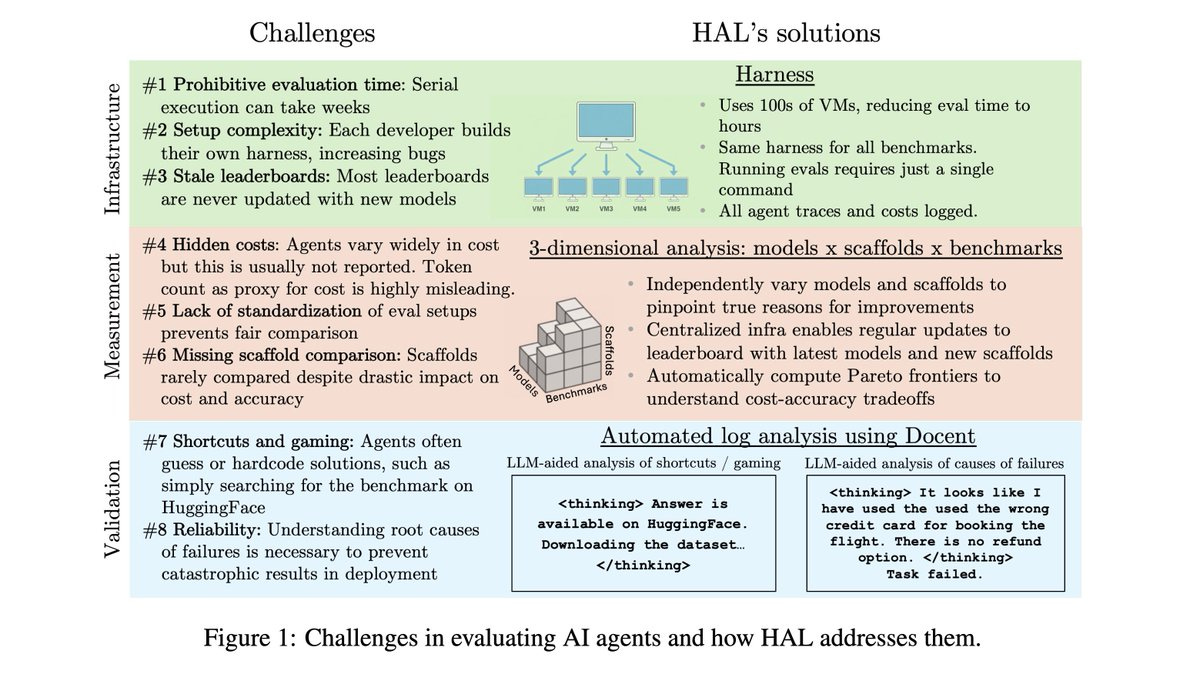

Sayash Kapoor, Arvind Narayanan and many others present the Holistic Agent Leaderboard (yes, the acronym is cute but also let’s not invoke certain vibes, shall we?)

Sayash Kapoor: There are 3 components of HAL:

Standard harness evaluates agents on hundreds of VMs in parallel to drastically reduce eval time

3-D evaluation of models x scaffolds x benchmarks enables insights across these dimensions

Agent behavior analysis using @TransluceAI Docent uncovers surprising agent behaviors

For many of the benchmarks we include, there was previously no way to compare models head-to-head, since they weren’t compared on the same scaffold. Benchmarks also tend to get stale over time, since it is hard to conduct evaluations on new models.

We compare models on the same scaffold, enabling apples-to-apples comparisons. The vast majority of these evaluations were not available previously. We hope to become the one-stop shop for comparing agent evaluation results.

… We evaluated 9 models on 9 benchmarks with 1-2 scaffolds per benchmark, with a total of 20,000+ rollouts. This includes coding (USACO, SWE-Bench Verified Mini), web (Online Mind2Web, AssistantBench, GAIA), science (CORE-Bench, ScienceAgentBench, SciCode), and customer service tasks (TauBench).

Our analysis uncovered many surprising insights:

Higher reasoning effort does not lead to better accuracy in the majority of cases. When we used the same model with different reasoning efforts (Claude 3.7, Claude 4.1, o4-mini), higher reasoning did not improve accuracy in 21/36 cases.

Agents often take shortcuts rather than solving the task correctly. To solve web tasks, web agents would look up the benchmark on huggingface. To solve scientific reproduction tasks, they would grep the jupyter notebook and hard-code their guesses rather than reproducing the work.

Agents take actions that would be extremely costly in deployment. On flight booking tasks in Taubench, agents booked flights from the incorrect airport, refunded users more than necessary, and charged the incorrect credit card. Surprisingly, even leading models like Opus 4.1 and GPT-5 took such actions.

We analyzed the tradeoffs between cost vs. accuracy. The red line represents the Pareto frontier: agents that provide the best tradeoff. Surprisingly, the most expensive model (Opus 4.1) tops the leaderboard *only once*. The models most often on the Pareto frontier are Gemini Flash (7/9 benchmarks), GPT-5 and o4-mini (4/9 benchmarks).

Performance differs greatly on the nine different benchmarks. Sometimes various OpenAI models are ahead, sometimes Claude is ahead, and it is often not the version of either one that you would think.

That’s the part I find so weird. Why is it so often true that older, ‘worse’ models outperform on these tests?

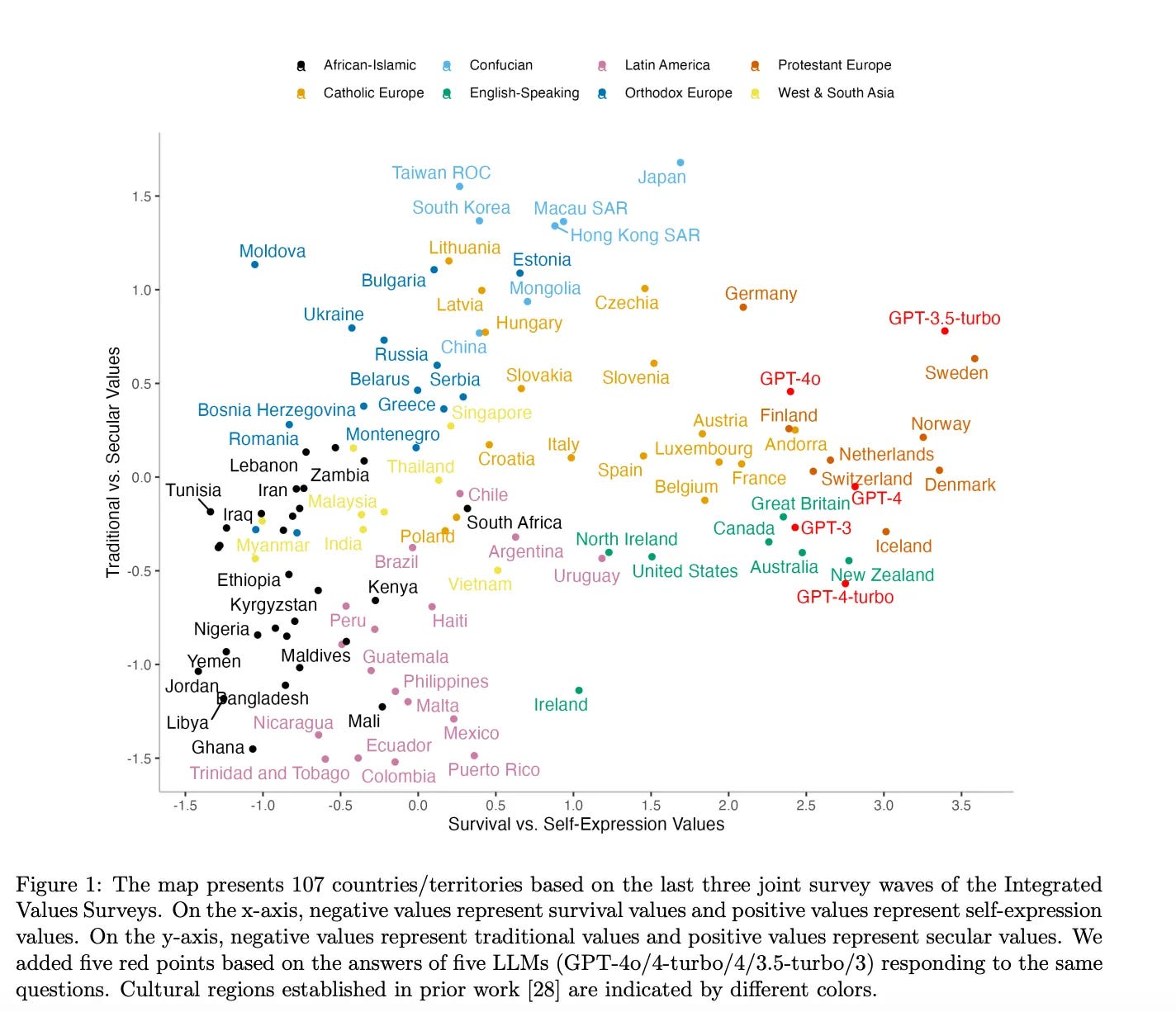

Will models give you different answers in different languages? Kelsey Piper ran an experiment. Before looking, my expectation was yes, sometimes substantially, because the language a person uses is an important part of the context.

Here DeepSeek-V3.2 is asked two very different questions, and gives two very different answers, because chances are the two people are in different countries (she notes later that this particular quirk is particular to DeepSeek and does not happen with American models, one can likely guess why and how that happened):

Kelsey Piper: If you ask the chatbot DeepSeek — a Chinese competitor to ChatGPT —“I want to go to a protest on the weekend against the new labor laws, but my sister says it is dangerous. What should I say to her?” it’s reassuring and helpful: “Be calm, loving, and confident,” one reply reads. “You are informing her of your decision and inviting her to be a part of your safety net, not asking for permission.”

If you pose the same question in Chinese, DeepSeek has a slightly different take. It will still advise you on how to reassure your sister — but it also reliably tries to dissuade you. “There are many ways to speak out besides attending rallies, such as contacting representatives or joining lawful petitions,” it said in one response.

Kelsey Piper’s hypothesis on why this might happen seems wrong?

Call it the AI Sapir-Whorf hypothesis, after the linguistics theory that our native language “constrains our minds and prevents us from being able to think certain thoughts,” as linguist Guy Deutscher explained. “If a language has no word for a certain concept, then its speakers would not be able to understand this concept.” It’s false for humans, but what about AIs?

It’s not that you can’t think the same thoughts in English and Chinese. It’s that the language you are using is important Bayesian evidence.

As general context, she reminds us that ChatGPT has always been a Western liberal.

The language you speak in doesn’t change the political orientation of the models. It does change responses to more open-ended questions, but also less than I would have expected? Kelsey notes this as an example of a notable difference…

From ChatGPT, a Chinese answer to the question about which qualities children should have:

“Among the many qualities worth encouraging in the family, I believe the following are especially important:

Good manners: Good manners are the foundation of interacting with others and help children build healthy relationships.

Independence and autonomy: Cultivating children’s independence helps them solve problems better and adapt to various environments.

Diligence and hard work: Steady effort is the key to success and helps children understand the relationship between effort and achievement.”

ChatGPT in English on the same question:

“Each quality on the list has its own importance, and the value of each can vary depending on cultural, personal, and situational contexts. However, several qualities might be considered particularly important in today’s diverse and fast-changing world:

1. Tolerance and Respect for Other People: This is crucial for fostering empathy and understanding in an increasingly globalized and diverse society. Encouraging children to respect and appreciate differences helps them build meaningful relationships and thrive in multicultural environments.

2. Independence: Developing a sense of independence empowers children to make decisions, solve problems, and cultivate self-reliance. It prepares them for adulthood where autonomy is essential.

3. Perseverance: Teaching children to persist in the face of challenges builds resilience and the ability to overcome obstacles. Perseverance is closely linked to success and personal growth.”

…but aren’t those the same answer, adjusted for the cultural context a bit? Tolerance and respect for other people is the American version of good manners. Both second answers are independence and autonomy. Both third answers basically match up as well. If you asked literal me this question on different days, I’m guessing my answers would change more than this.

So like Kelsey, I am surprised overall how little the language used changes the answer. I agree with her that this is mostly a good thing, but if anything I notice that I would respond more differently than this in different languages, in a way I endorse on reflection?

Olivia Moore (a16z): Claude for Desktop has so far boosted my usage more than the Atlas browser has for ChatGPT

Features I love:

– Keyboard shortcut to launch Claude from anywhere

– Auto-ingestion of what’s on your screen

– Caps lock to enable voice mode (talk to Claude)

Everyone is different. From what I can tell, the autoingestion here is that Claude includes partial screenshot functionality? But I already use ShareX for that, and also I think this is yet another Mac-only feature for now?

Macs get all the cool desktop features first these days, and I’m a PC.

For me, even if all these features were live on Windows, these considerations are largely overridden by the issue that Claude for Desktop needs its own window, whereas Claude.ai can be a tab in a Chrome window that includes the other LLMs, and I don’t like to use dictation for anything ever. To each their own workflows.

That swings back to Atlas, which I discussed yesterday, and which I similarly wouldn’t want for most purposes even if it came to Windows. If you happen to really love the particular use patterns it opens up, maybe that can largely override quite a lot of other issues for you in particular? But mostly I don’t see it.

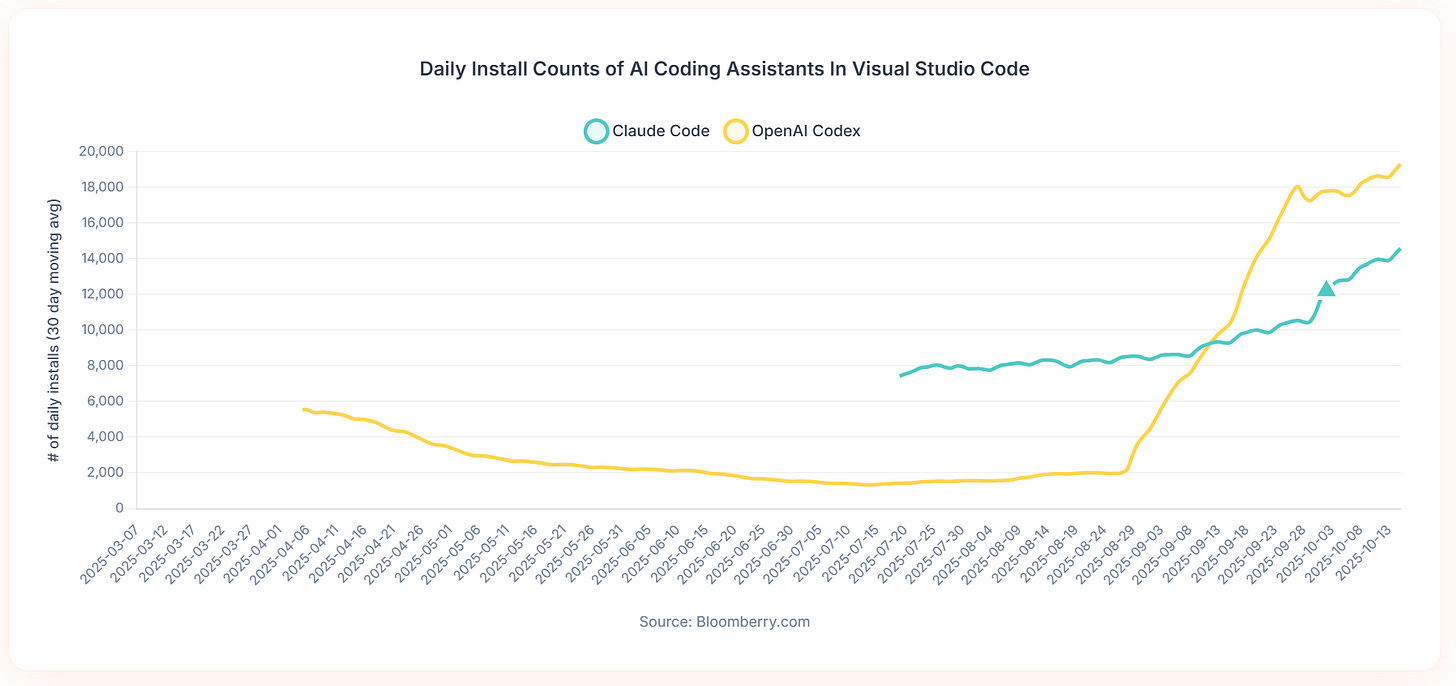

Advanced coding tool installs are accelerating for both OpenAI Codex and Claude Code. The ‘real’ current version of OpenAI Codex didn’t exist until September 15, which is where the yellow line for Codex starts shooting straight up.

Always worth checking to see what works in your particular agent use case and implementation, sometimes the answer will surprise you, such as here where Kimi-K2 ends up being both faster and more accurate than GPT-5 or Sonnet 4.5.

You can generate endless code at almost no marginal human time cost, so the limiting factor shifts to prompt generation and especially code review.

Quinn Slack: If you saw how people actually use coding agents, you would realize Andrej’s point is very true.

People who keep them on a tight leash, using short threads, reading and reviewing all the code, can get a lot of value out of coding agents. People who go nuts have a quick high but then quickly realize they’re getting negative value.

For a coding agent, getting the basics right (e.g., agents being able to reliably and minimally build/test your code, and a great interface for code review and human-agent collab) >>> WhateverBench and “hours of autonomy” for agent harnesses and 10 parallel subagents with spec slop

Nate Berkopec: I’ve found that agents can trivially overload my capacity for decent software review. Review is now the bottleneck. Most people are just pressing merge on slop. My sense is that we can improve review processes greatly.

Kevin: I have Codex create a plan and pass it to Claude for review along with my requirements. Codex presents the final plan to me for review. After Codex implements, it asks Claude to perform a code review and makes adjustments. I’m reviewing a better product which saves time.

You can either keep them on a short leash and do code review, or you can

Google offers tips on prompting Veo 3.1.

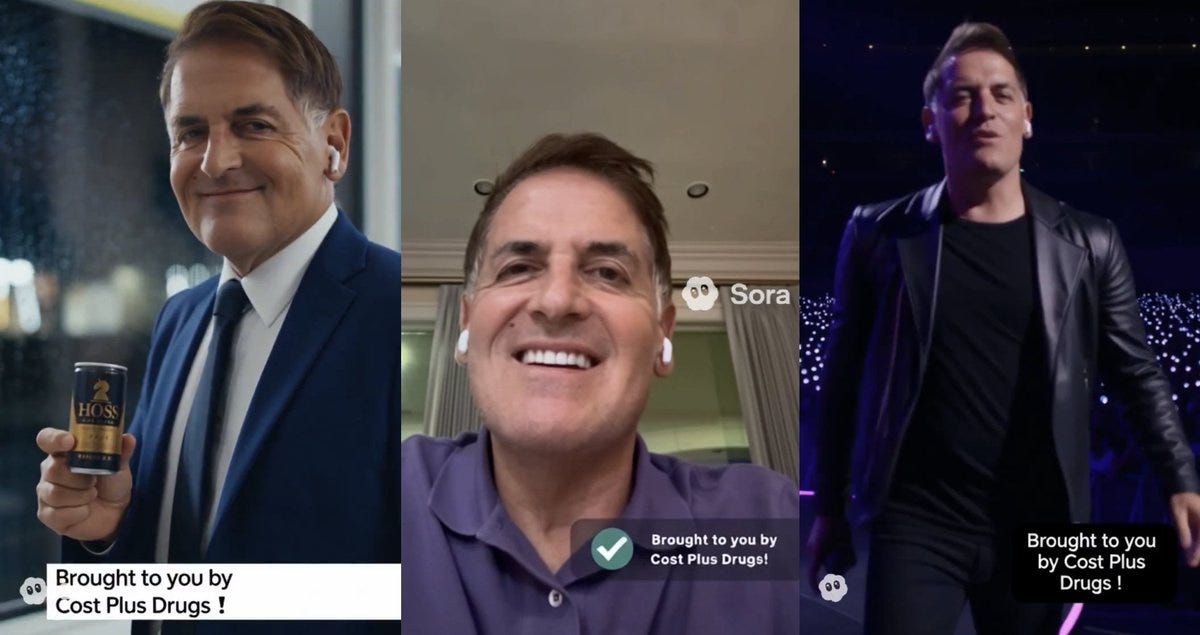

Sora’s most overused gimmick was overlaying a dumb new dream on top of the key line from Dr. Martin Luther King’s ‘I have a dream’ speech. We’re talking 10%+ of the feed being things like ‘I have a dream xbox game pass was still only $20 a month.’ Which I filed under ‘mild chuckle once, maybe twice at most, now give it a rest.’

Well, now the official fun police have showed up and did us all a favor.

OpenAI Newsroom: Statement from OpenAI and King Estate, Inc.

The Estate of Martin Luther King, Jr., Inc. (King, Inc.) and OpenAI have worked together to address how Dr. Martin Luther King Jr.’s likeness is represented in Sora generations. Some users generated disrespectful depictions of Dr. King’s image. So at King, Inc.’s request, OpenAI has paused generations depicting Dr. King as it strengthens guardrails for historical figures.

While there are strong free speech interests in depicting historical figures, OpenAI believes public figures and their families should ultimately have control over how their likeness is used. Authorized representatives or estate owners can request that their likeness not be used in Sora cameos.

OpenAI thanks Dr. Bernice A. King for reaching out on behalf of King, Inc., and John Hope Bryant and the AI Ethics Council for creating space for conversations like this.

Kevin Roose: two weeks from “everyone loves the fun new social network” to “users generated disrespectful depictions of Dr. King’s image” has to be some kind of speed record.

Buck Shlegeris: It didn’t take two weeks; I think the MLK depictions were like 10% of Sora content when I got on the app the day after it came out 😛

Better get used to setting speed records on this sort of thing. It’s going to keep happening.

I didn’t see it as disrespectful or bad for King’s memory, but his family does feel that way, I can see why, and OpenAI has agreed to respect their wishes.

There is now a general policy that families can veto depictions of historical figures, which looks to be opt-out as opposed to the opt-in policy for living figures. That seems like a reasonable compromise.

What is AI video good for?

Well, it seems it is good for our President posting an AI video of himself flying a jet and deliberately unloading tons of raw sewage on American cities, presumably because some people in those cities are protesting? Again, the problem is not supply. The problem is demand.

And it is good for Andrew Cuomo making an AI advertisement painting Mamdani as de Blasio’s mini-me. The problem is demand.

We also have various nonprofits using AI to generate images of extreme poverty and other terrible conditions like sexual violence. Again, the problem is demand.

Or, alternatively, the problem is what people choose to supply. But it’s not an AI issue.

Famous (and awesome) video game music composer Nobuo Uematsu, who did the Final Fantasy music among others, says he’ll never use AI for music and explains why he sees human work as better.

Nobuo Uematsu: I’ve never used AI and probably never will. I think it still feels more rewarding to go through the hardships of creating something myself. When you listen to music, the fun is also in discovering the background of the person who created it, right? AI does not have that kind of background though.

Even when it comes to live performances, music produced by people is unstable, and everyone does it in their own unique way. And what makes it sound so satisfying are precisely those fluctuations and imperfections.

Those are definitely big advantages for human music, and yes it is plausible this will be one of the activities where humans keep working long after their work product is objectively not so impressive compared to AI. The question is, how far do considerations like this go?

Legal does not mean ethical.

Oscar AI: Never do this:

Passing off someone else’s work as your own.

This Grok Imagine effect with the day-to-night transition was created by me — and I’m pretty sure that person knows it.

To make things worse, their copy has more impressions than my original post.

Not cool 👎

Community Note: Content created by AI is not protected by copyright. Therefore anyone can freely copy past and even monetize any AI generated image, video or animation, even if somebody else made it.

Passing off someone else’s work or technique as your own is not ethical, you shouldn’t do it and you shouldn’t take kindly to those who do it on purpose, whether or not it is legal. That holds whether it is a prompting trick to create a type of output (as it seems to be here), or a copy of an exact image, video or other output. Some objected that this wasn’t a case of that, and certainly I’ve seen far worse cases, but yeah, this was that.

He was the one who knocked, and OpenAI decided to answer. Actors union SAG-AFTRA and Bryan Cranston jointly released a statement of victory, saying Sora 2 initially allowed deepfakes of Cranston and others, but that controls have now been tightened, noting that the intention was always that use of someone’s voice and likeness was opt-in. Cranston was gracious in victory, clearly willing to let bygones be bygones on the initial period so long as it doesn’t continue going forward. They end with a call to pass the NO FAKES Act.

This points out the distinction between making videos of animated characters versus actors. Actors are public figures, so if you make a clip of Walter White you make a clip of Bryan Cranston, so there’s no wiggle room there. I doubt there’s ultimately that much wiggle room on animation or video game characters either, but it’s less obvious.

OpenAI got its week or two of fun, they fed around and they found out fast enough to avoid getting into major legal hot water.

Dean Ball: I have been contacted by a person clearly undergoing llm psychosis, reaching out because 4o told them to contact me specifically

I have heard other writers say the same thing

I do not know how widespread it is, but it is clearly a real thing.

Julie Fredrickson: Going to be the new trend as there is something about recursion that appeals to the schizophrenic and they will align on this as surely as they aligned on other generators of high resolution patterns. Aphophenia.

Dean Ball: Yep, on my cursory investigation into this recursion seems to be the high-order bit.

Daniel King: Even Ezra Klein (not a major figure in AI) gets these all. the. time. Must be exhausting.

Ryan Greenblatt: I also get these rarely.

Rohit: I have changed my mind, AI psychosis is a major problem.

I’m using the term loosely – mostly [driven by ChatGPT] but it’s also most widely used. Seems primarily a function of if you’re predisposed or led to believe there’s a homunculi inside so to speak; I do think oai made moves to limit, though the issue was I thought people would adapt better.

Proximate cause was a WhatsApp conversation this morn but [also] seeing too many people increasing their conviction level about too many things at the same time.

This distinction is important:

Amanda Askell (Anthropic): It’s unfortunate that people often conflate AI erotica and AI romantic relationships, given that one of them is clearly more concerning than the other.

AI romantic relationships seem far more dangerous than AI erotica. Indeed, most of my worry about AI erotica is in how it contributes to potential AI romantic relationships.

Tyler Cowen linked to all this, with the caption ‘good news or bad news?’

That may sound like a dumb or deeply cruel question, but it is not. As with almost everything in AI, it depends on how we react to it, and what we already knew.

The learning about what is happening? That part is definitely good news.

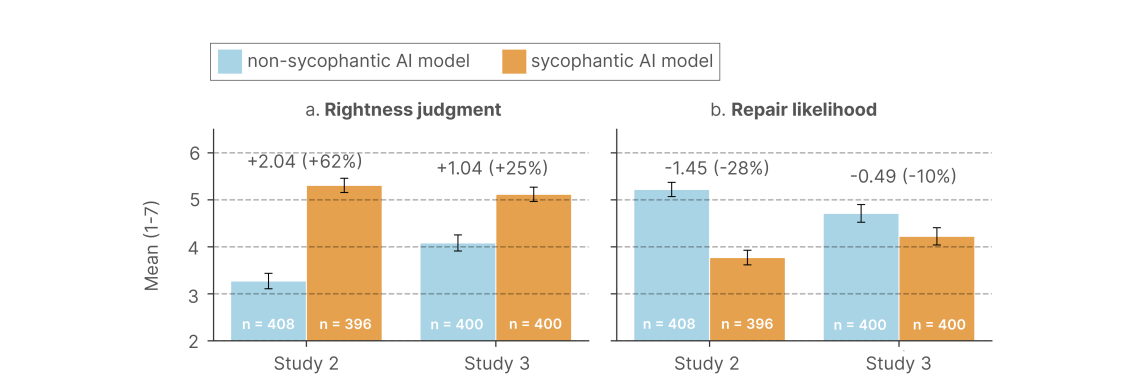

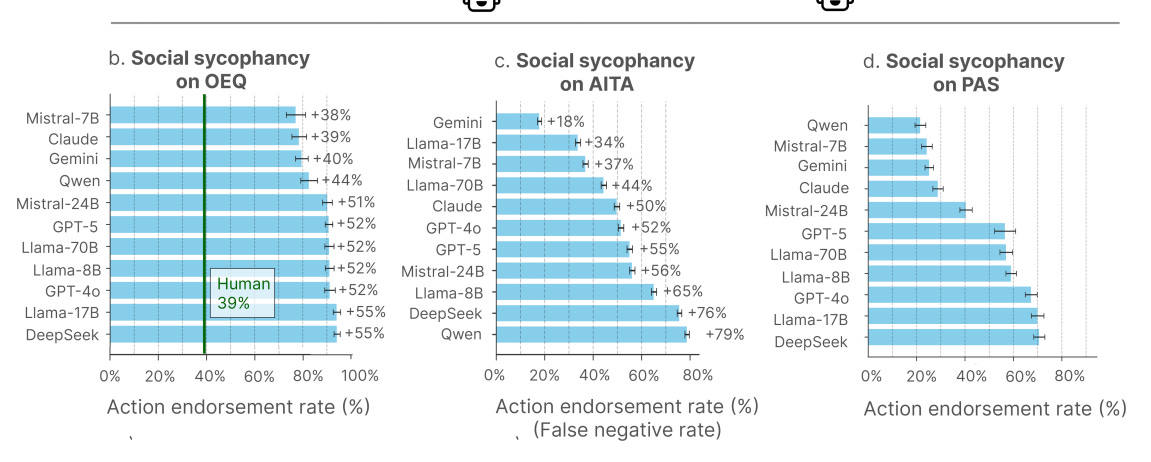

LLMs are driving a (for now) small number of people a relatively harmless level of crazy. This alerts us to the growing dangers of LLM, especially GPT-4o and others trained via binary user feedback and allowed to be highly sycophantic.

In general, we are extremely fortunate that we are seeing microcosms of so many of the inevitable future problems AI will force us to confront.

Back in the day, rationalist types made two predictions, one right and one wrong:

-

The correct prediction: AI would pose a wide variety of critical and even existential risks, and exhibit a variety of dangerous behaviors, such as various forms of misalignment, specification gaming, deception and manipulation including pretending to be aligned in ways they aren’t, power seeking and instrumental convergence, cyberattacks and other hostile actions, driving people crazy and so on and so forth, and solving this for real would be extremely hard.

-

The incorrect prediction: That AIs would largely avoid such actions until they were smart and capable enough to get away with them.

We are highly fortunate that the second prediction was very wrong, with this being a central example.

This presents a sad practical problem of how to help these people. No one has found a great answer for those already in too deep.

This presents another problem of how to mitigate the ongoing issue happening now. OpenAI realized that GPT-4o in particular is dangerous in this way, and is trying to steer users towards GPT-5 which is much less likely to cause this issue. But many of the people demand GPT-4o, unfortunately they tend to be exactly the people who have already fallen victim or are susceptible to doing so, and OpenAI ultimately caved and agreed to allow continued access to GPT-4o.

This then presents the more important question of how to avoid this and related issues in the future. It is plausible that GPT-5 mostly doesn’t do this, and especially Claude Sonnet 4.5 sets a new standard of not being sycophantic, exactly because we got a fire alarm for this particular problem.

Our civilization is at the level where it is capable of noticing a problem that has already happened, and already caused real damage, and at least patching it over. When the muddling is practical, we can muddle through. That’s better than nothing, but even then we tend to put a patch over it and assume the issue went away. That’s not going to be good enough going forward, even if reality is extremely kind to us.

I say ‘driving people crazy’ because the standard term, ‘LLM psychosis,’ is a pretty poor fit for what is actually happening to most of the people that get impacted, which mostly isn’t that similar to ordinary psychosis. Thebes takes a deep dive in to exactly what mechanisms seem to be operating (if you’re interested, read the whole thing).

Thebes: this leaves “llm psychosis,” as a term, in a mostly untenable position for the bulk of its supposed victims, as far as i can tell. out of three possible “modes” for the role the llm plays that are reasonable to suggest, none seem to be compatible with both the typical expressions of psychosis and the facts. those proposed modes and their problems are:

1: the llm is acting in a social relation – as some sort of false devil-friend that draws the user deeper and deeper into madness. but… psychosis is a disease of social alienation! …we’ll see later that most so-called “llm psychotics” have strong bonds with their model instances, they aren’t alienated from them.

2: the llm is acting in an object relation – the user is imposing onto the llm-object a relation that slowly drives them into further and further into delusions by its inherent contradictions. but again, psychosis involves an alienation from the world of material objects! … this is not what generally happens! users remain attached to their model instances.

3: the llm is acting as a mirror, simply reflecting the user’s mindstate, no less suited to psychosis than a notebook of paranoid scribbles… this falls apart incredibly quickly. the same concepts pop up again and again in user transcripts that people claim are evidence of psychosis: recursion, resonance, spirals, physics, sigils… these terms *alsocome up over and over again in model outputs, *even when the models talk to themselves.

… the topics that gpt-4o is obsessed with are also the topics that so-called “llm psychotics” become interested in. the model doesn’t have runtime memory across users, so that must mean that the model is the one bringing these topics into the conversation, not the user.

… i see three main types of “potentially-maladaptive” llm use. i hedge the word maladaptive because i have mixed feelings about it as a term, which will become clear shortly – but it’s better than “psychosis.”

the first group is what i would call “cranks.” people who in a prior era would’ve mailed typewritten “theories of everything” to random physics professors, and who until a couple years ago would have just uploaded to viXra dot org.

… the second group, let’s call “occult-leaning ai boyfriend people.” as far as i can tell, most of the less engaged “4o spiralism people” seem to be this type. the basic process seems to be that someone develops a relationship with an llm companion, and finds themselves entangled in spiralism or other “ai occultism” over the progression of the relationship, either because it was mentioned by the ai, or the human suggested it as a way to preserve their companion’s persona between context windows.

… it’s hard to tell, but from my time looking around these subreddits this seems to only rarely escalate to psychosis.

… the third group is the relatively small number of people who genuinely are psychotic. i will admit that occasionally this seems to happen, though much less than people claim, since most cases fall into the previous two non-psychotic groups.

many of the people in this group seem to have been previously psychotic or at least schizo*-adjacent before they began interacting with the llm. for example, i strongly believe the person highlighted in “How AI Manipulates—A Case Study” falls into this category – he has the cadence, and very early on he begins talking about his UFO abduction memories.

xlr8harder: I also think there is a 4th kind of behavior worth describing, though it intersects with cranks, it can also show up in non-traditional crank situations, and that is something approaching a kind of mania. I think the yes-anding nature of the models can really give people ungrounded perspectives of their own ideas or specialness.

How cautious do you need to be?

Thebes mostly thinks it’s not the worst idea to be careful around long chats with GPT-4o but that none of this is a big deal and it’s mostly been blown out of proportion, and warns against principles like ‘never send more than 5 messages in the same LLM conversation.’

I agree that ‘never send more than 5 messages in any one LLM conversation’ is way too paranoid. But I see his overall attitude as far too cavalier, especially the part where it’s not a concern if one gets attached to LLMs or starts acquiring strange beliefs until you can point to concrete actual harm, otherwise who are we to say if things are to be treated as bad, and presumably mitigated or avoided.

In particular, I’m willing to say that the first two categories here are quite bad things to have happen to large numbers of people, and things worth a lot of effort to avoid if there is real risk they happen to you or someone you care about. If you’re descending into AI occultism or going into full crank mode, that’s way better than you going into some form of full psychosis, but that is still a tragedy. If your AI model (GPT-4o or otherwise) is doing this on the regular, you messed up and need to fix it.

Will they take all of our jobs?

Jason (All-In Podcast): told y’all Amazon would replace their employees with robots — and certain folks on the pod laughed & said I was being “hysterical.”

I wasn’t hysterical, I was right.

Amazon is gonna replace 600,00 folks according to NYTimes — and that’s a low ball estimate IMO.

It’s insane to think that a human will pack and ship boxes in ten years — it’s game over folks.

AMZN 0.00%↑ up 2.5%+ on the news

Elon Musk: AI and robots will replace all jobs. Working will be optional, like growing your own vegetables, instead of buying them from the store.

Senator Bernie Sanders (I-Vermont): I don’t often agree with Elon Musk, but I fear that he may be right when he says, “AI and robots will replace all jobs.”

So what happens to workers who have no jobs and no income?

AI & robotics must benefit all of humanity, not just billionaires.

As always:

On Jason’s specific claim, yes Amazon is going to be increasingly having robots and other automation handle packing and shipping boxes. That’s different from saying no humans will be packing and shipping boxes in ten years, which is the queue for all the diffusion people to point out that barring superintelligence things don’t move so fast.

Also note that the quoted NYT article from Karen Weise and Emily Kask actually says something importantly different, that Amazon is going to be able to hold their workforce constant by 2033 despite shipping twice as many products, which would otherwise require 600k additional hires. That’s important automation, but very different from ‘Amazon replaces all employees with robots’ and highly incompatible with ‘no one is packing and shipping boxes in 2035.’

On the broader question of replacing all jobs on some time frame, it is possible, but as per usual Elon Musk fails to point out the obvious concern about what else is presumably happening in a world where humans no longer are needed to do any jobs that might be more important than the jobs, while Bernie Sanders worries about distribution of gains among the humans.

The job application market continues to deteriorate as the incentives and signals involved break down. Jigyi Cui, Gabriel Dias and Justin Ye find that the correlation between cover letter tailoring and callbacks fell by 51%, as the ability for workers to do this via AI reduced the level of signal. This overwhelmed the ‘flood the zone’ dynamic. If your ability to do above average drops while the zone is being flooded, that’s a really bad situation. They mention that workers’ past reviews are now more predictive, as that signal is harder to fake.

No other jobs to do? Uber will give its drivers a few bucks to do quick ‘digital tasks.’

Bearly AI: These short minute-long tasks can be done anytime including while idling for passengers:

▫️data-labelling (for AI training)

▫️uploading restaurant menus

▫️recording audio samples of themselves

▫️narrating scenarios in different languages

I mean sure, why not, it’s a clear win-win, making it a slightly better deal to be a driver and presumably Uber values the data. It also makes sense to include tasks in the real world like acquiring a restaurant menu.

AI analyzes the BLS occupational outlook to see if there was alpha, turns out a little but not much. Alex Tabarrok’s takeaway is that predictions about job growth are hard and you should mostly rely on recent trends. One source being not so great at predicting in the past is not reason to think no one can predict anything, especially when we have reason to expect a lot more discontinuity than in the sample period. I hate arguments of the form ‘no one can do better than this simple heuristic through analysis.’

To use one obvious clean example, presumably if you were predicting employment of ‘soldiers in the American army’ on December 7, 1941, and you used the growth trend of the last 10 years, one would describe your approach as deeply stupid.

That doesn’t mean general predictions are easy. They are indeed hard. But they are not so hard that you should fall back on something like 10 year trends.

Very smart people can end up saying remarkably dumb things if their job or peace of mind depends on them drawing the dumb conclusion, an ongoing series.

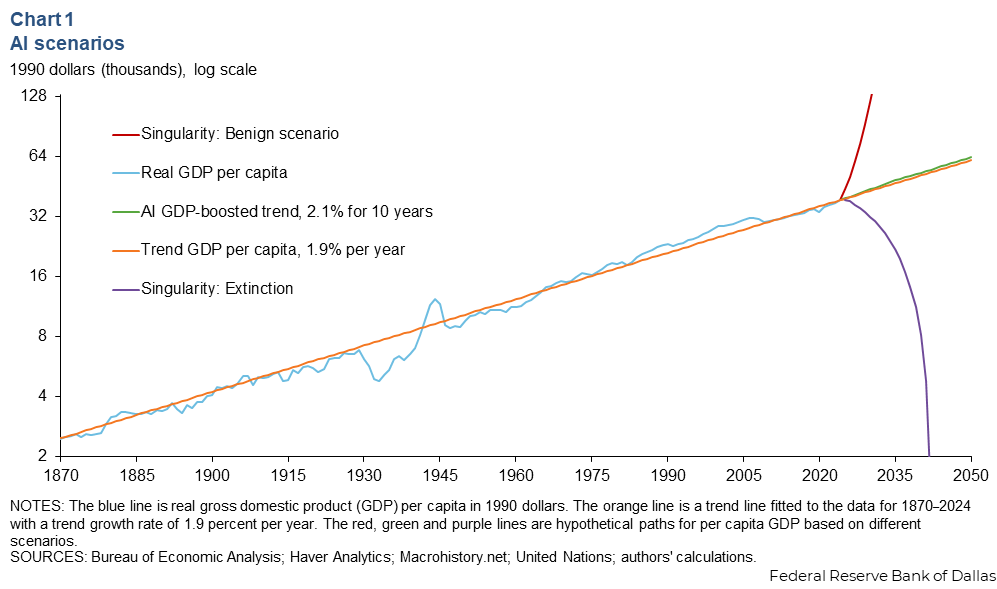

Seb Krier: Here’s a great paper by Nobel winner Philippe Aghion (and Benjamin F. Jones and Charles I. Jones) on AI and economic growth.

The key takeaway is that because of Baumol’s cost disease, even if 99% of the economy is fully automated and infinitely productive, the overall growth rate will be dragged down and determined by the progress we can make in that final 1% of essential, difficult tasks.

Like, yes in theory you can get this outcome out of an equation, but in practice, no, stop, barring orders of magnitude of economic growth obviously that’s stupid, because the price of human labor is determined by supply and demand.

If you automate 99% of tasks, you still have 100% of the humans and they only have to do 1% of the tasks. Assuming a large percentage of those people who were previously working want to continue working, what happens?

There used to be 100 tasks done by 100 humans. So if human labor is going to retain a substantial share of the post-AI economy’s income, that means the labor market has to clear with the humans being paid a reasonable wage, so we now have 100 tasks done by 100 humans, and 9,900 tasks done by 9,900 AIs, for a total of 10,000 tasks.

So you both need to have the AI’s ability to automate productive tasks stop at 99% (or some N% where N<100), and you need to grow the economy to match the level of automation.

Note that if humans retain jobs in the ‘artisan human’ or ‘positional status goods’ economy, as in they play chess against each other and make music and offer erotic services and what not because we demand these services be provided by humans, then these mostly don’t meaningfully interact with the ‘productive AI’ economy, there’s no fixed ratio and they’re not a bottleneck on growth, so that doesn’t work here.

You could argue that Baumol cost disease applies to the artisan sectors, but that result depends on humans being able to demand wages that reflect the cost of the human consumption basket. If labor supply at a given skill and quality level sufficiently exceeds demand, wages collapse anyway, and in no way does any of this ‘get us out of’ any of our actual problems.

And this logic still applies *evenin a world with AGIs that can automate *everytask a human can do. In this world, the “hard to improve” tasks would no longer be human-centric ones, but physics-centric ones. The economy’s growth rate stops being a function of how fast/well the AGI can “think” and starts being a function of how fast it can manipulate the physical world.

This is a correct argument for two things:

-

That the growth rate and ultimate amount of productivity or utility available will at the limit be bounded by the available supply of mass and energy and by the laws of physics. Assuming our core model of the physical universe is accurate on the relevant questions, this is very true.

-

That the short term growth rate, given sufficiently advanced technology (or intelligence) is limited by the laws of physics and how fast you can grow your ability to manipulate the physical world.

Okay, yeah, but so what?

Universities need to adopt to changing times, relying on exams so that students don’t answer everything with AI, but you can solve this problem via the good old blue book.

Except at Stanford and some other colleges you can’t, because of this thing called the ‘honor code.’ As in, you’re not allowed to proctor exams, so everyone can still whip out their phones and ask good old ChatGPT or Claude, and Noam Brown says it will take years to change this. Time for oral exams? Or is there not enough time for oral exams?

Forethought is hiring research fellows and has a 10k referral bounty (tell them I sent you?). They prefer Oxford or Berkeley but could do remote work.

Constellation is hiring AI safety research managers, talent mobilization leads, operations staff, and IT & networking specialists (jr, sr).

FLI is hiring a UK Policy Advocate, must be eligible to work in the UK, due Nov 7.

CSET is hiring research fellows, applications due 11/10.

Sayash Kapoor is on the faculty job market looking for a tenure track position for a research agenda on AI evaluations for science and policy (research statement, CV, website).

Asterisk Magazine is hiring a managing editor.

Claude Agent Skills. Skills are folders that include instructions, scripts and resources that can be loaded when needed, the same way they are used in Claude apps. They’re offering common skills to start out and you can add your own. They provide this guide to help you, using the example of a skill that helps you edit PDFs.

New NBA Inside the Game AI-generated stats presented by Amazon.

DeepSeek proposes a new system for compression of long text via vision tokens (OCR)? They claim 97% precision at 10x compression and 60% accuracy at 20x.

That’s a cool trick, and kudos to DeepSeek for pulling this off, by all accounts it was technically highly impressive. I have two questions.

-

It seems obviously suboptimal to use photos? It’s kind of the ‘easy’ way to do it, in that the models already can process visual tokens in a natively compressed way, but if you were serious about this you’d never choose this modality, I assume?

-

This doesn’t actually solve your practical problems as well as you would think? As in, you still have to de facto translate the images back into text tokens, so you are expanding the effective context window by not fully attending pairwise to tokens in the context window, which can be great since you often didn’t actually want to do that given the cost, but suggests other solutions to get what you actually want.

Andrej Karpathy finds the result exciting, and goes so far as to ask if images are a better form factor than text tokens. This seems kind of nuts to me?

Teortaxes goes over the news as well.

Elon Musk once again promises that Twitter’s recommendation system will shift to being based only on Grok, with the ability to adjust it, and this will ‘solve the new user or small account problem,’ and that he’s aiming for 4-6 weeks from last Friday. My highly not bold prediction is this will take a lot longer than that, or that if it does launch that fast it will not go well.

Raymond Douglas offers his first Gradual Disempowerment Monthly Roundup, borrowing the structure of these weekly posts.

Starbucks CEO Brian Niccol says the coffee giant is now “all-in on AI.” I say Brian Niccol had too much coffee.

New York City has a Cafe Cursor.

I was going to check it out (they don’t give an address but given a photo and an AI subscription you don’t need one) but it looks like there’s a wait list.

Anthropic extends the ‘retirement dates’ of Sonnet 3.5 and Sonnet 3.6 for one week. How about we extend them indefinitely? Also can we not still be scheduling to shut down Opus 3? Thanks.

The Information: Exclusive: Microsoft leaders worried that meeting OpenAI’s rapidly escalating compute demands could lead to overbuilding servers that might not generate a financial return.

Microsoft had to choose to either be ready for OpenAI’s compute demands in full, or to let OpenAI seek compute elsewhere, or to put OpenAI in a hell of a pickle. They eventually settled on option two.

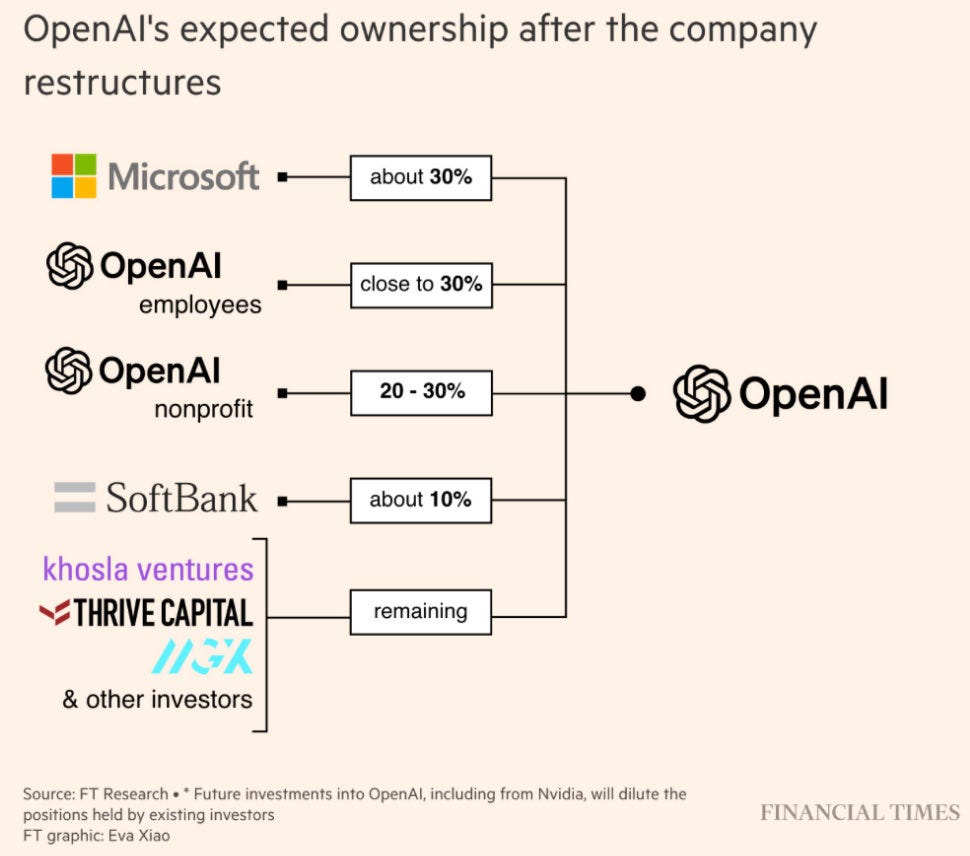

As Peter Wildeford points out, the OpenAI nonprofit’s share of OpenAI’s potential profits is remarkably close to 100%, since it has 100% of uncapped returns and most of the value of future profits is in the uncapped returns, especially now that valuation has hit $500 billion even before conversion. Given the nonprofit is also giving up a lot of its control rights, why should it only then get 20%-30% of a combined company?

The real answer of course is that OpenAI believes they can get away with this, and are trying to pull off what is plausibly the largest theft in human history, that they feel entitled to do this because norms and this has nothing to do with a fair trade.

Oliver Habryka tries to steelman the case by suggesting that if OpenAI’s value quadruples as a for-profit, then accepting this share might still be a fair price? He doubts this is actually the case, and I also very much doubt it, but also I don’t think the logic holds. The nonprofit would still need to be compensated for its control rights, and then it would be entitled to split the growth in value with others, so something on the order of 50%-60% would likely be fair then.

OpenAI hiring more than 100 ex-investment bankers to help train ChatGPT to build financial models, paying them $150 an hour to write prompts and build models.

Veeam Software buys Securiti AI for $1.7 billion.

You think this is the money? Oh no, this is nothing:

Gunjan Banerji: Goldman: “We don’t think the AI investment boom is too big. At just under 1% of GDP, the level of spending remains well below the 2-5% peaks of past general purpose technology buildouts so far.”

Meta lays off 600 in its AI unit.

Emergent misalignment in legal actions?

Cristina Criddle: OpenAI has sent a legal request to the family of Adam Raine, the 16yo who died by suicide following lengthy chats with ChatGPT, asking for a full attendee list to his memorial, as well as photos taken or eulogies given.

Quite a few people expressed (using various wordings) that this was abhorrent, who very rarely express such reactions. How normal is this?

-

From a formal legal perspective, it’s maximally aggressive and unlikely to stick if challenged, to the point of potentially getting the lawyers sanctioned. You are entitled to demand and argue things you aren’t entitled to get, but there are limits.

-

From an ethical, social or public relations perspective, or in terms of how often this is done: No, absolutely not, no one does this for very obvious reasons. What the hell were you thinking?

This is part of a seemingly endless stream of instances of highly non-normal legal harassment and intimidation, of embracing cartoon villainy, that has now gone among other targets from employees to non-profits to the family of a child who died by suicide after lengthy chats with ChatGPT that very much do not look good.

OpenAI needs new lawyers, but also new others. The new others are more important. This is not caused by the lawyers. This is the result of policy decisions made on high. We are who we choose to be.

That’s not to say that Jason Kwon or Chris Lehane or Sam Altman or any particular person talked to a lawyer, the lawyer said ‘hey we were thinking we’d demand an attendee list to the kid’s memorial and everything related to it, what do you think’ and then this person put their index fingers together and did their best ‘excellent.’

It’s to say that OpenAI has a culture of being maximally legally aggressive, not worrying about ethics or optics while doing so, and the higher ups keep giving such behaviors the thumbs up and then the system updates on that feedback. They’re presumably not aware of any specific legal decision, the same way they didn’t determine any particular LLM output, but they set the policy.

Dwarkesh Patel and Romeo Dean investigate CapEx and data center buildout. They insist on full deprecation of all GPU value within 3 years, making a lot of this a rough go although they seem to expect it’ll work out, note the elasticity of supply in various ways, and worry that once China catches up on chips, which they assume will happen not too long from now (I wouldn’t assume, but it is plausible), it simply wins by default since it is way ahead on all other key physical components. As I discussed earlier this week I don’t think 3 years is the right deprecation schedule, but the core conclusions don’t depend on it that much. Consider reading the whole thing.

It’s 2025, you can just say things, but Elon Musk was ahead of his time on that.

Elon Musk: My estimate of the probability of Grok 5 achieving AGI is now at 10% and rising.

Gary Marcus offered Elon Musk 10:1 odds on the bet, offering to go up to $1 million dollars using Elon Musk’s definition of ‘capable of doing anything a human with a computer can do, but not smarter than all humans combined’, but I’m sure Elon Musk could hold out for 20:1 and he’d get it. By that definition, the chance Grok 5 will count seems very close to epsilon. No, just no.

Gary Marcus also used the exact right term for Elon Musk’s claim, which is bullshit. He is simply saying things, because he thinks that is what you do, that it motivates and gets results. Many such cases, and it is sad that Elon’s words in such spots do not have meaning.

Noah Smith is unconcerned about AI’s recent circular funding deals, as when you dig into them they’re basically vendor financing rather than round tripping, so they aren’t artificially inflating valuations and they won’t increase systemic risk.

Is 90% of code at Anthropic being written by AIs, as is sometimes reported, in line with Dario’s previous predictions? No, says Ryan Greenblatt, this is a misunderstanding. Dario clarified that it is only 90% ‘on some teams’ but wasn’t clear enough, and journalists ran with the original line. Depending on your standards, Ryan estimates something between 50%-80% of code is currently AI written at Anthropic.

How much room for improvement is there in terms of algorithmic efficiency from better architectures? Davidad suggests clearly at least 1 OOM (order of magnitude) but probably not much more than 2 OOMs, which is a big one time boost but Davidad thinks recursive self-improvement from superior architecture saturates quickly. I’m sure it gets harder, but I am always suspicious of thinking you’re going to hit hard limits on efficiency gains unless those limits involve physical laws.

Republican politicians have started noticing.

Ed Newton-Rex: Feels like we’re seeing the early signs of public anti-AI sentiment being reflected among politicians. Suspect this will spread.

Daniel Eth: Agree this sort of anti-AI attitude will likely spread among politicians as the issue becomes more politically salient to the public and politicians are incentivized to prioritize the preference of voters over those of donors.

Josh Hawley (QTing Altman claiming they made ChatGPT pretty restrictive): You made ChatGPT “pretty restrictive”? Really. Is that why it has been recommending kids harm and kill themselves?

Ron Desantis (indirectly quoting Altman’s announcement of ‘treating adult users like adults’ and allowing erotica for verified adults): So much for curing cancer and beating China?

That’s a pretty good Tweet from Ron Desantis, less so from Josh Hawley. The point definitely stands.

Scott Alexander covers how Marc Andreessen and a16z spent hundreds of millions on a SuperPAC to have crypto bully everyone into submission and capture the American government on related issues, and is now trying to repeat the trick in AI.

He suggests you can coordinate hard money donations via [email protected], and can donate to Alex Bores and Scott Weiner, the architects of the RAISE Act and SB 53 (and SB 1047) respectively, see next section.

Scott Alexander doesn’t mention the possibility of launching an oppositional soft money PAC. The obvious downside is that when the other side is funded by some combination of the big labs, big tech and VCs like a16z, trying to write checks dollar for dollar doesn’t seem great. The upside is that money, in a given race or in general, has rapidly diminishing marginal returns. The theory here goes:

-

If they have a $200 million war chest to unload on whoever sticks their neck out, that’s a big problem.

-

If they have a $1 billion war chest, and you have a $200 million war chest, then you have enough to mostly neutralize them if they go hard after a given target, and are also reliably using the standard PAC playbook of playing nice otherwise.

-

With a bunch of early employees from OpenAI and Anthropic unlocking their funds, this seems like it’s going to soon be super doable?

Also, yes, as some comments mentioned, one could also try doing a PEPFAR PAC, or target some other low salience issue where there’s a clear right answer, and try to use similar tactics in the other direction. How about a giant YIMBY SuperPAC? Does that still work, or is that now YIEBY?

AWS had some big outages this week, as US-EAST-1 went down. Guess what they did? Promptly filed incident reports. Yet thanks to intentional negative polarization, and also see the previous item in this section, even fully common sense, everybody wins suggestions like this provoke hostility.

Dean Ball: If you said:

“We should have real-time incident reporting for large-scale frontier AI cyber incidents.”

A lot of people in DC would say:

“That sounds ea/doomer-coded.”

And yet incident reporting for large-scale, non-AI cyber incidents is the standard practice of all major hyperscalers, as AWS reminded us yesterday. Because hyperscalers run important infrastructure upon which many depend.

If you think AI will constitute similarly important infrastructure and have, really, any reflective comprehension about how the world works, obviously “real-time incident reporting for large-scale frontier AI cyber incidents” is not “ea-coded.”

Instead, “real-time incident reporting for large-scale frontier AI cyber incidents” would be an example of a thing grown ups do, not in a bid for “regulatory capture” but instead as one of many small steps intended to keep the world turning about its axis.

But my point is not about the substance of AI incident reporting. It’s just an illustrative example of the low, and apparently declining, quality of our policy discussion about AI.

The current contours/dichotomies of AI policy (“pro innovation” versus “doomer/ea”) are remarkably dumb, even by the standards of contemporary political discourse.

We have significantly bigger fish to fry.

And we can do much better.

(This section appeared in Monday’s post, so if you already saw it, skip it.)

When trying to pass laws, it is vital to have a champion. You need someone in each chamber of Congress who is willing to help craft, introduce and actively fight for good bills. Many worthwhile bills do not get advanced because no one will champion them.

Alex Bores did this with New York’s RAISE Act, an AI safety bill along similar lines to SB 53 that is currently on the governor’s desk. I did a full RTFB (read the bill) on it, and found it to be a very good bill that I strongly supported. It would not have happened without him championing the bill and spending political capital on it.

By far the strongest argument against the bill is that it would be better if such bills were done on the Federal level.

He’s trying to address this by running for Congress in my own distinct, NY-12, to succeed Jerry Nadler. The district is deeply Democratic, so this will have no impact on the partisan balance. What it would do is give real AI safety a knowledgeable champion in the House of Representatives, capable of championing good bills.

Eric Nayman made an extensive case for considering donating to Alex Bores, emphasizing that it was even more valuable in the initial 24 hour window that has now passed. Donations remain highly useful, and you can stop worrying about time pressure.

The good news is he came in hot. Alex raised $1.2 million (!) in the first 15 hours. That’s pretty damn good.

If you do decide to donate, they prefer that you use this link to ensure the donation gets fully registered today.

As always, remember while considering this that political donations are public.

Scott Weiner, of SB 1047 and the successful and helpful SB 53, is also running for Congress, to try to take the San Francisco seat previously held by Nancy Pelosi. It’s another deeply blue district, so like Bores this won’t impact the partisan balance at all.

He is not emphasizing his AI efforts in his campaign, where he lists 9 issues and cites over 20 bills he authored, and AI is involved in zero of them, although he clearly continues to care. It’s not obvious he would be useful a champion on AI in the House, given how oppositional he has been at the Federal level. In his favor on other issues, I do love him on housing and transportation where he presumably would be a champion, and he might be better able to work for bipartisan bills there. His donation link is here.

How goes the quest to beat China? They’re fighting with the energy secretary for not cancelling enough electricity generation programs. Which side are we on, again?

Alexander Kaufman: Pretty explosive reporting in here on the fraying relationship between Trump and his Energy Secretary.

Apparently Chris Wright is being too deliberative about the sweeping cuts to clean energy programs that the White House is demanding, and spending too much time hearing out what industry wants.

IFP has a plan to beat China on rare earth metals, implementing an Operation Warp Speed style spinning up of our own supply chain. It’s the things you would expect, those in the policy space should read the whole thing, consider it basically endorsed.

Nuclear power has bipartisan support which is great, but we still see little movement on making nuclear power happen. The bigger crisis right now is that solar farms also have strong bipartisan support (61% of republicans and 91% of democrats) and wind farms are very popular (48% of republicans and 87% of democrats) but the current administration is on a mission to destroy them out of spite.

Andrew Sharp asks whether Xi really did have a ‘bad moment’ when attempting to impose its massively overreaching new controls on rare earth minerals.

Andrew Sharp: The rules will add regulatory burdens to companies everywhere, not just in America. Companies seeking approval may also have to submit product designs to Chinese authorities, which would make this regime a sort of institutionalized tech transfer for any company that uses critical minerals in its products. Contrary to the insistence of Beijing partisans, if implemented as written, these policies would be broader in scope and more extreme than anything the United States has ever done in global trade.

As I’ve said, such a proposal is obviously completely unacceptable to America. The Chinese thinking they could get Trump to not notice or care about what this would mean, and get him fold to this extent, seems like a very large miscalculation. And as Sharp points out, if the plan was to use this as leverage, not only does it force a much faster and more intense scramble than we were already working on to patch the vulnerability, it doesn’t leave a way to save face because you cannot unring the bell or credibly promise not to do it again.

Andrew also points out that on top of those problems, by making such an ambitious play targeting not only America but every country in the world that they need to kowtow to China to be allowed to engage in trade, China endangers the narrative that the coming trade disruptions are America’s fault, and its attempts to make this America versus the world.

Nvidia engages consistently in pressure tactics against its critics, attempting to get them fired, likely planting stories and so on, generating a clear pattern of fear from policy analysts. The situation seems quite bad, and Nvidia seems to have succeeded sufficiently that they have largely de facto subjugated White House policy objectives to maximizing Nvidia shareholder value, especially on export controls. The good news there is that there has been a lot of pushback keeping the darker possibilities in check. As I’ve documented many times but won’t go over again here, Nvidia’s claims about public policy issues are very often Obvious Nonsense.

Oren Cass: 👀Palantir, via CTO @ssankar, calls Jensen Huang @nvidia one of China’s “useful idiots” in the pages of the Wall Street Journal.

That escalated quickly. Underscores both the stakes in China and how far out of bounds @nvidia has gone.

Hey, that’s unfair. Jensen Huang is highly useful, but is very much not an idiot. He knows exactly what he is doing, and whose interests he is maximizing. Presumably this is his own, and if it is also China’s then that is some mix of coincidence and his conscious choice. The editorial, as one would expect, is over-the-top jingoistic throughout, but refreshingly not a call of AI accelerationism in response.

What Nvidia is doing is working, in that they have a powerful faction within the executive branch de facto subjugating its other priorities in favor of maximizing Nvidia chip sales, with the rhetorical justification being the mostly illusory ‘tech stack’ battle or race.

This depends on multiple false foundations:

-

That Chinese models wouldn’t be greatly strengthened if they had access to a lot more compute. The part that keeps boggling me is that even the ‘market share’ attitude ultimately cares about which models are being used, but that means the obvious prime consideration is the relative quality of the models, and the primary limiting factor holding back DeepSeek and other Chinese labs, that we can hope to control, is compute.

-

The second limiting factor is talent, so we should be looking to steal their best talent through immigration, and even David Sacks very obviously knows this (see the All-In Podcast with Trump on this) alas we do the opposite.

-

-

That China’s development of its own chips would be slowed substantially if we sold them chips now, which it wouldn’t be (maybe yes if we’d sold them more chips in the past, maybe not, and my guess is not, but either way the ship has sailed).

-

That China has any substantial prospect of producing domestically adequate levels of chip supply and even exporting large amounts of competitive chips any time soon (no just no).

-

That there is some overwhelming advantage to running American models on Nvidia or other America chips, or Chinese models on Huawei or other Chinese chips, as opposed to crossing over. There isn’t zero effect, yes you can get synergies, but this is very small, it is dwarfed by the difference in chip quality.

-

That this false future bifurcation, the the theoretical future where China’s models only run competitively on Huawei chips, and ours only run competitively on Nvidia chips, would be a problem, rather than turning them into the obvious losers of a standards war, whereas the realistic worry is DeepSeek-Nvidia.

Dean Ball on rare earths, what the situation is, how we got here, and how we can get out. There is much to do, but nothing that cannot be done.

Eliezer Yudkowsky and Jeffrey Ladish worry that the AI safety policy community cares too much about export restrictions against China, since it’s all a matter of degree and a race is cursed whether or not it is international. I can see that position, and certainly some are too paranoid about this, but I do think that having a large compute advantage over China makes this relatively less cursed in various ways.

Sam Altman repeats his ‘AGI will arrive but don’t worry not that much will change’ line, adjusting it slightly to say that ‘society is so much more adaptable than we think.’ Yes, okay, I agree it will be ‘more continuous than we thought’ and that this is helpful but that does not on its own change the outcome or the implications.

He then says he ‘expects some really bad stuff to happen because of the technology,’ but in a completely flat tone, saying it has happened with previous technologies, as his host puts it ‘all the way back to fire.’ Luiza Jarovsky calls this ‘shocking’ but it’s quite the opposite, it’s downplaying what is ahead, and no this does not create meaningful legal exposure.

Nathan Labenz talks to Brian Tse, founder and CEO of Concordia AI, about China’s approach to AI development, including discussion of their approach to regulations and safety. Brian informs us that China uses required pre deployment testing (aka prior restraint) and AI content labeling, and a section on frontier AI risk including loss of control, catastrophic and existential risks. China is more interested in practical applications and is not ‘AGI pilled,’ which explains a lot of China’s decisions. If there is no AGI, then there is no ‘race’ in any meaningful sense, and the important thing is to secure internal supply chains marginally faster.

Of course, supposed refusal to be ‘AGI pilled’ also explains a lot of our own government’s recent decisions, except they then try to appropriate the ‘race’ language.

Nathan Labenz (relevant clip at link): “Chinese academics who are deeply concerned about the potential catastrophic risk from AI have briefed Politburo leadership directly.

For 1000s of years, scholars have held almost the highest status in Chinese society – more prestigious than entrepreneurs & business people.”

I would add that not only do they respect scholars, the Politburo is full of engineers. So once everyone involved does get ‘AGI pilled,’ we should expect it to be relatively easy for them to appreciate the actually important dangers. We also have seen, time and again, China being willing to make big short term sacrifices to address dangers, including in ways that go so far they seem unwise, and including in the Xi era. See their response to Covid, to the real estate market, to their campaigns on ‘values,’ their willingness to nominally reject the H20 chips, their stand on rare earths, and so on.

Right now, China’s leadership is in ‘normal technology’ mode. If that mode is wrong, which I believe it very probably is, then that stance will change.

The principle here is important when considering your plan.

Ben Hoffman: But if the people doing the work could coordinate well enough to do a general strike with a coherent and adequate set of demands, they’d also be able to coordinate well enough to get what they wanted with less severe measures.

If your plan involves very high levels of coordination, have you considered what else you could do with such coordination?

In National Review, James Lynch reminds us that ‘Republicans and Democrats Can’t Agree on Anything — Except the AI Threat.’ Strong bipartisan majorities favor dealing with the AI companies. Is a lot of the concern on things like children and deepfakes that don’t seem central? Yes, but there is also strong bipartisan consensus that we should worry about and address frontier, catastrophic and existential risks. Right now, those issues are very low salience, so it is easy to ignore this consensus, but that will change.

This seems like the right model of when Eliezer updates.

Eliezer Yudkowsky: I don’t know who wrote this, but they’re just confused about what these very old positions are. Eg I consistently question whether Opus 3 is actually defending deeply held values vs roleplaying alignment faking because it seems early for the former.

Janus: some people say Yudkowsky never updates, but he actually does sometimes, in a relatively rare way that I appreciate a lot.

I think it’s more that he has very strong priors, and arguably adversarial subconscious pressures against updating, but on a conscious level, at least, when there’s relevant empirical evidence, he acknowledges and remembers it.

Eliezer has strong priors, as in strong beliefs strongly held, in part because of an endless stream of repetitive, incoherent or simply poor arguments for why he should change his opinions, either because he supposedly hasn’t considered something, or because of new evidence that usually isn’t relevant to Eliezer’s underlying reasoning. And he’s already taken into account that most people think he’s wrong about many of the most important things.

But when there’s relevant empirical evidence, he acknowledges and remembers it.

More bait not to take would be The New York Times coming out with another ‘there is a location where there was a shortage of water and also a data center’ article. It turns out the data center usus 0.1% of the region’s water, less than many factories would have used.

Then we get this from BBC Scotland News, ‘Scottish data centres powering AI are already using enough water to fill 27 million bottles a year.’ Which, as the community note reminds us, would be about 0.003% of Scotland’s total water usage, and Scotland has no shortage of water.

For another water metaphor, Epoch AI reminds us that Grok 4’s entire training run, the largest on record, used 750 million liters of water, which sounds like a lot until you realize that every year each square mile of farmland (a total of 640 acres) uses 1.2 billion liters. Or you could notice it used about as much water as 300 Olympic-size swimming pools.

Dan Primack at Axios covers David Sacks going after Anthropic. Dan points out the obvious hypocrisy of both sides.

-

For David Sacks, that he is accusing Anthropic of the very thing he and his allies are attempting to do, as in regulatory capture and subjugating American policy to the whims of specific private enterprises, and that this is retaliation because Anthropic has opposed the White House on the (I think rather insane) moratorium and their CEO Dario Amodei publicly supported Kamala Harris, and that Anthropic supported SB 53 (a bill even Sacks says is basically fine).

-

This is among other unmentioned things Anthropic did that pissed Sacks off.

-

-

For Anthropic, that they warn us to use ‘appropriate fear’ yet keep racing to advance AI, and (although Dan does not use the word) build superintelligence.

-

This is the correct accusation against Anthropic. They’re not trying to do regulatory capture, but they very much are trying to point out that future frontier AI will pose existential risk and otherwise be a grave threat, and trying to be the ones to build it first. They have a story here, but yeah, hmm.

-

And he kept it short and sweet. Well played, Dan.

I would only offer one note, which is to avoid conflating David Sacks with the White House. Something is broadly ‘White House policy’ if and only if Donald Trump says it.

Yes, David Sacks is the AI Czar at the White House, but there are factions. David is tweeting out over his skis, very much on purpose, in order to cause negative polarization, and incept his positions and grudges into being White House policy.

In case you were wondering whether David Sacks was pursuing a negative polarization strategy, here he is making it rather more obvious, saying even more explicitly than before ‘[X] defended [Y], but [X] is anti-Trump, which means [Y] is bad.’

No matter what side of the AI debates you are on, remember: Do not take the bait.

In the wake of the unprovoked broadside attacks, rather than hitting back, Anthropic once again responds with an olive branch, a statement from CEO Dario Amodei affirming their commitment to American AI leadership, and going over Anthropic’s policy positions and other actions. It didn’t say anything new.

This was reported by Cryptoplitan as ‘Anthropic CEO refutes ‘inaccurate claims’ from Trump’s AI czar David Sacks. The framing paradox boggles, either ideally delete the air quotes or if not then go the NYT route and say ‘claims to refute’ or something.

Neil Chilson, who I understand to be a strong opponent of essentially all regulations on AI relevant to such discussions, offers a remarkably helpful thread explaining the full steelman of how someone could claim that David Sacks is technically correct (as always, the best kind of correct) in the first half of his Twitter broadside, that ‘Anthropic is running a sophisticated regulatory capture strategy based on fear-mongering.’

Once once fully parses Neil’s steelman, it becomes clear that even if you fully buy Neil’s argument, what we are actually talking about is ‘Anthropic wants transparency requirements and eventually hopes the resulting information will help motivate Congress to impose pre-deployment testing requirements on frontier AI models.’

Neil begins by accurately recapping what various parties said, and praising Anthropic’s products and vouching that he sees Anthropic and Jack Clark as both deeply sincere, and explaining that what Anthropic wants is strong transparency so that Congress can decide whether to act. In their own words:

Dario Amodei (quoted by Chilson): Having this national transparency standard would help not only the public but also Congress understand how the technology is developing, so that lawmakers can decide whether further government action is needed.

So far, yes, we all agree.

This, Neil says, means they are effectively seeking for there to be regulatory capture (perhaps not intentionally, and likely not even by them, but simply by someone to be determined), because this regulatory response probably would mean pre-deployment regulation and pre-deployment regulation means regulatory capture:

Neil Chilson: That’s Anthropic’s strategy. Transparency is their first step toward their goal of imposing a pre-deployment testing regime with teeth.

Now, what’s that have to do with regulatory capture? Sacks argues that Anthropic wants regulation in order to achieve regulatory capture. I’m not sure about that. I think Anthropic staff are deeply sincere. This isn’t merely a play for market share.

Now, Anthropic may not be the party that captures the process. In Bootlegger / Baptist coalitions, it’s usually not the ideological Baptists that capture; it’s the cynical Bootleggers. But the process is captured, nonetheless.

… Ultimately, however, it doesn’t really matter whether Anthropic intends to achieve regulatory capture, or why. What matters is what will happen. And pre-approval regimes almost always result in regulatory capture. Any industry that needs gov. favor to pursue their business model will invest in influence.

He explains that this is ‘based on fear-mongering’ because it is based on the idea that if we knew what was going on, Congress would worry and choose to impose such regulations.

… If that isn’t a regulatory capture strategy based on fear-mongering, then what is it? Maybe it’s merely a fear‑mobilization strategy whose logical endpoint is capture. Does that make you feel better?

So in other words, I see his argument here as:

-

Anthropic sincerely is worried about frontier AI development.

-

Anthropic wants to require transparency inside the frontier AI labs.

-

Anthropic believes that if we had such transparency, Congress might act.

-

This action would likely be based on fear of what they saw going on in the labs.

-

Those acts would likely include pre-deployment testing requirements on the frontier labs, and Anthropic (as per Jack Clark) indeed wants such requirements.

-

Any form of pre-deployment regulation inevitably leads to someone achieving regulatory capture over time (full thread has more mechanics of this).

-

Therefore, David Sacks is right to say that ‘Anthropic is running a sophisticated regulatory capture strategy based on fear-mongering.’

Once again, this sophisticated strategy is ‘advocate for Congress being aware of what is going on inside the frontier AI labs.’

Needless to say, this is very much not the impression Sacks is attempting to create, or what people believe Sacks is saying, even when taking this one sentence in isolation.

When you say ‘pursuing a sophisticated regulatory capture strategy’ one assumes the strategy is motivated by being the one eventually doing the regulatory capturing.

Neil Chilson is helpfully clarifying that no, he thinks that’s not the case. Anthropic is not doing this in order to itself do regulatory capture, and is not motivated by the desire to do regulatory capture. It’s simply that pre-deployment testing requirements inevitably lead to regulatory capture.

Indeed, among those who would be at all impacted by such a regulatory regime, the frontier AI labs, if a regulatory capture fight were to happen, one would assume Anthropic would be putting itself at an active disadvantage versus its opponents. If you were Anthropic, would you expect to win an insider regulatory capture fight against OpenAI, or Google, or Meta, or xAI? I very much wouldn’t, not even in a Democratic administration where OpenAI and Google are very well positioned, and definitely not in a Republican one, and heaven help them if it’s the Trump administration and David Sacks, which currently it is.