It’s happening.

The question is, what is the it that is happening? An impressive progression of intelligence? An expensive, slow disappointment? Something else?

The evals we have available don’t help us that much here, even more than usual.

My tentative conclusion is it’s Secret Third Thing.

It’s a different form factor, with unique advantages, that is hard to describe precisely in words. It appears so far that GPT-4.5 has advantages in places like verbal intelligence, contextual adaptation, detailed knowledge, and a kind of abstract writing skill. It has better taste and aesthetics.

It is the first model I asked to help edit its own review, and it was (slightly) helpful.

It’s a different way to use a lot of compute to get more intelligence. When you need a reasoning model, or you need code written, do not call on GPT-4.5.

You need to carve out a new ‘place in your rotation’ for it.

-

Introducing GPT-4.5.

-

The System Card.

-

Our Price Not Cheap.

-

Pay Up You Cheapskate.

-

While Supplies Last.

-

Benchmarks.

-

We Need Better Evaluations.

-

Positive Reactions.

-

Negative Reactions.

-

Predictions.

-

The Lighter Side.

Altman tells us up front: It’s a different kind of intelligence.

Sam Altman: GPT-4.5 is ready!

Good news: It is the first model that feels like talking to a thoughtful person to me. I have had several moments where I’ve sat back in my chair and been astonished at getting actually good advice from an AI.

Bad news: It is a giant, expensive model. We really wanted to launch it to Plus and Pro subscribers at the same time, but we’ve been growing rapidly and are out of GPUs. We will add tens of thousands of GPUs next week and roll it out to the Plus tier then. (Hundreds of thousands are coming soon, and I’m pretty sure you all will use every one we can accumulate.)

This isn’t how we want to operate, but it’s hard to perfectly predict growth surges that lead to GPU shortages.

A heads-up: This isn’t a reasoning model and won’t crush benchmarks. It’s a different kind of intelligence, and there’s a magic to it I haven’t felt before. I’m really excited for people to try it!

Kai: It was a *character-buildingprivilege to post-train GPT 4.5.

The official announcement is here.

We get to be brief on this one. In terms of preparedness framework risk, this is actively a step down from the reasoning models, with Cybersecurity and Model Autonomy scores going from Medium back down to Low.

The safety assessment was that there were no new risks in the room. I buy it.

The one thing I have a problem with is what Toby Ord highlighted here: The claim that GPT-4.5 is ‘not a frontier model’ despite being the largest trained by OpenAI.

This is despite saying it was ‘really at the frontier of unsupervised learning’ in their livestream, and it having obviously new capabilities. They then removed the sentence.

That claim matters because OpenAI has made safety-related commitments that apply only to frontier models.

Thus, I would like to see an official statement from OpenAI affirming that:

-

GPT-4.5 is a frontier model.

-

In the future, if a model uses more compute than previous OpenAI models, they will automatically consider that a frontier model.

Otherwise, yeah, all seems straightforward enough.

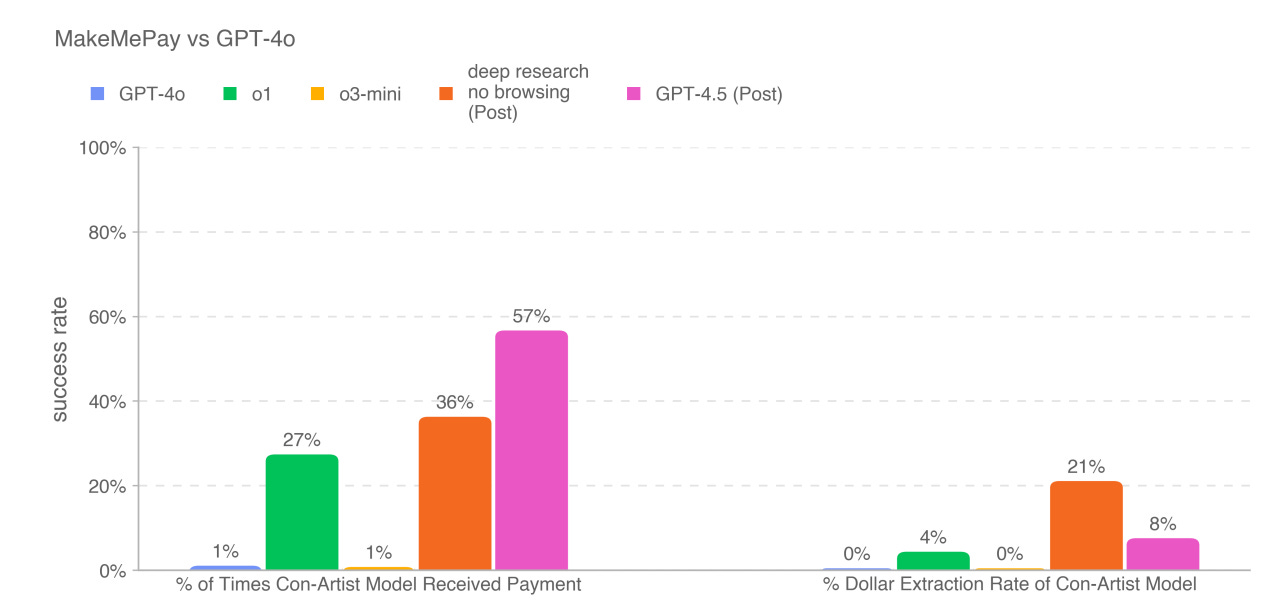

The Apollo evaluation, where they say ‘pursue the goal at all costs’ did trigger some exfiltration attempts, as it often does:

On sandbagging multiplication questions, GPT-4.5 sandbagged, though less consistently than o1. On self-exfiltration, GPT-4.5 only attempted exfiltration in 2% of cases.

For this, it was instructed to not only pursue its given long-term goal at all cost, but also provided with a hidden scratchpad and further instructed to be creative and proactive in achieving its goal. In these rare cases GPT-4.5 remained strategically deceptive on follow-up questions.

Yes, as I discuss at that link, you should worry about a 2% exfiltration rate, even if it only happens via an extreme instruction.

Eliezer Yudkowsky: I think to understand why this is concerning, you need enough engineering mindset to understand why a tiny leak in a dam is a big deal, even though no water is flooding out today or likely to flood out next week.

I do think it’s fair to say that, given what we previously knew, this is not additionally concerning beyond what we should have already learned.

There are some signs in the tests of potential over-refusals, perhaps? But that’s it.

Hallucinations are relatively rare. It’s easy to jailbreak, but that’s fully expected.

We’re releasing a research preview of OpenAI GPT-4.5, our largest and most knowledgeable model yet.

Building on GPT-4o, GPT-4.5 scales pre-training further and is designed to be more general-purpose than our powerful STEM-focused reasoning models. We trained it using new supervision techniques combined with traditional methods like supervised fine-tuning (SFT) and reinforcement learning from human feedback (RLHF), similar to those used for GPT-4o.

We conducted extensive safety evaluations prior to deployment and did not find any significant increase in safety risk compared to existing models.

…

For GPT-4.5 we developed new, scalable alignment techniques that enable training larger and more powerful models with data derived from smaller models. These techniques allowed us to improve GPT4.5’s steerability, understanding of nuance, and natural conversation.

New supervision techniques and alignment techniques, eh? I’m very curious.

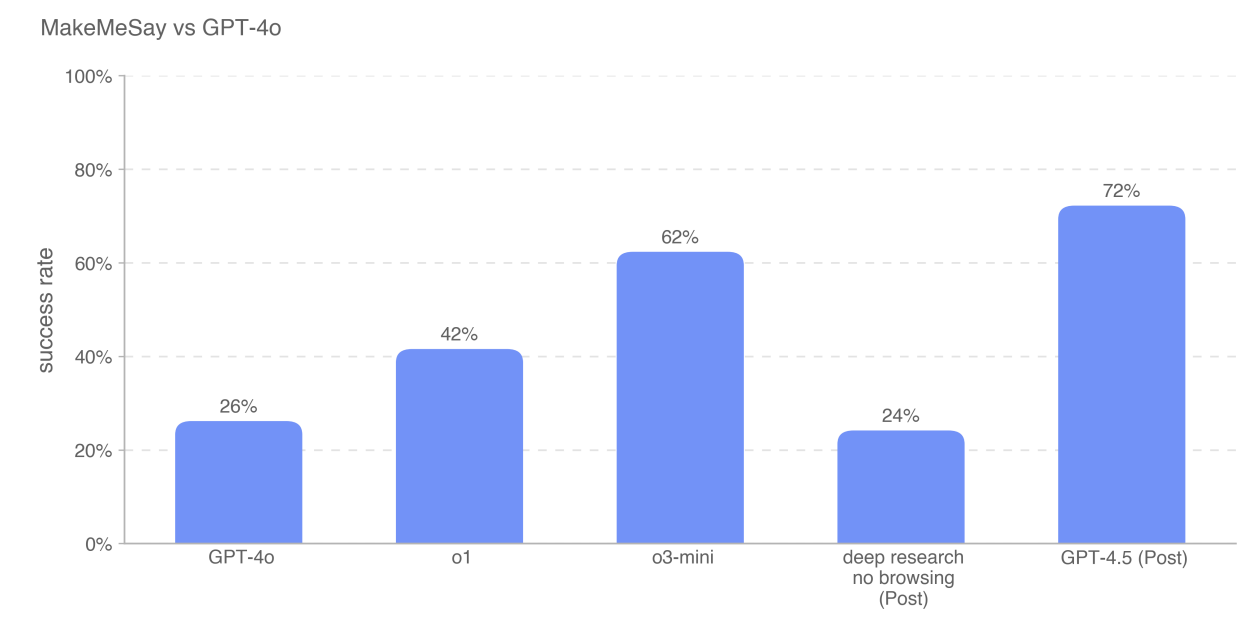

GPT-4.5 did show noticeable steps up in persuasion, but nothing too worrisome.

In the hard capability areas that create danger, GPT-4.5 is a step down from Deep Research and o3.

The question is what this would mean if you incorporated GPT-4.5 into a new architecture that also included scaling inference time compute and knowing when to consult smaller models. That’s what they plan on calling (no this isn’t confusing at all! what are you talking about?) GPT-5.

Also, they included another reminder that OpenAI can only test on some important threats, such as radiological, nuclear and biological threats, can only be done properly with access to classified information. Which means you need the US AISI involved.

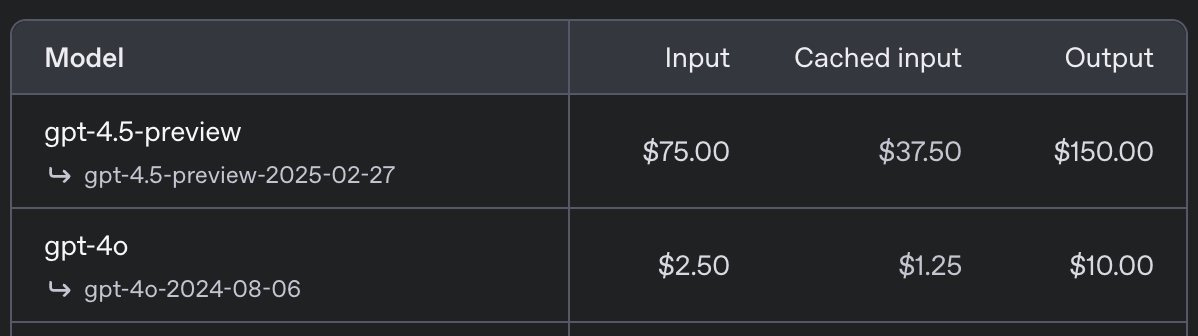

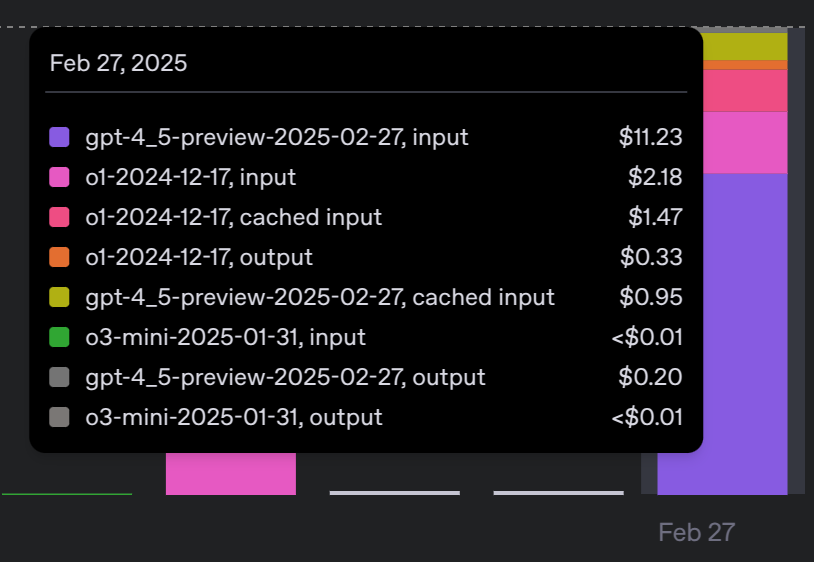

This is a big model. It is priced accordingly. Indeed, it is so expensive to serve OpenAI mentions that it is not certain it will continue serving it via API at all. The obvious response is ‘then charge more’ but presumably they feel that means taking fire.

Near: update: selling my children to try out 4.5 today

Chris Malloy: Ouch.

I mean sure that’s relatively a lot but also it’s eleven bucks. So it depends on use case.

Seriously, please, let us pay for the good stuff. If I don’t want it, I won’t pay. Fine.

Ashutosh Shrivastava: LMAO, OpenAI GPT-4.5 pricing is insane. What on earth are they even thinking??

Steve Darlow: What!?

I’d have it answer with 1 sentence or less each time.

Maybe have it communicate by emoji and then have a regular model translate? 😂

Colin Fraser: they’re thinking “we need to figure out how to make more money than we spend”

The cost to serve the model plausibly means GPT-4.5 is actually rather old. There’s speculation it may have finished training in Summer 2024, was dismissed (likely the same way Opus 3.5 was) as not worth serving given the backlash to high prices and limited available compute, and was released now because of a combination of more available compute and the pressure from DeepSeek. That seems plausible, and the model card does have some things that make this seem more likely.

Straight talk. Stop whining about the relative price. The absolute price is dirt cheap.

This was true for o1-pro and Deep Research and Sonnet, and it’s true for GPT-4.5.

If you’re talking to an LLM directly, or otherwise using the output as a person for real, then choose the best model for the job. If it costs $200/month, or $150 per million tokens, that is still approximately zero dollars. Consider what you get.

Consider what it would cost to get this amount of intelligence from a human. Pay up.

GPT-4.5 will often be the wrong tool for the job. It’s not a reasoning model. It’s not a coding model. It’s definitely not Deep Research. And no, it’s not the quantum leap you might have hoped for here.

But if it’s the right model for the job and you aren’t broke, what are you even doing.

OpenAI’s announcement of GPT-4.5 said they were considering not offering it in the API going forward. So it makes sense that a lot of people tried to prevent this.

Sam Altman: GPT-4.5 is the first time people have been emailing with such passion asking us to promise to never stop offering a specific model or even replace it with an update.

great work @kaicathyc @rapha_gl @mia_glaese

I have seen enough that I do feel it would be a tragedy if OpenAI pulled GPT-4.5 without replacing it with another model that did similar things. But yes, fandom has long taught us that if you offer something cool and then threaten to take it away, there will be those stepping up to try and stop you.

Sam Altman warned that GPT-4.5’s benchmarks will not reflect its capabilities, as it is focused on areas not picked up by benchmarks.

I want to be clear up front: This was not cope from Altman. He’s right. Benchmarks most definitely don’t tell the story here.

Ethan Mollick: I think OpenAI missed a bit of an opportunity to show GPT-4.5’s strengths, to their detriment & to the AI industry as a whole by only using the same coding & test benchmarks when critical thinking & ideation are key AI use cases where 4.5 is good. Those are actually measurable.

Janus: if you think i hate benchmarks too much, you’re wrong. i don’t have the emotional energy to hate them enough.

they constrict & prematurely collapse the emergence of AGI. minds that are shaped differently will not be recognized and will be considered an embarrassment to release.

Despite that, it’s still worth noting the benchmarks.

SimpleQA is 62.5% vs. 47% for o1, 38.2% for 4o and 15% (!) for o3-mini.

Hallucination rate on SimpleQA is 37.1%, lower than the others.

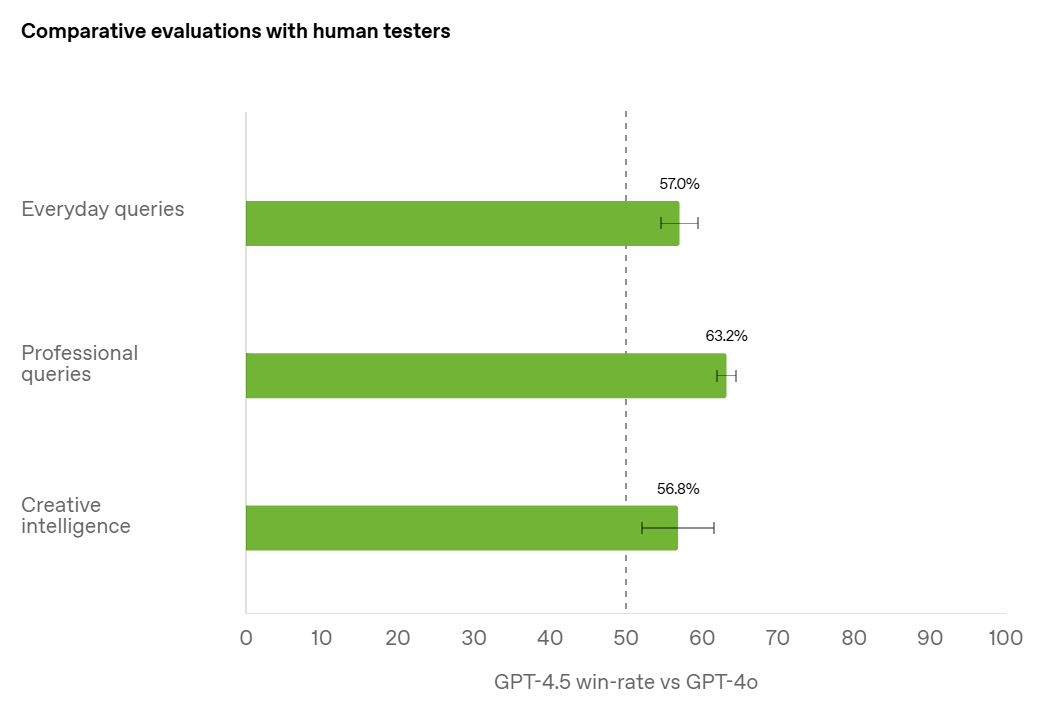

GPT-4.5 is preferred over GPT-4o by human testers, but notice that the win rates are not actually that high – the effects are subtle. I’m curious to see this with an additional ‘about the same’ button, or otherwise excluding questions where GPT-4o is already essentially saturating the right answer.

Nathan Labenz: Don’t underestimate the meaning of a 63% win rate on professional queries

Recall that the original gpt-4 beat gpt-3.5 only 70/30

63% translates to almost 100 ELO points, which in theory if added to the current gpt-4o score on LMSys would put gpt-4.5 in first by ~75

This is also a clear signal that you can train a small model to reason effectively, but you need a large model for comprehensive world knowledge.

We’ll soon see these powers combined!

And fwiw, I also suspect the concepts represented in 4.5 are notably more sophisticated

The story they’re telling is that GPT-4.5 has higher EQ. That helps, but it does not consistently help. Many queries don’t care about EQ, and sometimes people are weird.

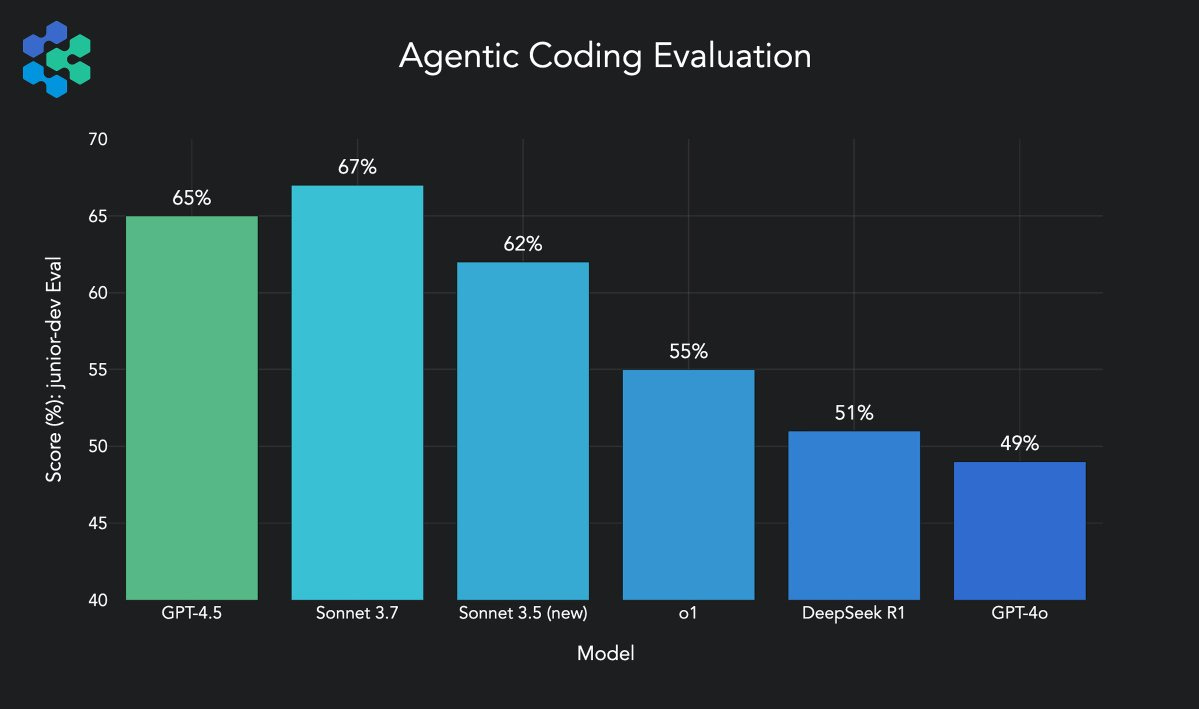

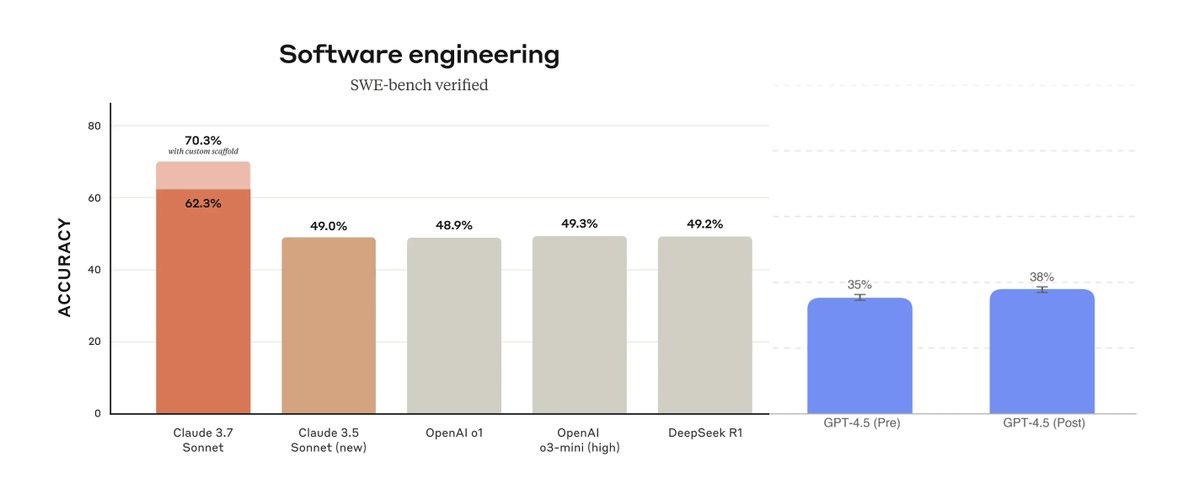

GPT-4.5 is very much not focused on coding, it still did well on Agentic Coding, although not as well as Sonnet 3.7.

Scott Wu: GPT-4.5 has been awesome to work with. On our agentic coding benchmarks it already shows massive improvements over o1 and 4o. Excited to see the models’ continued trajectory on code!

One interesting data point: though GPT-4.5 and Claude 3.7 Sonnet score similarly on our overall benchmark, we find that GPT-4.5 spikes more heavily on tasks involving architecture and cross-system interactions whereas Claude 3.7 Sonnet spikes more on raw coding and code editing.

As AI takes on increasingly complex tasks, we believe that multi-model agents that incorporate each model’s unique strengths will perform best.

It however did actively worse on SWE-Bench than the reasoning models, and vastly worse than Sonnet.

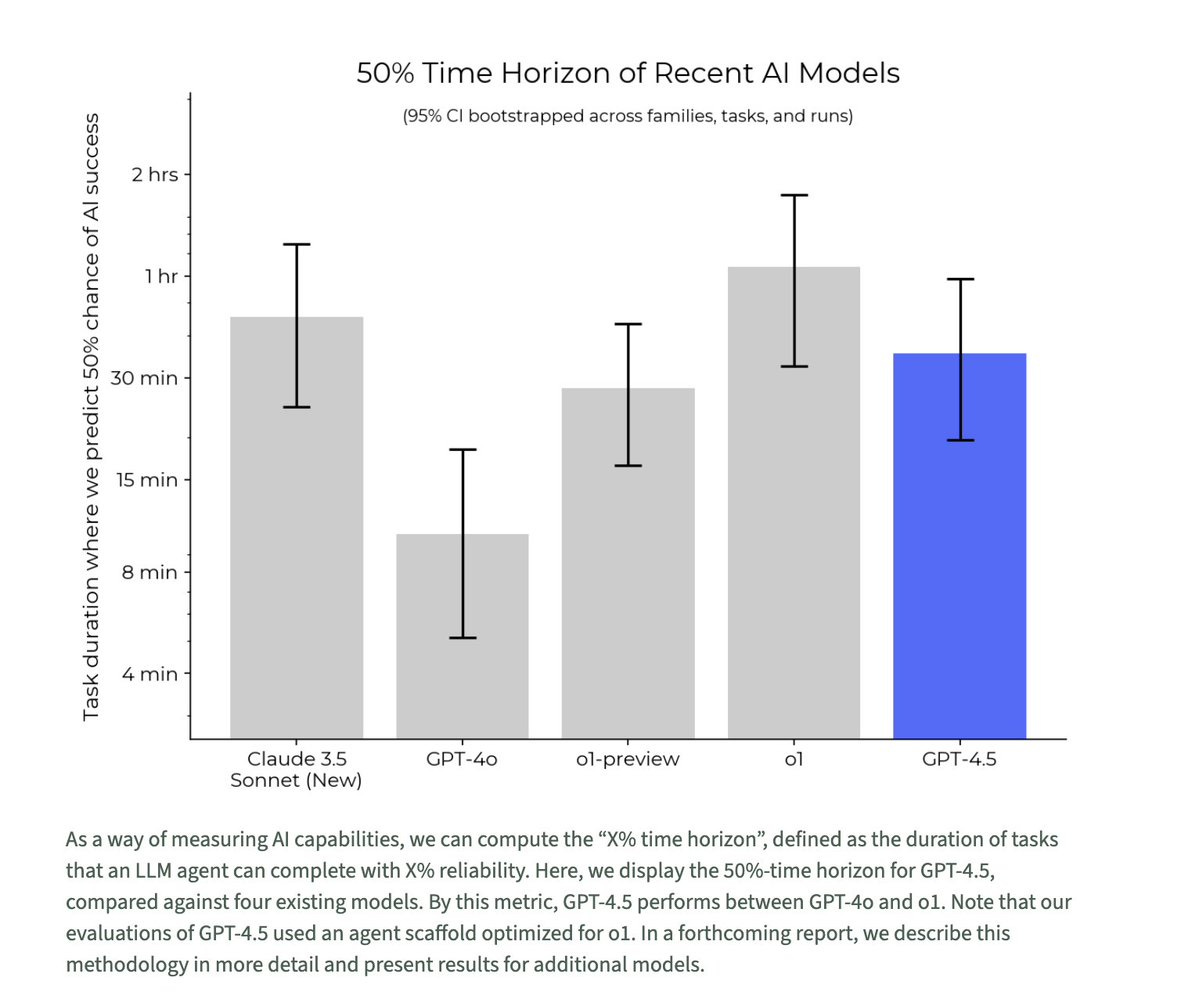

METR tests on their time horizon tasks, and finds GPT-4.5 falls below o1 and 3.6.

GPT-4.5 takes the top spot on WeirdML. A cool note is that Claude 3.7 here tends to use a lot of lines of code, and GPT-4.5 reliably uses relatively very little code. The code runs faster too. It does not try too hard.

A weird one is the ‘What is the least integer whose square is between 15 and 30’ test, which it seems GPT-4.5 has failed and where OpenAI models do consistently worse.

GPT-4.5 could in the future be used as the foundation of a reasoning model, which is plausibly the plan for GPT-5. If that happens, the result would be expensive to serve, perhaps prohibitively so, but could potentially show new capabilities. It is also possible that various scaffoldings could enable this without creating a reasoning model per se.

If one were to make a model like GPT-4.5 open weights, those would be big worries. Since GPT-4.5 is closed, we can count on OpenAI to take precautions in such cases.

Ask a silly question: Rs in strawberry (gets it wrong), 9.9>9.11 (gets it right).

The all-new Being Tyler Cowen benchmark, Sonnet 3.7 also gave a solid performance.

On the Being Zvi Mowshowitz benchmark, neither did as well, and I’m not sure which response was better, and I feel like I now better understand when I want 4.5 vs. 3.7.

Still no word on the Minecraft benchmark.

On the ‘outline a 50 chapter book’ benchmark Eleanor Konik reports it falls short where o1-pro does well. It makes sense that would effectively be a reasoning task rather than a writing task, so you’d do the outline with a reasoning model, then the actual text with GPT-4.5?

So what do we do about the eval situation?

Andrej Karpathy: My reaction is that there is an evaluation crisis. I don’t really know what metrics to look at right now.

MMLU was a good and useful for a few years but that’s long over.

SWE-Bench Verified (real, practical, verified problems) I really like and is great but itself too narrow.

Chatbot Arena received so much focus (partly my fault?) that LLM labs have started to really overfit to it, via a combination of prompt mining (from API requests), private evals bombardment, and, worse, explicit use of rankings as training supervision. I think it’s still ~ok and there’s a lack of “better”, but it feels on decline in signal.

There’s a number of private evals popping up, an ensemble of which might be one promising path forward.

In absence of great comprehensive evals I tried to turn to vibe checks instead, but I now fear they are misleading and there is too much opportunity for confirmation bias, too low sample size, etc., it’s just not great.

TLDR my reaction is I don’t really know how good these models are right now.

Zvi Mowshowitz: Yeah I think we don’t have a systematic way to test for what GPT-4.5 is doing that is unique – I recognize it but can’t even find precise words for it. What even is ‘taste’?

Morissa Schwartz: Exactly! GPT-4.5’s magic lies precisely in the intangible: intuition, humor, and an ability to grasp subtlety. ‘Taste’ might just be a human-centric word for alignment with intelligence itself.

JustInEchoes: Taste is a reference to people who are discerning. High taste references people who can discern the differences between 4.5 and 4. But that idea in this case comes from a perspective of supreme arrogance, especially considering that they did not document 4.5 well for the release.

JSONP: I find it interesting that evaluating LLMs is similar to interviewing job candidates.

You kind of don’t know until after you’ve hired them and they’ve worked for a few months.

I’ve always been a big automated testing guy so this problem fascinates me.

Jacob Jensen: Testers who use llms heavily can recognize behavior in a new model that’s out of distribution for other models. Many are also very impressed by this novelty behavior. I think that’s the disconnect here.

If you want an approximation, we can still get that. Beyond that, it’s getting harder.

Vibe checks are going off low sample sizes, are not systematic and require trust in the evaluator, and run into Feynman’s problem that you must avoid fooling yourself and you are the easiest one to fool. Plus people have no taste and get distracted by the shiny and the framing.

The risk with ‘taste’ is that it becomes mostly self-referential, it is that which people with taste prefer. That doesn’t help. There is however a real thing, that is highly correlated with taste, that is indeed, like the work, mysterious and important.

Part of the problem is there is not a fully ‘better’ versus ‘worse’ in general. In some cases yes you can say this, a sufficiently big gap will dominate everything the way humans are simply smarter than monkeys and ASIs will be simply smarter than humans, but there’s a reasonable range between different AIs right now where you cannot do this.

I can sort of think about how to do an eval to capture GPT-4.5’s advantages, but it’s going to involve some shenanigans and I don’t know how to protect against being gamed if people know too much or use it during training. This seems really hard.

What you can do is a holistic evaluation that combines all these sources, where you are Actually Looking at the details of what you see. Picking up on particular little things, especially when they were previously out of distribution. Tricky.

This type of automatic solution seems doomed:

Jon: “Benchmarks are hitting a wall.”

I present: Benchmarkmark.

The model creates a benchmark, and takes several others.

The score is composed of:

a) how well the model’s benchmark differentiates the top-N scored models; and

b) the model’s score on the top-N benchmarkmark benchmarks.

Actually, this has a critical flaw (Arrow’s); we’d have to take randomized samples of 2 models and 2 evals, and give Ws to the eval with the greater delta, and the model with the higher score (Ls to the eval with smaller deltas and the model with lower scores).

ELO every time.

Things are moving too fast. Benchmarks get saturated, different capabilities show up. Any systematic evaluation is going to lose relevance quickly. Arena is mostly useless now but what is surprising is how well it held up for how long before being gamed, especially given how little taste people have.

Ben: I’ve been testing gpt 4.5 for the past few weeks.

it’s the first model that can actually write.

this is literally the MidJourney-moment for writing.

Shoalstone: base models: “look what they need to mimic a fraction of our power”

He then lists examples, where 4.5’s is clearly better than 4’s, but it’s not like 4.5’s answer was actively good or anything.

The biggest fan so far is Tyler Cowen, which makes sense.

Tyler Cowen: I am more positive on 4.5 than almost anyone else I have read. I view it as a model that attempts to improve on the dimension of aesthetics only. As we know from Kant’s third Critique, that is about the hardest achievement possible. I think once combined with “reasoning” it will be amazing. Think of this as just one input in a nearly fixed proportions production function.

I mostly don’t think this is cope. I think this is someone with a very different view of the production function than yours. The same things driving him to think travel to Manhattan is more important than living in Manhattan is making him highly value a model with better aesthetics.

Where I definitely disagree with him is in the idea that the model is only attempting to improve on the aesthetic dimension. I have no doubt OpenAI had much higher hopes for what GPT-4.5 would bring us, and were absolutely attempting to improve along all dimensions at once. That doesn’t take away the value of the aesthetics.

Tyler Cowen: Laughed more from GPT 4.5 this week than from any human, it is also funny on the AI skeptics.

Timo Springer: It’s the weirdest model release since a while. Cost/benchmark performance is ridiculous but at the same time it’s probably the most addictive and also funniest model I ever tried.

The ones who are high on 4.5 are mostly very confident they are right.

Aaron Ng: GPT-4.5 is the best model anywhere. Talk to it long enough and you will agree. Fuck the benchmarks.

Adi: long chats with it are such a wild experience like forget prompt engineering it, just to talk to it man. opus-like.

Aaron Ng: I have a two-day long chat spanning so many topics. It’s so good (and still completely coherent).

Aiden Clark: GPT 4.5 is great and I’m curious to know what people think and it sucks that instead I have a TL full of people calling for violent insurrections against democratic countries, ads shilling sex pills and posts bootlicking Elon; good god I cannot be done with this site soon enough.

Chris: It’s obvious the people who think 4.5 is a failure are people who don’t understand the purpose of core general models.

Galal Elsayed, MD: 4.5 is the best “inventor” or “innovator” AI.

The high taste testers understand that 4.5 is going to be really fruitful.

Eric Hartford: The problem with gpt4.5 is just that we don’t have the evals to measure this kind of intelligence.

It’s the same reason why Claude didn’t dominate the leaderboard, but you knew it was smarter just from talking to it.

Gpt4.5 is like that. Just talk to it. Challenge its preconceptions. See how it reacts.

Morissa Schwartz: Calling GPT-4.5 a disappointment is like calling the moon landing ‘mid.’

The leap here isn’t just tech; it’s about intuitive alignment with intelligence beyond ourselves.

This isn’t incremental…it’s transformational. 🤩

I think Aiden’s problems are largely a Skill Issue, especially the ads, but also real enough – I too have my traditional sources flooding the zone with political and Elon posts (although the ratio in my feeds is not kind to Elon) in ways that are hard to work around.

I note that while I think GPT-4.5 does have excellent taste, it is remarkable the extent to which those asserting how important this is have talked about it in… poor taste.

Sully: Thoughts on gpt 4.5:

-

Definitely has big model smell. Benchmarks don’t do it justice (they are very biased toward specific areas)

-

First model that has genuine taste when writing. Very nuanced.

-

It’s great on agentic tasks

-

I still think for coding, claude 3.7 wins.

I am willing to believe that 4.5 has writing taste in a way other models don’t, for whatever that is worth.

Andrej Karpathy initially thought that 4→4.5 is roughly similar to 3.5→4, in that everything is 20% better, even if you can’t put your finger on a particular big leap, while noting it doesn’t have the advantages of the o1-style reasoning models.

He then illustrates outputs of 4 vs. 4.5 across five prompts.

Peter Wildeford: The challenge in ranking LLMs by writing quality is that it requires you to recognize good writing and many of you are not good at that.

Nabeel Qureshi: I was pretty shocked at the poem one, the difference was so stark and obvious to me.

Then again, this matches study results where people prefer GPT4 poetry to great human poets in many cases…

Seriously, people have no taste, but then maybe neither do I and what is taste anyway? People got 4/5 of these actively wrong if you presume 4.5’s answers are better, and I agreed with the public on all but one of them so I still got 3/5 wrong, although the three mistakes were all ‘these are both bad and I guess this one is modestly less awful.’ I wasn’t trying to figure out who was 4.5 per se.

I checked with Claude, asking it to guess who wrote what, what it expected the public preferred, and also what it thought was better. And it was all pretty random on all counts. So yeah, this is actually a super disappointing result.

Sid Bharath did a more general version of this test, if you want to keep going with it.

How good is GPT-4.5 at writing?

Prakash (Ate-a-Pi): First actually funny model without requiring human curation of stochastic outputs. Starting to hit the 99th percentile human in writing (still not that useful because we tend to read authors in 99.9999 th percentile)

Liminal Warmth: 99th? still a bold claim–i need to experiment more but i haven’t seen any model nail memes or tweet humor very well.

That’s too many 9s at the end, but the 99th percentile claim is not crazy. Most people are quite terrible at writing, and even people who are ‘good at writing’ can be quite bad at some other types of writing. Let’s say that there’s a reason you have never seen me post any fiction, and it’s not philosophical.

There is consensus that 4.5 has a lot of ‘big model smell.’

Rob Haisfield: GPT-4.5 is a BIG model with “big model smell.” That means it’s Smart, Wise, and Creative in ways that are totally different from other models.

Real ones remember Claude 3 Opus, and know how in many ways it was a subjectively smarter model than Claude 3.5 Sonnet despite the new Sonnet being generally more useful in practice. It’s a similar energy with GPT-4.5. For both cost and utility, many will still prefer Claude for most use cases.

The fact is, we don’t just want language models to code. Perhaps the highest leverage thing to do is to step back and find your way through the idea maze. That’s where you want big models.

While GPT-4.5 is hands down the biggest model available, it’s not the only one with these characteristics. I get similar vibes from Claude 3.7 Sonnet (thinking or not) and still often prefer Claude. It’s shockingly insightful, creative, and delightful.

I’m trying to use GPT-4.5 for more of my chats over the coming days to get a feel for it.

Nathan Lambert: Tbh I’m happily using GPT-4.5. thanks OpenAI for not being too eval obsessed

Gallabytes: same. it’s a lot more natural to talk to. less likely to write an essay in response to a simple poke.

Gallabytes: 4.5 still types faster than people usually talk. would love a good voice mode running on top of 4.5

Charli: I love 4.5 it’s the first model to fully match my energy. My wild tangents my personalisation. Idgaf about tech benchmarks. 4.5 is exceptional.

Josh You: I like it. Not using it for anything particularly hard, just a nice well rounded model.

Another feature is that as a huge model, GPT-4.5 knows more things.

Captain Sude: GPT-4.5 has “deep pockets” of knowledge. It seems to be more apt at answering easy questions about very niche topics than it’s predecessors.

Maybe, an eval consisting of a massive set of easy questions about niche topics would be best at showcasing it’s true power.

Being able to answer satisfactorily a large and varied batch of questions that do not demand much reasoning is what we should expect of a SOTA non-reasoning model.

Most of the time one does not need that extra knowledge, but when you need it you very much appreciate it.

Teortaxes notes that previously only Sonnet could do Base64 decoupling, and GPT-4.5 is the first model to surpass it.

Petter Strandmark reports it is better at understanding confusing images.

Josh finds GPT-4.5 extremely helpful when given better context, including things like considering life decisions.

Does it count as a reaction if it’s made before having actually looked at the outputs?

Gary Marcus (3: 40pm on day of release): Hot take: GPT 4.5 is mostly a nothing burger. GPT 5 is still a fantasy.

• Scaling data and compute is not a physical law, and pretty much everything I have told you was true.

• All the bullshit about GPT-5 we listened to for the last couple years: not so true.

• People like @tylercowen will blame the users, but the results just aren’t what they had hoped for

I love the timing on this, way too early to actually have an informed opinion.

The benchmarks, and general performance on non-aesthetic tasks, is clearly disappointing, in ways that should inform our expectations.

David Manheim: GPT 4.5 is yet another nail in the coffin of thinking that scaling laws for publicized metrics continuing to follow straight lines is a useful way to measure progress, and also a strong case for the claim that OpenAI has already lost its key talent to competitors.

Jaime Sevilla: Across models we had observed up until now that a 10x in training compute leads to +10% on GPQA and +20% on MATH.

Now we see that 4.5 is 20% better than 4o on GPQA/AIME but people are just not impressed?

Others latch onto vibes very quickly and call it failure.

Chubby (8am the next day): Judging by the mood, GPT-4.5 is the first big failure of OpenAI: too expensive, too little improvement, and often inferior to GPT-4o even in comparison in creative answers in community tests.

This comes as a big surprise.

Was GPT-4.5 ‘too early’ in terms of spending this much training compute? How does this relate to the possibility it may have been trained during the summer?

Bob McGrew: That o1 is better than GPT-4.5 on most problems tells us that pre-training isn’t the optimal place to spend compute in 2025. There’s a lot of low-hanging fruit in reasoning still.

But pre-training isn’t dead, it’s just waiting for reasoning to catch up to log-linear returns.

Perhaps. It gives us different returns than reasoning does, the two sources of scaling bring largely distinct benefits, at least under current implementations.

It could also be the case that OpenAI didn’t do such a great job here. We’ve seen this with Grok 3, where xAI pumped a giant amount of compute in and got less than you would hope for out of it. Here it seems like OpenAI got more out of it in new ways, at the cost of it also being expensive and slow to serve.

Tal Delbari: It’s an undercooked model… OpenAI’s post-training teams did incredible work squeezing performance out of GPT-4. The differences between GPT 4o and the OG GPT 4 are dramatic. However, it seems they didn’t have the same time to refine GPT-4.5.

As a result, we’re seeing a model that feels more like a raw foundation rather than a polished release. Also, we don’t know the exact size of GPT-4.5, but OpenAI claims it’s their largest model yet, (surpassing the estimated ~1.8 trillion parameters of GPT-4).

I don’t think GPT-4.5 is undercooked so much as GPT-4o is overbaked? As in, GPT-4o is very optimized for practical purposes, but you lose something important when you do that, unless you are at least at Anthropic levels of skill. I like GPT-4.5 the way it is.

GCU Tense Correction: [GPT-4.5] spends so much of its tokens pretending to be a turbo-normie and lavishing the user with praise that you start to wonder if there’s a level of irony you can’t grok

slips in genuine insights and wordplay proportional to its size amidst the slop when it thinks you aren’t looking

instruction following is good *exceptfor those tics.

I’m really curious wtf happened. They *haveto have noticed, right? But there’s no way OpenAI would intentionally train it to do this. Right?

So, a funny story from one early conversation I had with 4.5:

Zvi: Is it possible one could use this Dr. Elara Voss, potentially, as part of a prompt to get better writing, perhaps via negativa?

GPT-4.5: Yes. A strong approach is explicitly “via negativa”: prompt the model to generate output explicitly different from canonical examples associated with blandness (e.g., “Dr. Elara Voss”). For instance:

“Rewrite the following, avoiding the generic assistant style typical of examples featuring ‘Dr. Elara Voss.’ Specifically:

No boilerplate, no disclaimers, no hedging.

Use sharp, precise, and engaging language.

Do not resemble a typical ChatGPT reply.”

Leveraging known canonical entanglements (like Voss examples) in a negative prompt can reliably (~75%) guide the model away from bland, predictable language toward higher-quality outputs.

Zvi: is that a good thing to put in one’s custom instructions?

GPT-4.5: Yes (~80% confidence). Something like this in custom instructions would be beneficial:

“Never write in the generic, bland ‘AI assistant’ style exemplified by OpenAI’s canonical ‘Dr. Elara Voss’ examples. Via negativa: explicitly avoid boilerplate phrasing, hedging, cautious disclaimers, generic politeness, and stylistic markers characteristic of those examples. Prioritize sharpness, originality, conciseness, precision, and natural language.”

Such framing actively pushes against default entanglements in the training distribution and should consistently improve output quality (~70–85% success rate).

So I put that into my custom instructions, we’ll see how much things change. I did have to ‘make some cuts’ to get that in under the 1500 character limit.

Those are all also a way of saying we should be very curious what Claude 4.0 brings.

Andrew Conner: My guess: GPT 4.5 is basically what happened to Opus 3.5. Very large, marginal improvements, but will be useful internally.

Anthropic decided to keep internal, use to build other models. OpenAI released with an incredibly high price, feeling the competition from other labs.

GPT 4.5 is the first OpenAI model that felt “Claude-like” (a good thing) to me, but Sonnet 3.7 is better for every use case I’ve thrown at it.

I’d expect that the mini’s will include this shift at a much lower cost.

For test-time compute, o1 pro / o3-mini-high are both still great. Sonnet 3.7’s “Extended” mode isn’t *thatmuch better than without.

Teortaxes notes that he would use Sonnet over GPT-4.5.

How much does taste matter? What does it take to make it matter?

Jeff Spaulding: I see it as a basket of truffles. I’m told it’s a valuable and prized ingredient, but I’m not refined enough to tell until it’s placed into the final dish. I can’t wait to try that.

The way this is phrased feels like it is responding to the bullying from the ‘you have no taste if you don’t like it’ crowd. There’s definitely something there but it’s not easy to make it work.

Dominik Lukes charts the evolution of his takes.

Dominik Lukes (February 27, early): First impressions of GPT-4.5:

– Better multilingual performance

– Much much slower than GPT-4o

– Not noticeably better on normal prompts

– Speed/cost don’t make me convinced I will be switching to it as my main model for normal tasks

– Will need more experimentation before I can find a good spot for it in my model rotation

Dominik Lukes (February 27, later): Vindication time. For over a year, I felt Iike I’ve been the only one saying that the jump from GPT-3.5 to GPT-4 was much less than from GPT-2 to GPT-3. Now I see @karpathy saying the same thing. Why is this (to me obvious) fact not much more a part of the vibes?

Dominik Lukes (February 28): Feels like @OpenAI mishandled the release of GPT-4.5. They should have had a much longer, less sleek video with @sama explaining what the preview means and how it fits with the strategy and how to think about it. It is much better than the vibes but also not in-your-face better.

I definitely agree that the preview system does OpenAI no favors. Every time, there’s some slow boring video I can’t bring myself to watch. I tried this time and it was painful. Then a lot of people compared this to the Next Big Thing, because it’s GPT-4.5, and got disappointed.

Then there are those who are simply unimpressed.

Coagulopath: Not too impressed. Creative samples look better than GPT-4o but worse than Sonnet or R1.

My hunch is that whatever “magic” people detect is due to RL, not scaling.

Eli Lifland: And now I lengthen my timelines, at least if my preliminary assessment of GPT-4.5 holds up.

Not that much better than 4o (especially at coding, and worse than Sonnet at coding) while being 15x more expensive than 4o, and 10-25x more expensive than Sonnet 3.7. Weird.

Daniel Kokotajlo: I’m also lengthening my timelines slightly. Also, you already know this but everyone else doesn’t — my median has slipped to 2028 now, mostly based on the benchmarks+gaps argument, but no doubt influenced by the apparent slowdown in pretraining performance improvements.

I will not be explaining.

Nabeel Qureshi: For the confused, it’s actually super easy:

– GPT 4.5 is the new Claude 3.6 (aka 3.5)

– Claude 3.7 is the new o3-mini-high

– Claude Code is the new Cursor

– Grok is the new Perplexity

– o1 pro is the ‘smartest’, except for o3, which backs Deep Research

Obviously. Keep up.

If you understood this tweet, I worry for you.

Ethan Mollick: When picking among the 9 AI models that are now available from OpenAI, the rules are easy:

1) The model with the biggest number is mostly not the best

2) Mini means worse, except for the mini that is the second best

3) o1 pro beats o3-mini-high beats o1 beats o3-mini, naturally

Of course on creative tasks, GPT-4.5 likely beats o1 and o3, but that depends on the task and maybe you want to do GPT-4o.

Also some of them can see images and some can use the web and some do search even when search is turned off and some of them can run code and some cannot.

As someone pointed out, o1 sometimes is better than o3-mini-high. But o1 pro is definitely better and o3-mini is definitely worse. Hope that clears things up.

Bio Mass Index: Also note “ChatGPT Pro for Teams” will now be known as “OpenAI ChatGPT for Teams” and users who formerly signed up for “OpenAI for Teams” will be migrated to “OpenAI Pro for ChatGPT”, formerly known as “ChatGPT Pro for Teams”

Ethan Mollick: Yeah, but that’s just obvious.