Apple will update iOS notification summaries after BBC headline mistake

Nevertheless, it’s a serious problem when the summaries misrepresent news headlines, and edge cases where this occurs are unfortunately inevitable. Apple cannot simply fix these summaries with a software update. The only answers are either to help users understand the drawbacks of the technology so they can make better-informed judgments or to remove or disable the feature completely. Apple is apparently going for the former.

We’re oversimplifying a bit here, but generally, LLMs like those used for Apple’s notification summaries work by predicting portions of words based on what came before and are not capable of truly understanding the content they’re summarizing.
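To make "predicting based on what came before" concrete, here is a deliberately tiny sketch in Python. It is not how Apple's models work: real LLMs use neural networks over sub-word tokens and far longer context. The function names (`train_bigrams`, `predict_next`) and the sample text are made up for illustration. The toy shares only one trait with the real thing: it picks a likely continuation from patterns it has seen, with no notion of whether the result is true.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count which word follows each word in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        following[prev][nxt] += 1
    return following

def predict_next(following, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = following.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical training text, invented for this example.
corpus = "the suspect was arrested . the suspect was charged . the suspect was arrested"
model = train_bigrams(corpus)

# The model continues "was" with whatever followed it most often,
# regardless of what actually happened in any particular story.
print(predict_next(model, "was"))
```

The predictor answers from frequency alone, which is why a plausible but wrong continuation is always possible.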

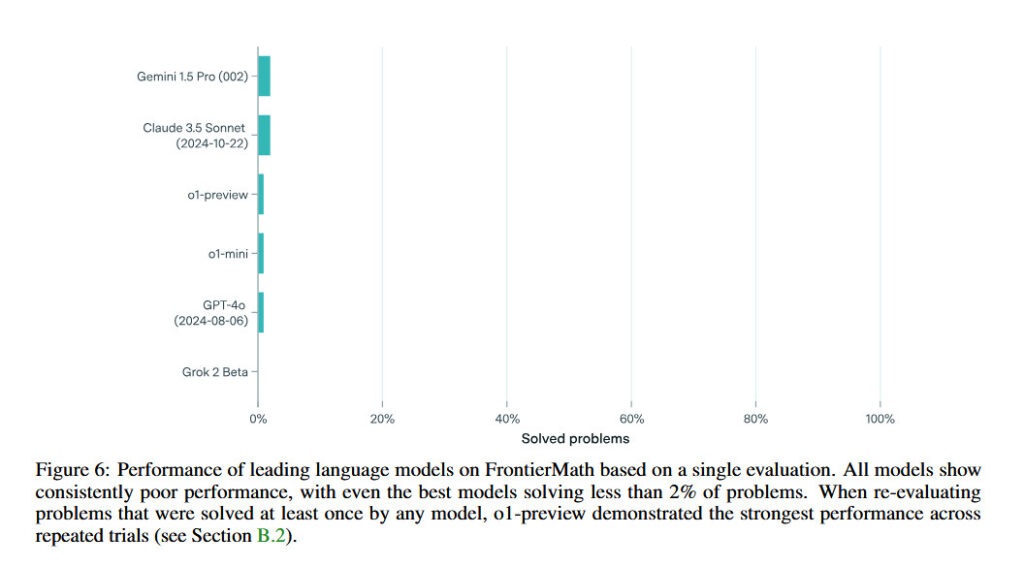

Further, these predictions are known to be inaccurate some of the time, with incorrect results occurring a few times per 100 or 1,000 outputs. As the models are trained further and improved, the error rate may fall, but it never reaches zero, and countless summaries are being produced every day.

Deploying this technology at scale without users (or even the BBC, it seems) really understanding how it works is risky at best, whether it’s with the iPhone’s summaries of news headlines in notifications or Google’s AI summaries at the top of search engine results pages. Even if the vast majority of summaries are perfectly accurate, there will always be some users who see inaccurate information.

These summaries are read by so many millions of people that the scale of errors will always be a problem, almost no matter how comparatively accurate the models get.
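A rough back-of-the-envelope sketch in Python shows why scale dominates here. The daily volume below is a hypothetical figure, not a number from Apple, and the calculation assumes each summary fails independently with the same probability, which is a simplification.

```python
def expected_errors(num_summaries, one_in):
    """Expected number of wrong summaries if 1 in `one_in` outputs is wrong."""
    return num_summaries / one_in

def prob_at_least_one_error(num_summaries, one_in):
    """Chance that at least one summary is wrong, assuming independent errors."""
    return 1.0 - (1.0 - 1.0 / one_in) ** num_summaries

# Hypothetical volume: 10 million summaries per day (an assumption).
DAILY_SUMMARIES = 10_000_000

for one_in in (100, 1_000, 100_000):
    wrong = expected_errors(DAILY_SUMMARIES, one_in)
    print(f"1 error per {one_in:>7,} outputs: about {wrong:,.0f} wrong summaries per day")
```

Under these assumptions, even a model that is wrong only once per 100,000 outputs would still produce about a hundred incorrect summaries every day.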

We wrote at length a few weeks ago about how the Apple Intelligence rollout seemed rushed, counter to Apple’s usual focus on quality and user experience. However, with current technology, no amount of refinement would have let Apple reach a zero percent error rate with these notification summaries.

We’ll see how well Apple does making its users understand that the summaries may be wrong, but making all iPhone users truly grok how and why the feature works this way would be a tall order.
