OpenAI reportedly nears breakthrough with “reasoning” AI, reveals progress framework

studies in hype-otheticals

Five-level AI classification system probably best seen as a marketing exercise.

OpenAI recently unveiled a five-tier system to gauge its advancement toward developing artificial general intelligence (AGI), according to an OpenAI spokesperson who spoke with Bloomberg. The company shared this new classification system on Tuesday with employees during an all-hands meeting, aiming to provide a clear framework for understanding AI advancement. However, the system describes hypothetical technology that does not yet exist and is possibly best interpreted as a marketing move to garner investment dollars.

OpenAI has previously stated that AGI is currently the company’s primary goal. AGI is a nebulous term for a hypothetical AI system that can perform novel tasks like a human without specialized training. The pursuit of technology that can replace humans at most intellectual work drives most of the enduring hype over the firm, even though such a technology would likely be wildly disruptive to society.

OpenAI CEO Sam Altman has previously stated his belief that AGI could be achieved within this decade, and a large part of the CEO’s public messaging has been related to how the company (and society in general) might handle the disruption that AGI may bring. Along those lines, a ranking system to communicate AI milestones achieved internally on the path to AGI makes sense.

OpenAI’s five levels—which it plans to share with investors—range from current AI capabilities to systems that could potentially manage entire organizations. The company believes its technology (such as GPT-4o that powers ChatGPT) currently sits at Level 1, which encompasses AI that can engage in conversational interactions. However, OpenAI executives reportedly told staff they’re on the verge of reaching Level 2, dubbed “Reasoners.”

Bloomberg lists OpenAI’s five “Stages of Artificial Intelligence” as follows:

- Level 1: Chatbots, AI with conversational language

- Level 2: Reasoners, human-level problem solving

- Level 3: Agents, systems that can take actions

- Level 4: Innovators, AI that can aid in invention

- Level 5: Organizations, AI that can do the work of an organization

A Level 2 AI system would reportedly be capable of basic problem-solving on par with a human who holds a doctoral degree but lacks access to external tools. During the all-hands meeting, OpenAI leadership reportedly demonstrated a research project using the company’s GPT-4 model that researchers believe shows signs of approaching this human-like reasoning ability, according to someone familiar with the discussion who spoke with Bloomberg.

The upper levels of OpenAI’s classification describe increasingly potent hypothetical AI capabilities. Level 3 “Agents” could work autonomously on tasks for days. Level 4 systems would generate novel innovations. The pinnacle, Level 5, envisions AI managing entire organizations.

This classification system is still a work in progress. OpenAI plans to gather feedback from employees, investors, and board members, potentially refining the levels over time.

Ars Technica asked OpenAI about the ranking system and the accuracy of the Bloomberg report, and a company spokesperson said they had “nothing to add.”

The problem with ranking AI capabilities

OpenAI isn’t alone in attempting to quantify levels of AI capabilities. As Bloomberg notes, OpenAI’s system feels similar to the levels of autonomous driving mapped out by automakers. And in November 2023, researchers at Google DeepMind proposed their own five-level framework for assessing AI advancement, showing that other AI labs have also been trying to figure out how to rank things that don’t yet exist.

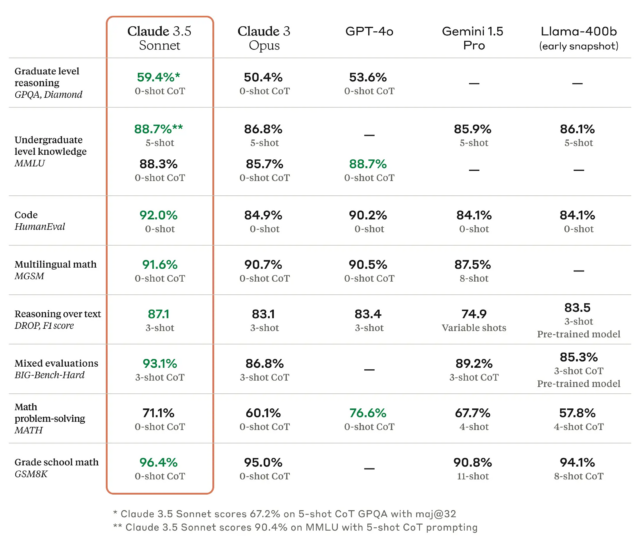

OpenAI’s classification system also somewhat resembles Anthropic’s “AI Safety Levels” (ASLs) first published by the maker of the Claude AI assistant in September 2023. Both systems aim to categorize AI capabilities, though they focus on different aspects. Anthropic’s ASLs are more explicitly focused on safety and catastrophic risks (such as ASL-2, which refers to “systems that show early signs of dangerous capabilities”), while OpenAI’s levels track general capabilities.

However, any AI classification system raises questions about whether it’s possible to meaningfully quantify AI progress and what constitutes an advancement (or even what constitutes a “dangerous” AI system, as in the case of Anthropic). The tech industry has a history of overpromising on AI capabilities, and linear progression models like OpenAI’s risk fueling unrealistic expectations.

There is currently no consensus in the AI research community on how to measure progress toward AGI, or even on whether AGI is a well-defined or achievable goal. As such, OpenAI’s five-tier system should likely be viewed as a communications tool that presents the company’s aspirational goals to entice investors, rather than as a scientific or even technical measurement of progress.
