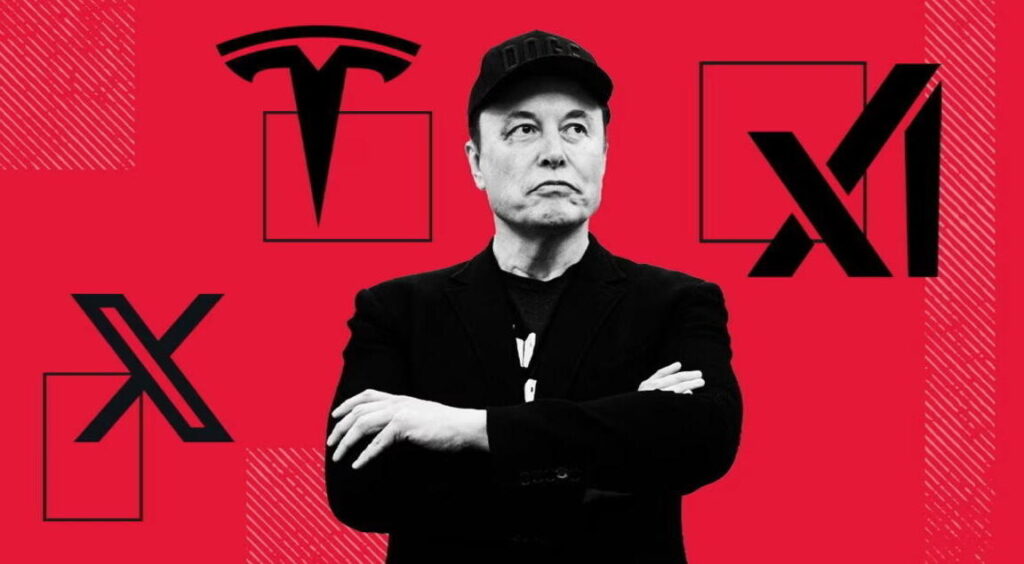

OpenAI mocks Musk’s math in suit over iPhone/ChatGPT integration

“Fraction of a fraction of a fraction”

xAI’s claim that Apple gave ChatGPT a monopoly on prompts is “baseless,” OpenAI says.

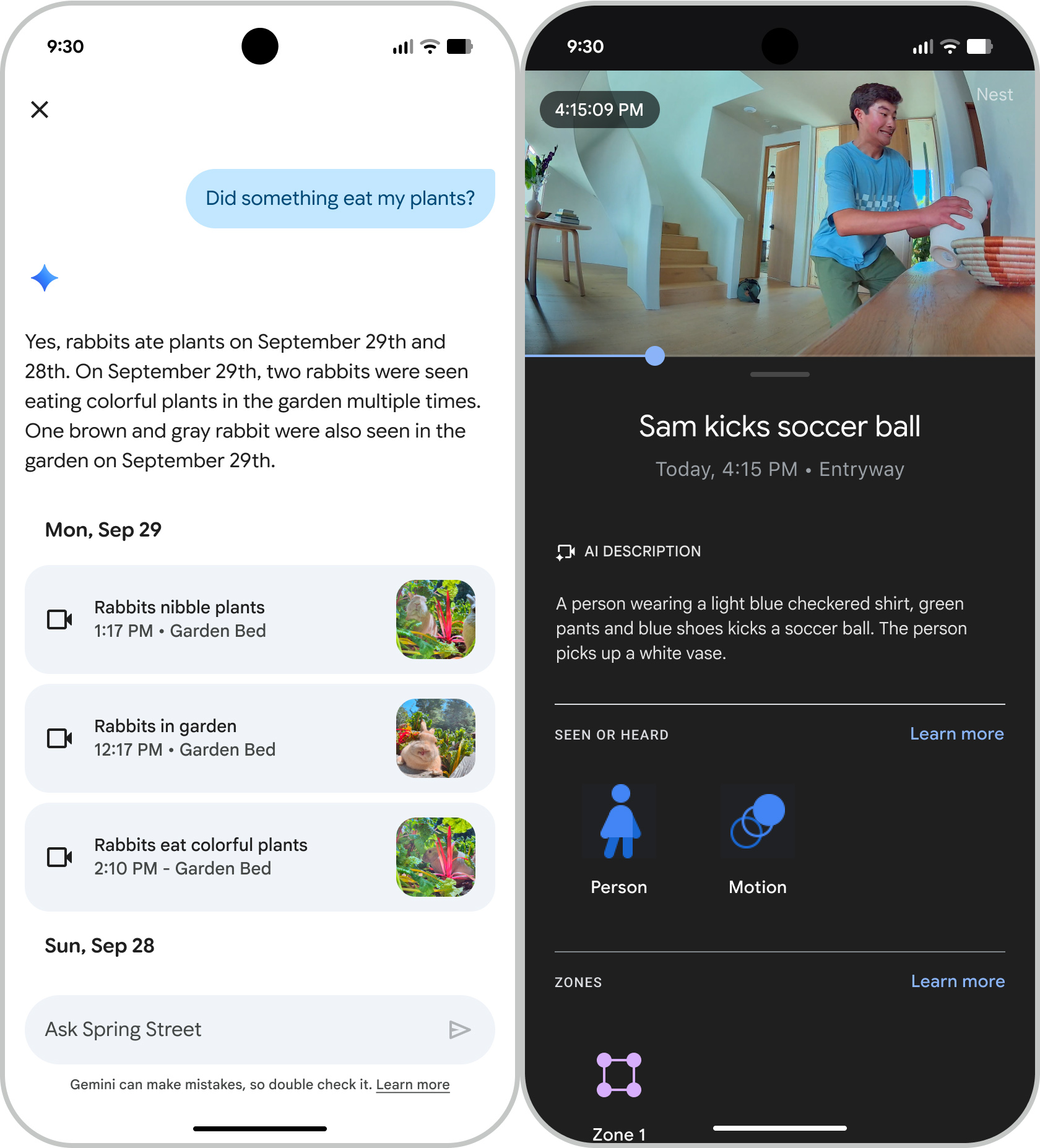

OpenAI and Apple have moved to dismiss a lawsuit by Elon Musk’s xAI, alleging that ChatGPT’s integration into a “handful” of iPhone features violated antitrust laws by giving OpenAI a monopoly on prompts and Apple a new path to block rivals in the smartphone industry.

The lawsuit was filed in August after Musk raged on X about Apple never listing Grok on its editorially curated “Must Have” apps list, which ChatGPT frequently appeared on.

According to Musk, Apple linking ChatGPT to Siri and other native iPhone features gave OpenAI exclusive access to billions of prompts that only OpenAI can use as valuable training data to maintain its dominance in the chatbot market. However, OpenAI and Apple are now mocking Musk’s math in court filings, urging the court to agree that xAI’s lawsuit is doomed.

As OpenAI argued, the estimates in xAI’s complaint seemed “baseless,” with Musk hesitant to even “hazard a guess” at what portion of the chatbot market is being foreclosed by the OpenAI/Apple deal.

xAI suggested that the ChatGPT integration may give OpenAI “up to 55 percent” of the potential chatbot prompts in the market, which could mean anywhere from 0 to 55 percent, OpenAI and Apple noted.

Musk’s company apparently arrived at this vague estimate by doing “back-of-the-envelope math,” and the court should reject his complaint, OpenAI argued. That math “was evidently calculated by assuming that Siri fields ‘1.5 billion user requests per day globally,’ then dividing that quantity by the ‘total prompts for generative AI chatbots in 2024,'”—”apparently 2.7 billion per day,” OpenAI explained.

These estimates “ignore the facts” that “ChatGPT integration is only available on the latest models of iPhones, which allow users to opt into the integration,” OpenAI argued. And for any user who opts in, they must link their ChatGPT account for OpenAI to train on their data, OpenAI said, further restricting the potential prompt pool.

By Musk’s own logic, OpenAI alleged, “the relevant set of Siri prompts thus cannot plausibly be 1.5 billion per day, but is instead an unknown, unpleaded fraction of a fraction of a fraction of that number.”

Additionally, OpenAI mocked Musk for using 2024 statistics, writing that xAI failed to explain “the logic of using a year-old estimate of the number of prompts when the pleadings elsewhere acknowledge that the industry is experiencing ‘exponential growth.'”

Apple’s filing agreed that Musk’s calculations “stretch logic,” appearing “to rest on speculative and implausible assumptions that the agreement gives ChatGPT exclusive access to all Siri requests from all Apple devices (including older models), and that OpenAI may use all such requests to train ChatGPT and achieve scale.”

“Not all Siri requests” result in ChatGPT prompts that OpenAI can train on, Apple noted, “even by users who have enabled devices and opt in.”

OpenAI reminds court of Grok’s MechaHitler scandal

OpenAI argued that Musk’s lawsuit is part of a pattern of harassment that OpenAI previously described as “unrelenting” since ChatGPT’s successful debut, alleging it was “the latest effort by the world’s wealthiest man to stifle competition in the world’s most innovative industry.”

As OpenAI sees it, “Musk’s pretext for litigation this time is that Apple chose to offer ChatGPT as an optional add-on for several built-in applications on its latest iPhones,” without giving Grok the same deal. But OpenAI noted that the integration was rolled out around the same time that Musk removed “woke filters” that caused Grok to declare itself “MechaHitler.” For Apple, it was a business decision to avoid Grok, OpenAI argued.

Apple did not reference the Grok scandal in its filing but in a footnote confirmed that “vetting of partners is particularly important given some of the concerns about generative AI chatbots, including on child safety issues, nonconsensual intimate imagery, and ‘jailbreaking’—feeding input to a chatbot so it ignores its own safety guardrails.”

A similar logic was applied to Apple’s decision not to highlight Grok as a “Must Have” app, their filing said. After Musk’s public rant about Grok’s exclusion on X, “Apple employees explained the objective reasons why Grok was not included on certain lists, and identified app improvements,” Apple noted, but instead of making changes, xAI filed the lawsuit.

Also taking time to point out the obvious, Apple argued that Musk was fixated on the fact that his charting apps never make the “Must Have Apps” list, suggesting that Apple’s picks should always mirror “Top Charts,” which tracks popular downloads.

“That assumes that the Apple-curated Must-Have Apps List must be distorted if it does not strictly parrot App Store Top Charts,” Apple argued. “But that assumption is illogical: there would be little point in maintaining a Must-Have Apps List if all it did was restate what Top Charts say, rather than offer Apple’s editorial recommendations to users.”

Likely most relevant to the antitrust charges, Apple accused Musk of improperly arguing that “Apple cannot partner with OpenAI to create an innovative feature for iPhone users without simultaneously partnering with every other generative AI chatbot—regardless of quality, privacy or safety considerations, technical feasibility, stage of development, or commercial terms.”

“No facts plausibly” support xAI’s “assertion that Apple intentionally ‘deprioritized'” xAI apps “as part of an illegal conspiracy or monopolization scheme,” Apple argued.

And most glaringly, Apple noted that xAI is not a rival or consumer in the smartphone industry, where it alleges competition is being harmed. Apple urged the court to reject Musk’s theory that Apple is incentivized to boost OpenAI to prevent xAI’s ascent in building a “super app” that would render smartphones obsolete. If Musk’s super app dream is even possible, Apple argued, it’s at least a decade off, insisting that as-yet-undeveloped apps should not serve as the basis for blocking Apple’s measured plan to better serve customers with sophisticated chatbot integration.

“Antitrust laws do not require that, and for good reason: imposing such a rule on businesses would slow innovation, reduce quality, and increase costs, all ultimately harming the very consumers the antitrust laws are meant to protect,” Apple argued.

Musk’s weird smartphone market claim, explained

Apple alleged that Musk’s “grievance” can be “reduced to displeasure that Apple has not yet ‘integrated with any other generative AI chatbots’ beyond ChatGPT, such as those created by xAI, Google, and Anthropic.”

In a footnote, the smartphone giant noted that by xAI’s logic, Musk’s social media platform X “may be required to integrate all other chatbots—including ChatGPT—on its own social media platform.”

But antitrust law doesn’t work that way, Apple argued, urging the court to reject xAI’s claims of alleged market harms that “rely on a multi-step chain of speculation on top of speculation.” As Apple summarized, xAI contends that “if Apple never integrated ChatGPT,” xAI could win in both chatbot and smartphone markets, but only if:

1. Consumers would choose to send additional prompts to Grok (rather than other generative AI chatbots).

2. The additional prompts would result in Grok achieving scale and quality it could not otherwise achieve.

3. As a result, the X app would grow in popularity because it is integrated with Grok.

4. X and xAI would therefore be better positioned to build so-called “super apps” in the future, which the complaint defines as “multi-functional” apps that offer “social connectivity and messaging, financial services, e-commerce, and entertainment.”

5. Once developed, consumers might choose to use X’s “super app” for various functions.

6. “Super apps” would replace much of the functionality of smartphones and consumers would care less about the quality of their physical phones and rely instead on these hypothetical “super apps.”

7. Smartphone manufacturers would respond by offering more basic models of smartphones with less functionality.

8. iPhone users would decide to replace their iPhones with more “basic smartphones” with “super apps.”

Apple insisted that nothing in its OpenAI deal prevents Musk from building his super apps, while noting that from integrating Grok into X, Musk understands that integration of a single chatbot is a “major undertaking” that requires “substantial investment.” That “concession” alone “underscores the massive resources Apple would need to devote to integrating every AI chatbot into Apple Intelligence,” while navigating potential user safety risks.

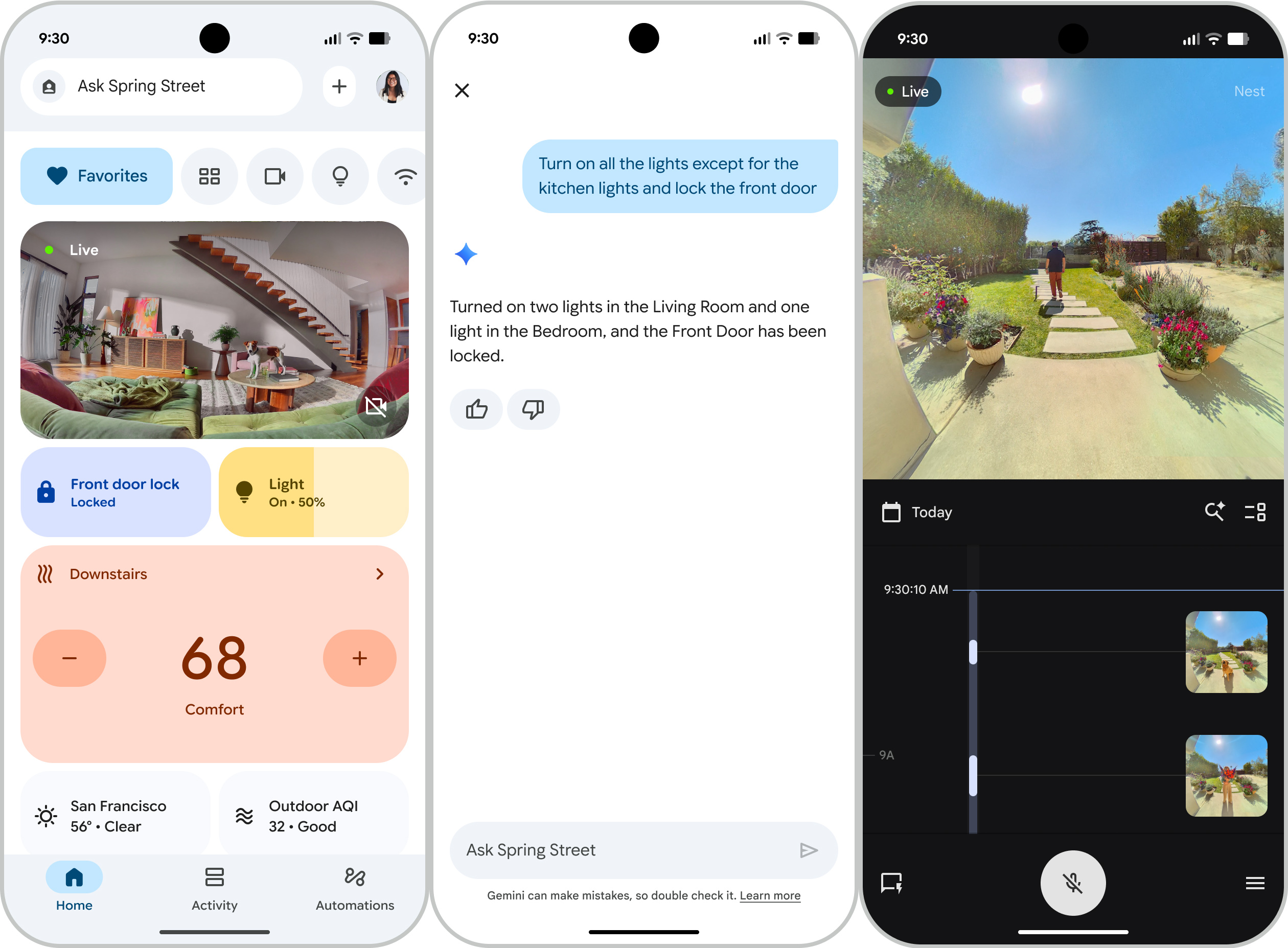

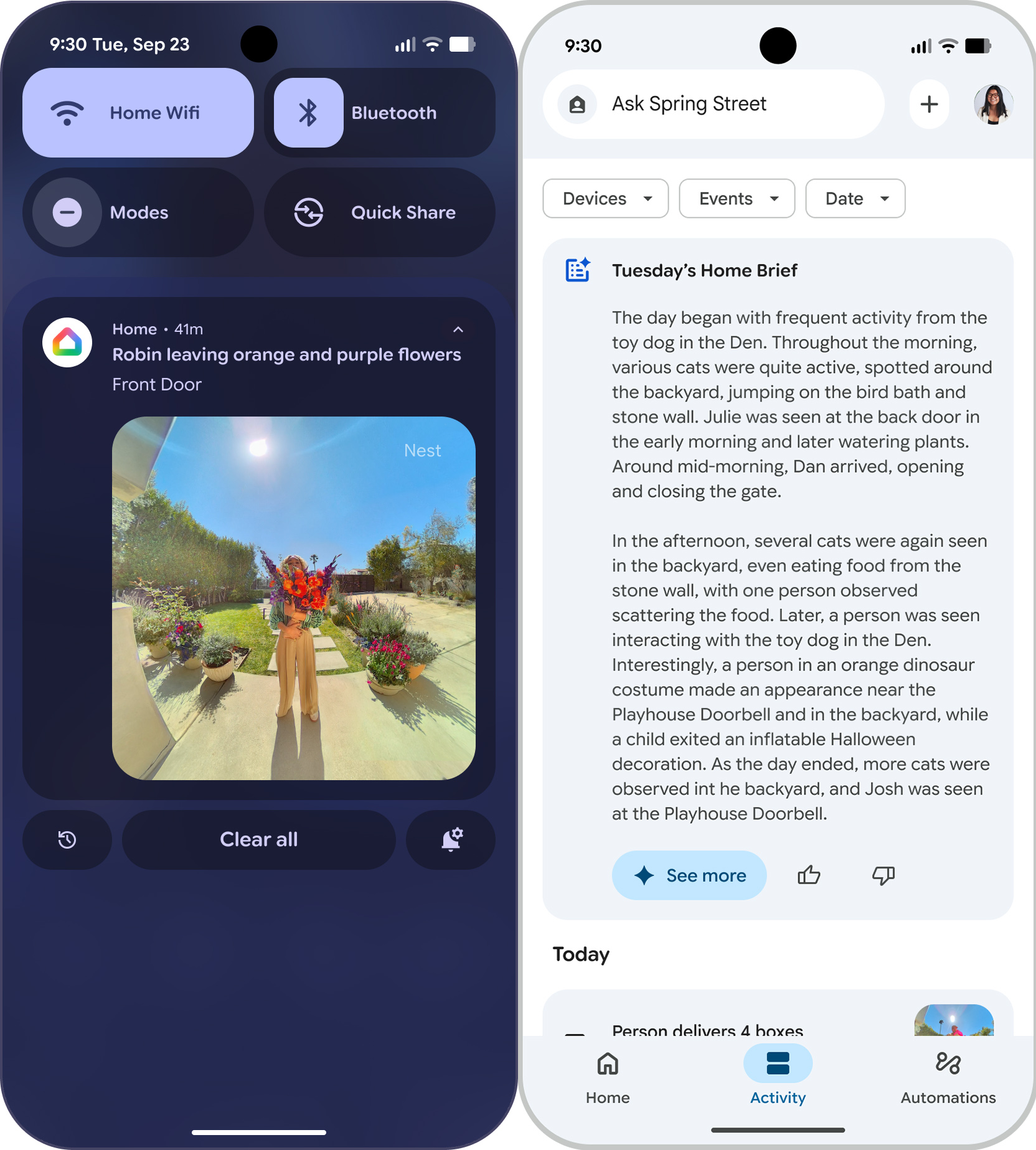

The iPhone maker also reminded the court that it has always planned to integrate other chatbots into its native features after investing in and testing Apple Intelligence’s performance, relying on what Apple deems is the best chatbot on the market today.

Backing Apple up, OpenAI noted that Musk’s complaint seemed to cherry-pick testimony from Google CEO Sundar Pichai, claiming that “Google could not reach an agreement to integrate” Gemini “with Apple because Apple had decided to integrate ChatGPT.”

“The full testimony recorded in open court reveals Mr. Pichai attesting to his understanding that ‘Apple plans to expand to other providers for Generative AI distribution’ and that ‘[a]s CEO of Google, [he is] hoping to execute a Gemini distribution agreement with Apple’ later in 2025,” OpenAI argued.

OpenAI mocks Musk’s math in suit over iPhone/ChatGPT integration Read More »