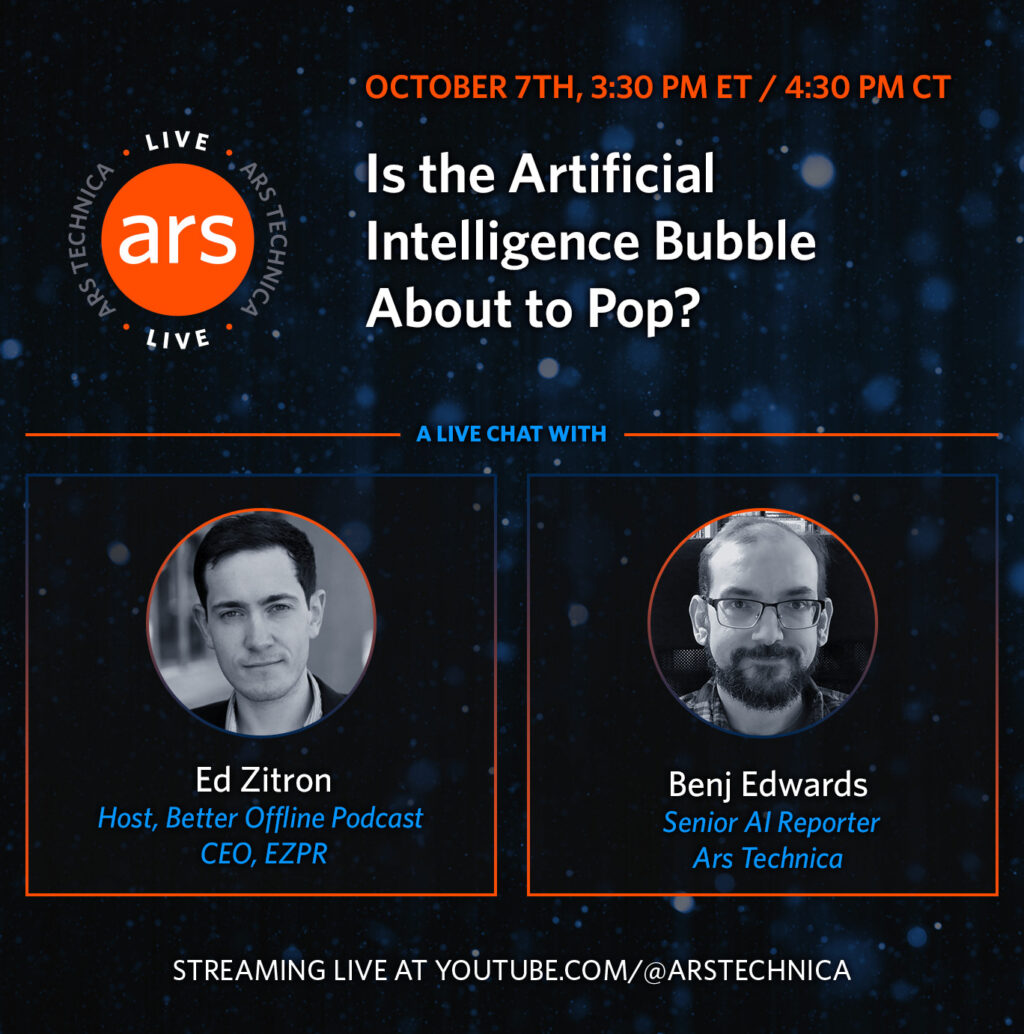

Ars Live: Is the AI bubble about to pop? A live chat with Ed Zitron.

As generative AI has taken off since ChatGPT’s debut, inspiring hundreds of billions of dollars in investments and infrastructure developments, the top question on many people’s minds has been: Is generative AI a bubble, and if so, when will it pop?

To help us potentially answer that question, I’ll be hosting a live conversation with prominent AI critic Ed Zitron on October 7 at 3:30 pm ET as part of the Ars Live series. As Ars Technica’s senior AI reporter, I’ve been tracking both the explosive growth of this industry and the mounting skepticism about its sustainability.

You can watch the discussion live on YouTube when the time comes.

Zitron is the host of the Better Offline podcast and CEO of EZPR, a media relations company. He writes the newsletter Where’s Your Ed At, where he frequently dissects OpenAI’s finances and questions the actual utility of current AI products. His recent posts have examined whether companies are losing money on AI investments, the economics of GPU rentals, OpenAI’s trillion-dollar funding needs, and what he calls “The Subprime AI Crisis.”

Credit: Ars Technica

During our conversation, we’ll dig into whether the current AI investment frenzy matches the actual business value being created, what happens when companies realize their AI spending isn’t generating returns, and whether we’re seeing signs of a peak in the current AI hype cycle. We’ll also discuss what it’s like to be a prominent and sometimes controversial AI critic amid the drumbeat of AI mania in the tech industry.

While Ed and I don’t see eye to eye on everything, his sharp criticism of the AI industry’s excesses should make for an engaging discussion about one of tech’s most consequential questions right now.

Please join us for what should be a lively conversation about the sustainability of the current AI boom.
