Google’s updated Veo model can make vertical videos from reference images with 4K upscaling

Enhanced support for Ingredients to Video and the associated vertical outputs are live in the Gemini app today, as well as in YouTube Shorts and the YouTube Create app, fulfilling a promise initially made last summer. Veo videos are short—just eight seconds long for each prompt. It would be tedious to assemble those into a longer video, but Veo is perfect for the Shorts format.

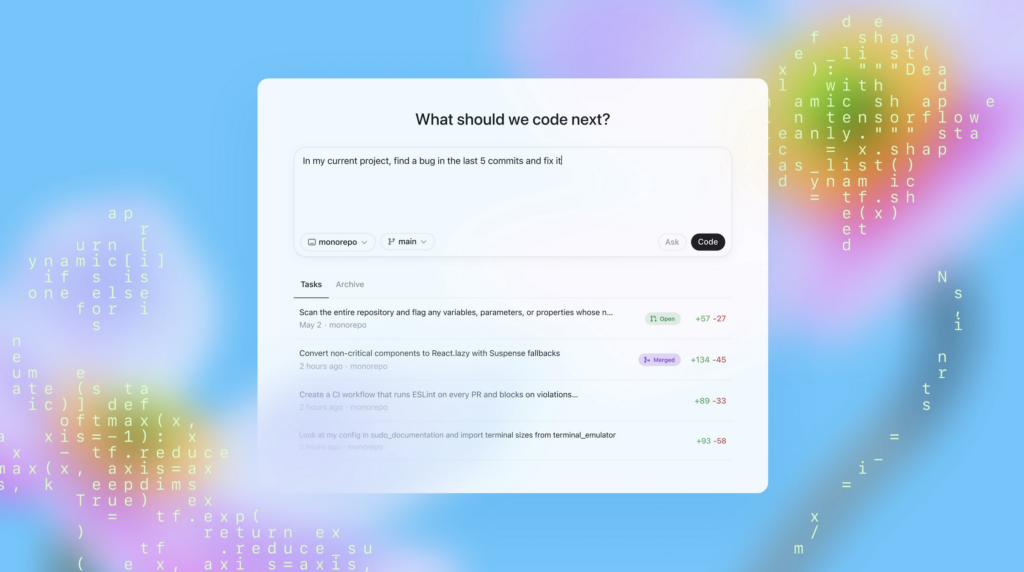

Veo 3.1 Updates – Seamlessly blend textures, characters, and objects.

The new Veo 3.1 update also adds an option for higher-resolution video. The model now supports 1080p and 4K outputs. Google debuted 1080p support last year, but it’s mentioning that option again today, suggesting there may be some quality difference. 4K support is new, but neither 1080p nor 4K outputs are native. Veo creates everything in 720p resolution, but that output can be upscaled “for high-fidelity production workflows,” according to Google. However, a Google rep tells Ars that upscaling is only available in Flow, the Gemini API, and Vertex AI. Video in the Gemini app is always 720p.

We are rushing into a world where AI video is essentially indistinguishable from real life. Google, which more or less controls online video via YouTube’s dominance, is at the forefront of that change. Today’s update is reasonably significant, and it didn’t even warrant a version number change. Perhaps we can expect more 2025-style leaps in video quality this year, for better or worse.