Chrome OS is “combining” with Android, but what does that mean?

Android and Chrome OS have been developed in parallel for years, but Google is planning to streamline its operating systems. In a recent interview, Android Ecosystem President Sameer Samat stated bluntly that Android and Chrome OS are merging. This shift, a long time in the making, could give Google more room to maneuver as it plans for new mobile computing experiences.

In the interview, TechRadar’s Lance Ulanoff had other topics in mind, but Samat peppered him with questions about how he uses his Apple devices. “I asked because we’re going to be combining ChromeOS and Android into a single platform, and I am very interested in how people are using their laptops these days and what they’re getting done,” said Samat.

We don’t get back to this point in the remainder of the interview, but it’s probably the most interesting thing Samat said. “Combining” can mean many things, but we can certainly speculate. In this case, it might mean the writing is on the wall for Chrome OS as it currently exists.

Chrome OS definitely had a moment during the pandemic as new remote workers and students sought cheap laptops to get them by. Google worked with multiple OEM partners to promote major Chromebook releases, and Chrome OS itself got big updates. Google expanded the support window to eight years, added Phone Hub integration, enhanced desktop management, added the Chromebook Plus certification for high-end devices, and much more.

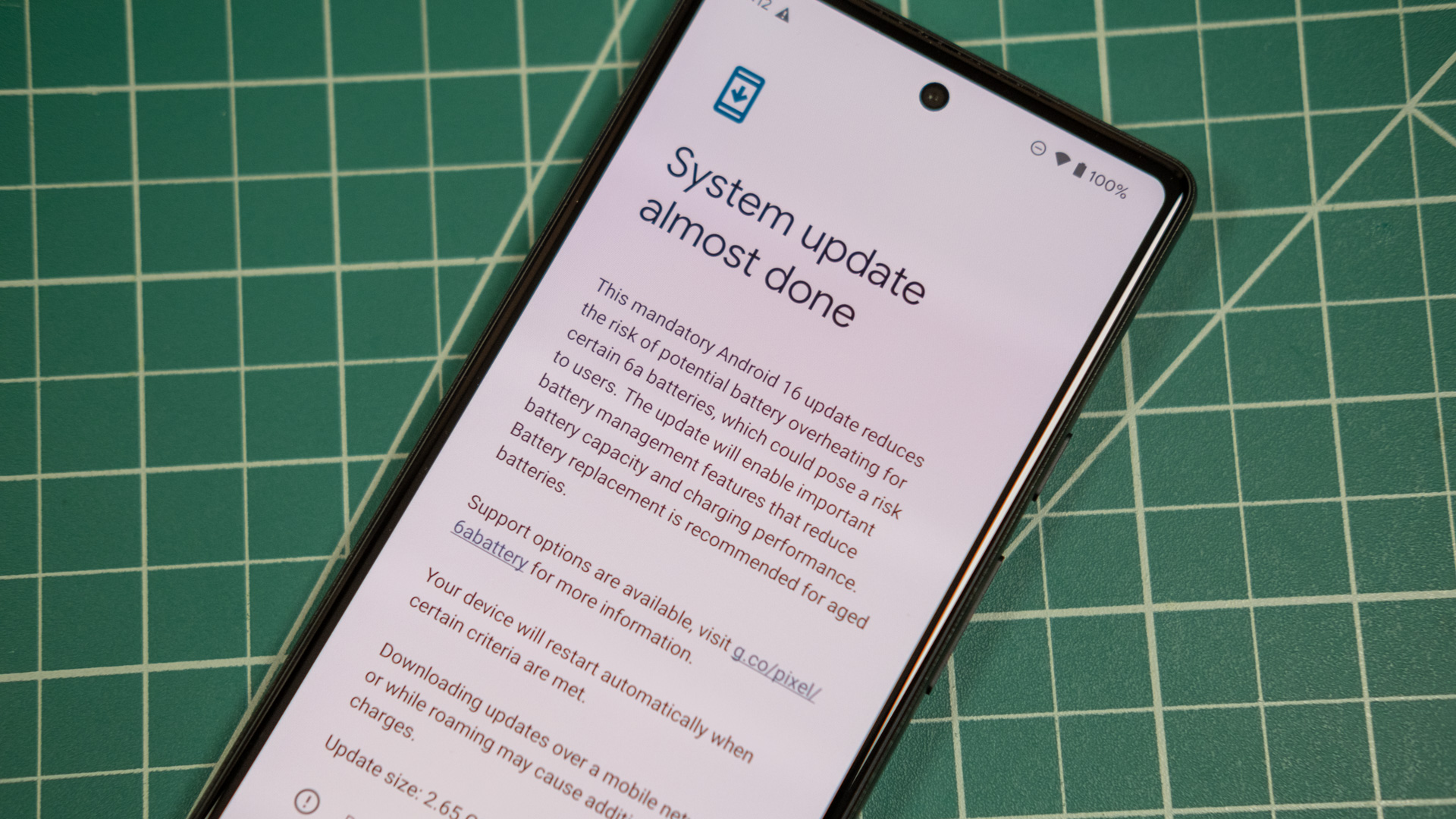

Things have stagnated since then, and we hardly ever hear Google talk about Chrome OS now. In the age of AI, Google still finds time to talk about Android and add new features to the platform, even if those features no longer arrive in step with new Android versions. In fact, Android is becoming a bit more like Chrome OS with the addition of desktop multitasking support, which will roll out in the coming months. So Google is making Android into a more capable desktop OS while Chrome OS stays the course. There have been some reports of Chrome OS essentially becoming Android, going beyond Google’s stated goal of using parts of the Android tech stack on Chromebooks.
