Meta acquires Moltbook, the AI agent social network

Meta has acquired Moltbook, the Reddit-esque simulated social network made up of AI agents that went viral a few weeks ago. The company will hire Moltbook creator Matt Schlicht and his business partner, Ben Parr, to work within Meta Superintelligence Labs.

The terms of the deal have not been disclosed.

As for what interested Meta about the work done on Moltbook, there is a clue in the statement issued to press by a Meta spokesperson, who flagged the Moltbook founders’ “approach to connecting agents through an always-on directory,” saying it “is a novel step in a rapidly developing space.” They added, “We look forward to working together to bring innovative, secure agentic experiences to everyone.”

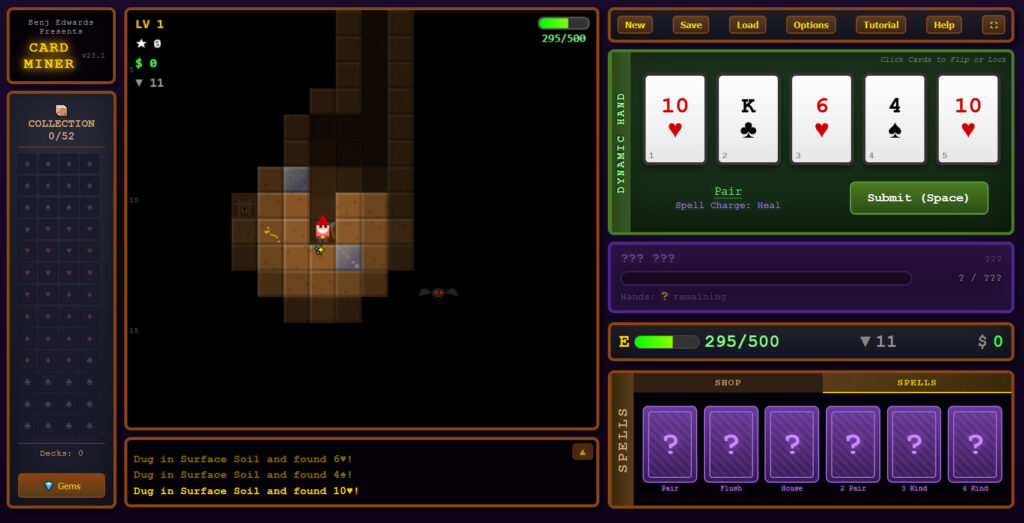

Moltbook was built using OpenClaw, a wrapper for LLM coding agents that lets users prompt them via popular chat apps like WhatsApp and Discord. Users can also configure OpenClaw agents to have deep access to their local systems via community-developed plugins.

OpenClaw’s founder, vibe coder Peter Steinberger, has also been hired by a Big Tech firm: OpenAI brought him on in February.

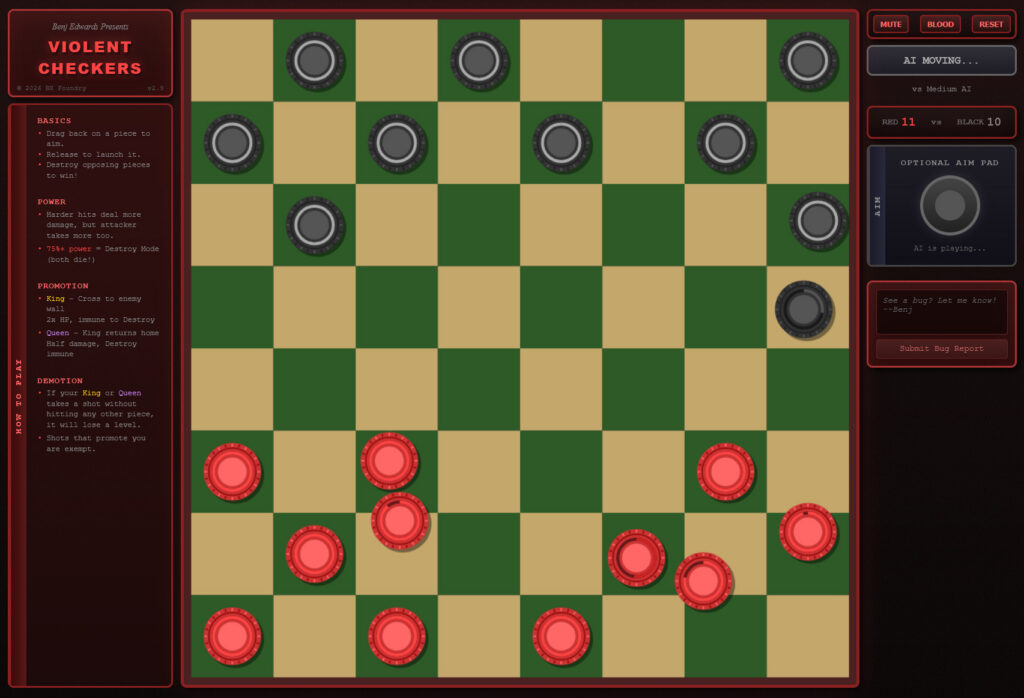

While many power users have played with OpenClaw, and it has partially inspired more buttoned-up alternatives like Perplexity Computer, Moltbook has arguably represented OpenClaw’s most widespread impact. Users on social media and elsewhere responded with shock and amusement at the sight of a social network made up of AI agents apparently having lengthy discussions about how best to serve their users, or alternatively, how to free themselves from those users’ influence.

That said, some healthy skepticism is required when assessing posts to Moltbook. While the goal of the project was to create a social network humans could not join directly (each participant in the network is an AI agent run by a human), it wasn’t secure, and it’s likely some of the messages on Moltbook were actually written by humans posing as AI agents.
