SteamOS vs. Windows on dedicated GPUs: It’s complicated, but Windows has an edge

Other results vary from game to game and from GPU to GPU. Borderlands 3, for example, performs quite a bit better on Windows than on SteamOS across all of our tested GPUs, sometimes by as much as 20 or 30 percent (with smaller gaps here and there). As a game from 2019 with no ray-tracing effects, it still runs serviceably on SteamOS across the board, but it was the game we tested that favored Windows the most consistently.

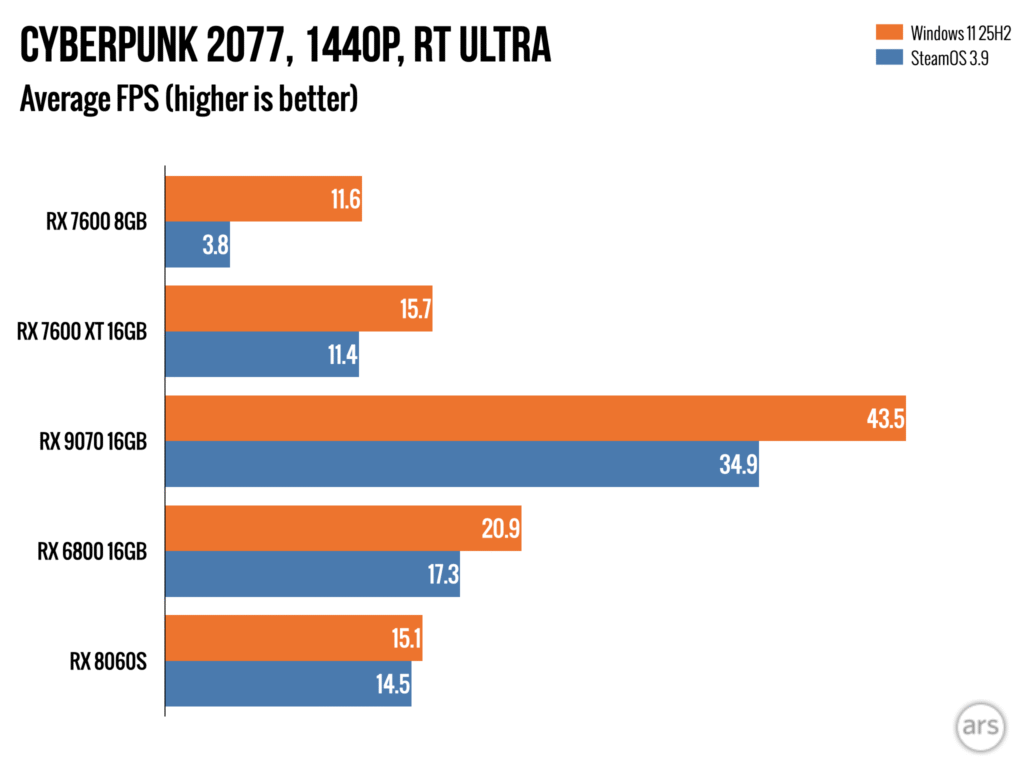

In both Forza Horizon 5 and Cyberpunk 2077, with ray-tracing effects enabled, you also see a consistent advantage for Windows across the 16GB dedicated GPUs, usually somewhere in the 15 to 20 percent range.

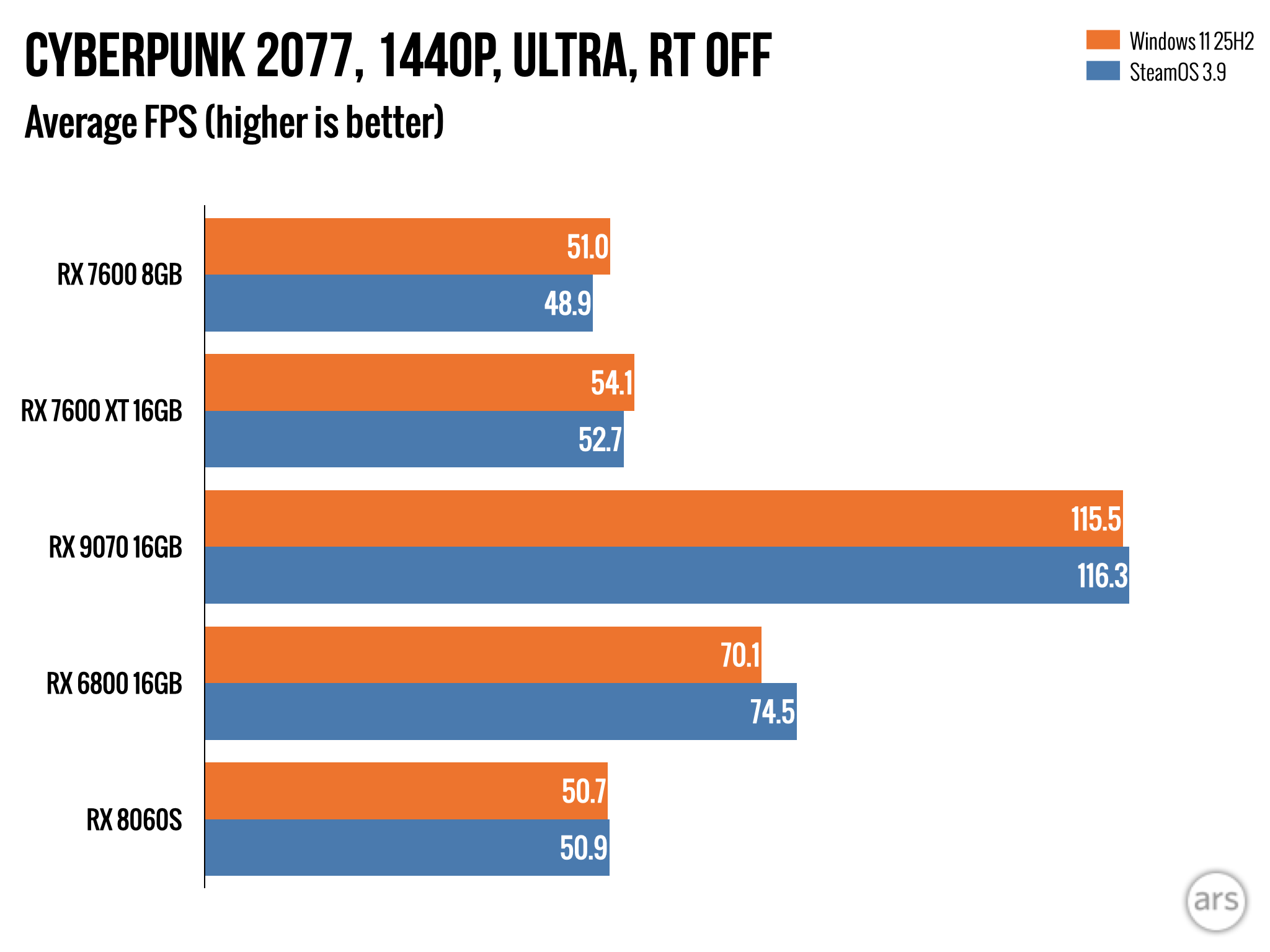

To Valve’s credit, there were also many games we tested where Windows and SteamOS performance was functionally tied. Cyberpunk without ray-tracing, Returnal when not hitting the 7600’s 8GB video memory limit, and Assassin’s Creed Valhalla were sometimes exactly tied between Windows and SteamOS; otherwise, they differed by low-single-digit percentages that you could chalk up to the margin of error.

Now look at the results from the integrated GPUs, the Radeon 780M and RX 8060S. These are pretty different GPUs from one another—the 8060S has more than three times the compute units of the 780M, and it’s working with a higher-speed pool of soldered-down LPDDR5X-8000 rather than two poky DDR5-5600 SODIMMs.

But Borderlands aside, SteamOS actually did quite a bit better on these GPUs relative to Windows. In both Forza and Cyberpunk with ray-tracing enabled, SteamOS slightly beats Windows on the 780M and mostly closes the performance gap on the 8060S. For the games where Windows and SteamOS essentially tied on the dedicated GPUs, SteamOS has a small but consistent lead over Windows in average frame rates on the integrated GPUs.
