Cockpit voice recorder survived fiery Philly crash—but stopped taping years ago

Cottman Avenue in northern Philadelphia is a busy but slightly down-on-its-luck urban thoroughfare that has had a strange couple of years.

You might remember the truly bizarre 2020 press conference held—for no discernible reason—at Four Seasons Total Landscaping, a half block off Cottman Avenue, where a not-yet-disbarred Rudy Giuliani led a farcical ensemble of characters in an event so weird it has been immortalized in its own, quite lengthy, Wikipedia article.

Then in 2023, a truck carrying gasoline caught fire just a block away, right where Cottman passes under I-95. The resulting fire damaged I-95 in both directions, bringing down several lanes and closing I-95 completely for some time. (This also generated a Wikipedia article.)

This year, on January 31, a little farther west on Cottman, a Learjet 55 medevac flight crashed one minute after takeoff from Northeast Philadelphia Airport. The plane, fully loaded with fuel for a trip to Springfield, Missouri, came down near a local mall, clipped a commercial sign, and exploded in a fireball when it hit the ground. The crash generated a debris field 1,410 feet long and 840 feet wide, according to the National Transportation Safety Board (NTSB), and it killed six people on the plane and one person on the ground.

The crash was important enough to attract the attention of Pennsylvania Governor Josh Shapiro and Mexican President Claudia Sheinbaum. (The airplane crew and passengers were all Mexican citizens; they were transporting a young patient who had just wrapped up treatment at a Philadelphia hospital.) And yes, it, too, generated a Wikipedia article.

The NTSB has been investigating ever since, hoping to determine the cause of the accident. Tracking data showed that the flight reached an altitude of 1,650 feet before plunging to earth, but the plane’s pilots never conveyed any distress to the local air traffic control tower.

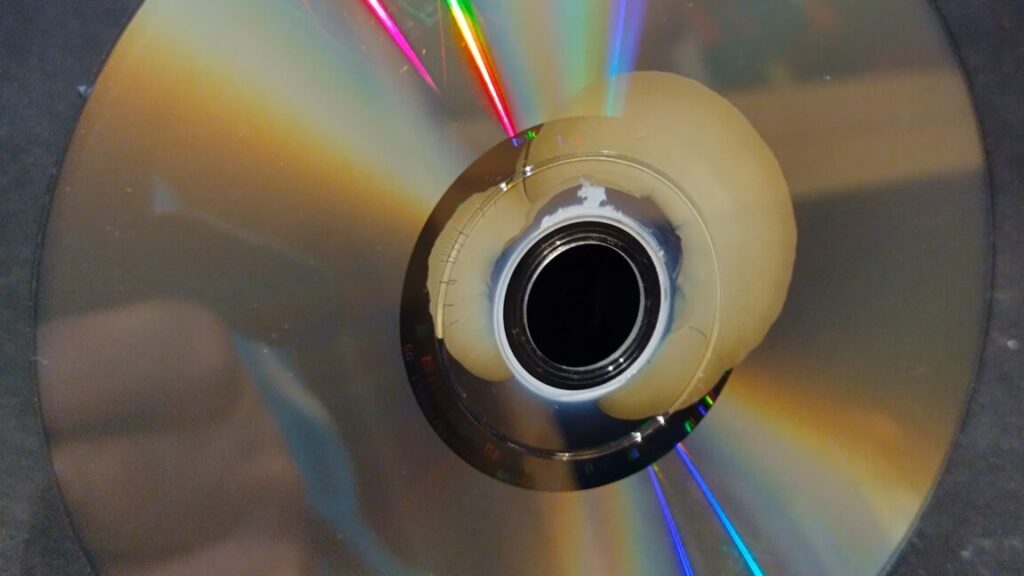

Investigators searched for the plane’s cockpit voice recorder, which might provide clues as to what was happening in the cockpit during the crash. The Learjet did have such a recorder, though it was an older, tape-based model. (Newer ones are solid-state, with fewer moving parts.) Still, even this older tech should have recorded the last 30 minutes of audio, and these units are rated to withstand impacts of 3,400 Gs and to survive fires of 1,100° Celsius (2,012° F) for a half hour. That durability mattered, given that the plane had both crashed directly into the ground and burst into flames.
